As you might’ve seen, Cormac Hogan just posted about an UNMAP fix that was just released. This is a fix I have been eagerly awaiting for some time, so I am very happy to see it released. And thankfully it does not disappoint.

First off, some official information:

Release notes:

https://kb.vmware.com/kb/2148989

Manual patch download:

https://my.vmware.com/group/vmware/patch#search

Or you can run esxcli if you ESXi host has internet access to download and install automatically:

esxcli software profile update -p ESXi-6.5.0-20170304001-standard -d https://hostupdate.vmware.com/software/VUM/PRODUCTION/main/vmw-depot-index.xml

Note, don’t forget to open a hole in the firewall first for this download. Of course, any time you open your firewall you create a security vulnerability, so if this is too much of a risk, go with the manual download method here:

https://my.vmware.com/group/vmware/patch#search

esxcli network firewall ruleset set -e true -r httpClient

Then close it again:

esxcli network firewall ruleset set -e false -r httpClient

The problem

This is about in-guest UNMAP, which I have blogged about quite a bit in the past:

- What’s new in ESXi 6.5 Storage Part IV: In-Guest UNMAP CBT Support

- (Windows) Direct Guest OS UNMAP in vSphere 6.0

- (Linux) What’s new in ESXi 6.5 Storage Part I: UNMAP

So the problem that was solved by this patch was the issue that guest OSes were not sending UNMAP commands down to ESXi that were aligned. ESXi requires that these commands be aligned to 1 MB boundaries and the vast majority of UNMAP commands that were sent down were simply not aligned. Therefore, every UNMAP would fail and no space would be reclaimed. Even if “part” of it was aligned. There were a variety of ways to work around this, such as in Windows:

Allocation Unit Size and Automatic Windows In-Guest UNMAP on VMware

Changing the allocation unit size in NTFS helped make UNMAPs much more likely to be aligned, but not always.

Linux fstrim command was almost completely useless though because of the alignment issue and not much could be done about that–luckily though the discard option in Linux generally issued aligned UNMAPs, so it didn’t matter as much. Let’s take a look a Windows in this post. I will post soon about Linux.

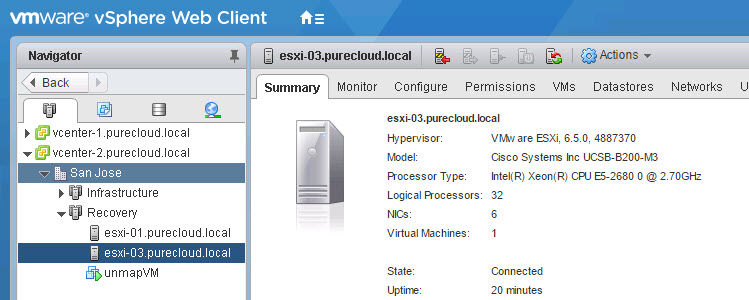

Testing with Windows 2012 R2

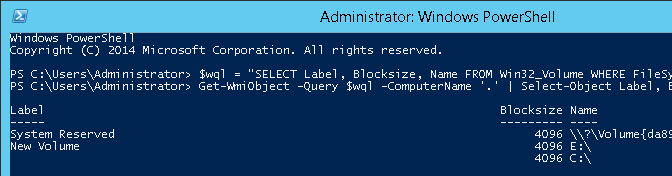

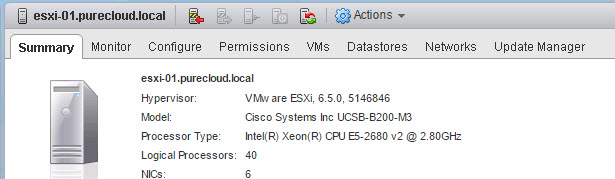

Any Windows release Windows 2012 R2 and later supports UNMAP, but they all suffer from this granularity issue. So let’s do a quick test. Using Windows 2012 R2 on the original release of ESXi 6.5 (without the patch).

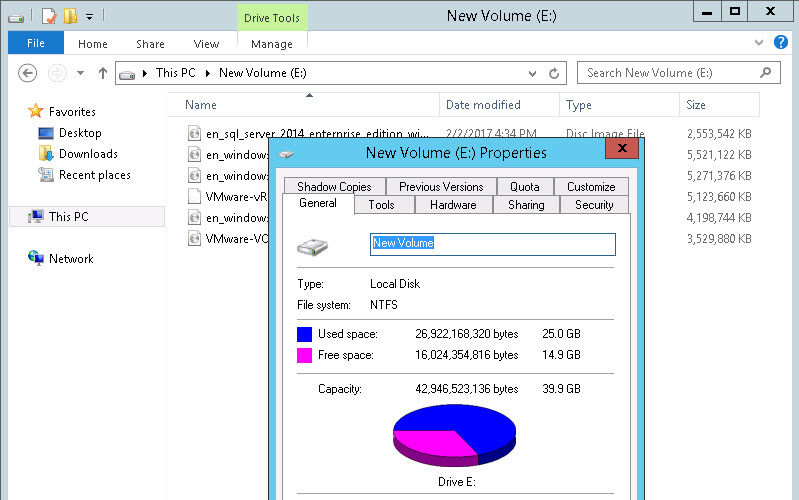

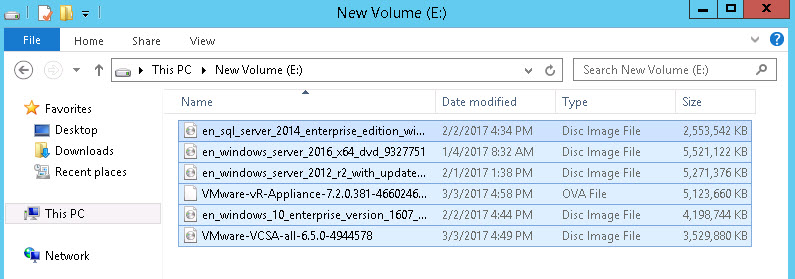

I have a VM with a thin virtual disk on a FlashArray VMFS datastore and I put a bunch of ISOs on it. About 25 GB worth.

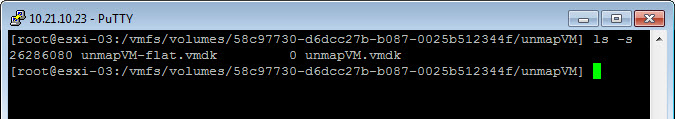

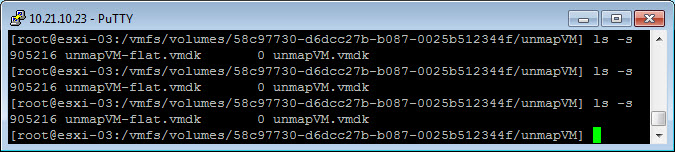

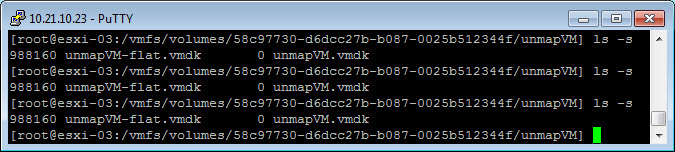

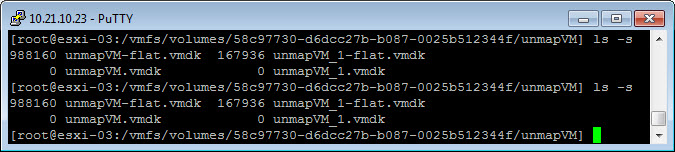

We can see my virtual disk is the same size (note the file unmapVM-flat.vmdk):

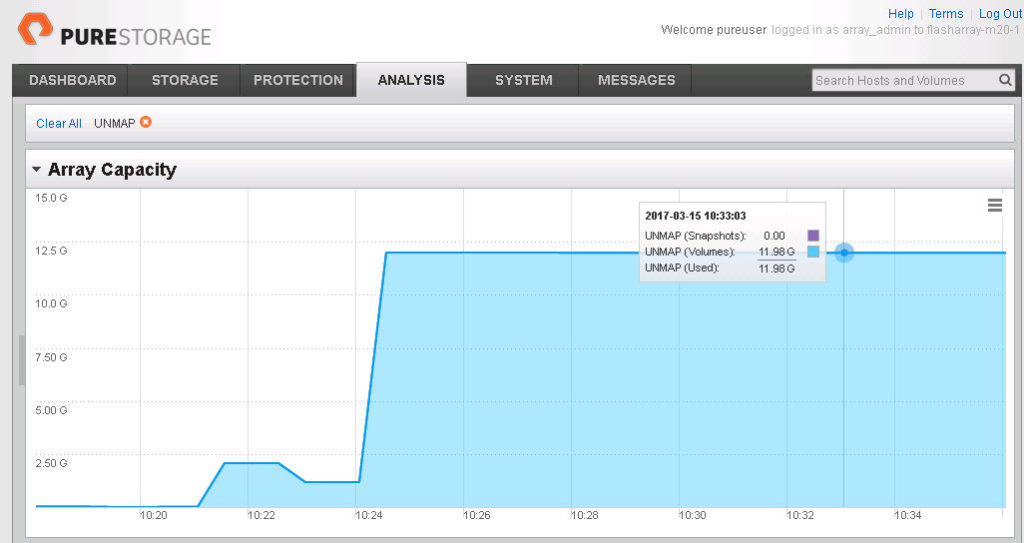

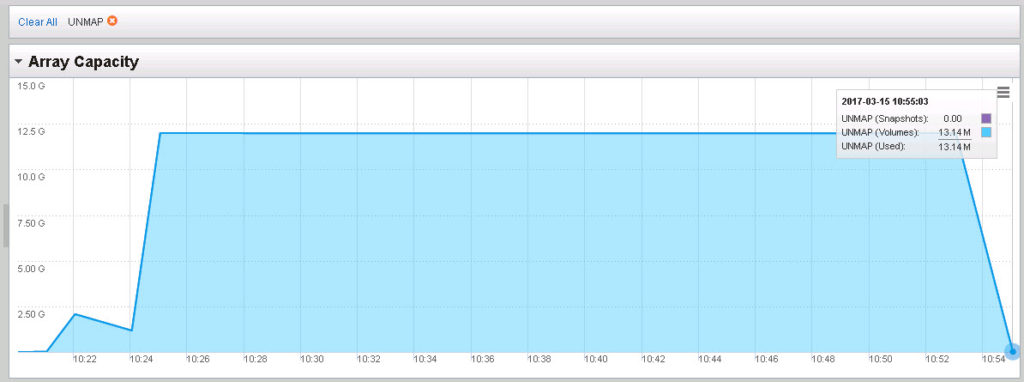

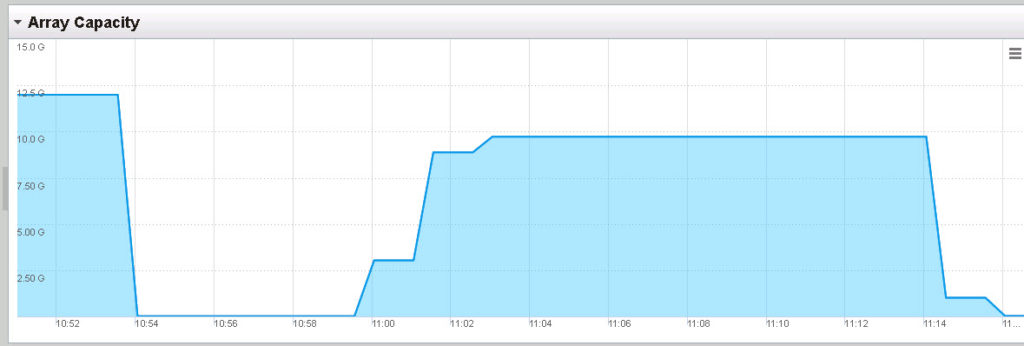

And the volume on the FlashArray reports about 12 GB (due to data reduction it is less than 25):

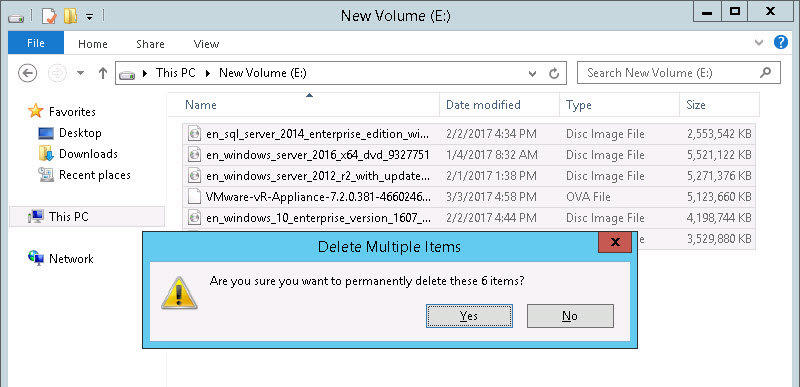

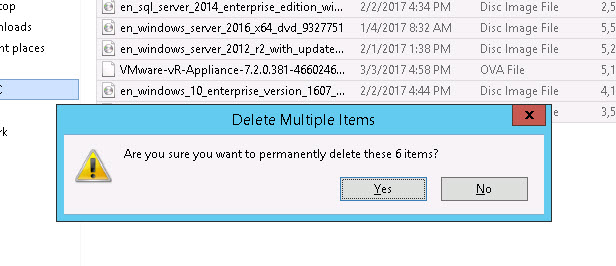

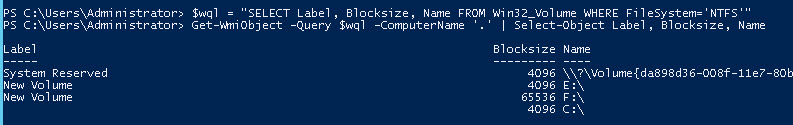

So now I will permanently delete the files. When a file is permanently deleted (or deleted from the recycling bin) NTFS is configured automatically to issue UNMAP. The problem here is that my allocation unit size is 4 K which means that most UNMAPs will be misaligned and nothing is going to get reclaimed.

So the delete:

After waiting a few minutes we can see nothing has happened. The virtual disk has not shrunk from UNMAPs and the FlashArray reflects no capacity returned.

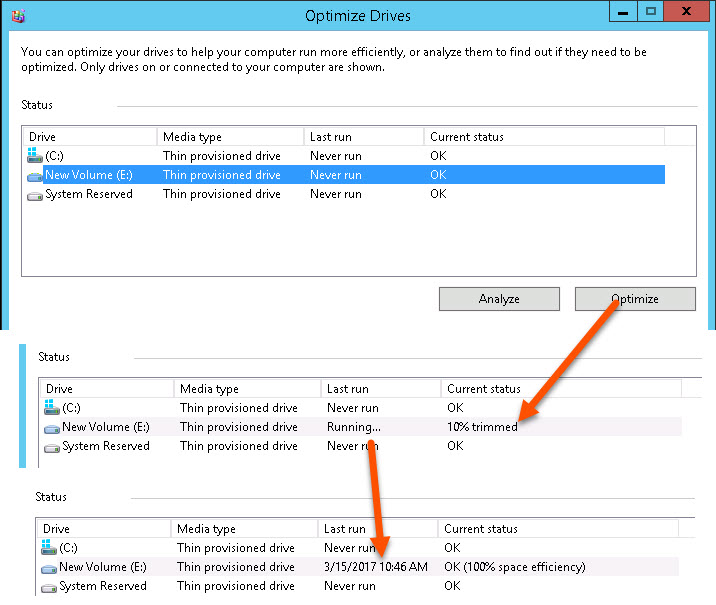

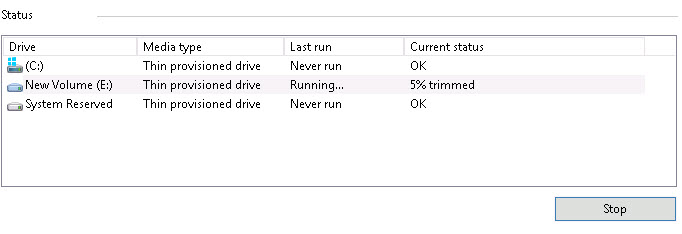

To be sure, let’s try the disk optimize operation. This allows you to manually execute UNMAP against a volume.

Still nothing happens.

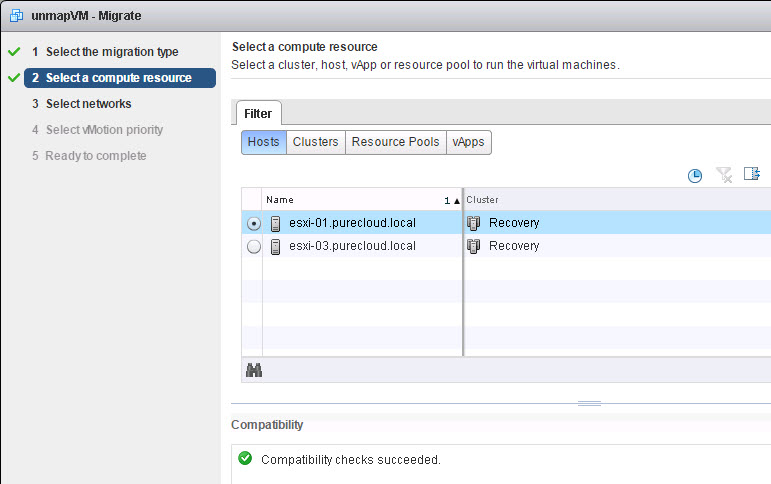

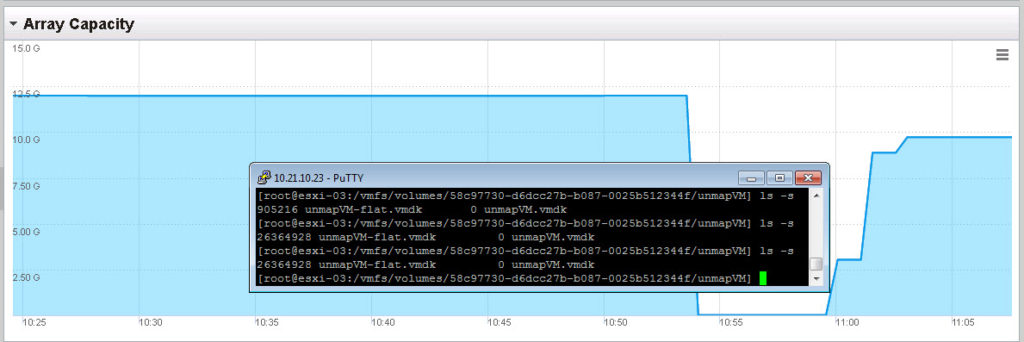

Okay, let’s vMotion this from my un-patched ESXi host to my patched ESXi host.

The vMotion, with no other reconfiguration other than moving the VM to this host:

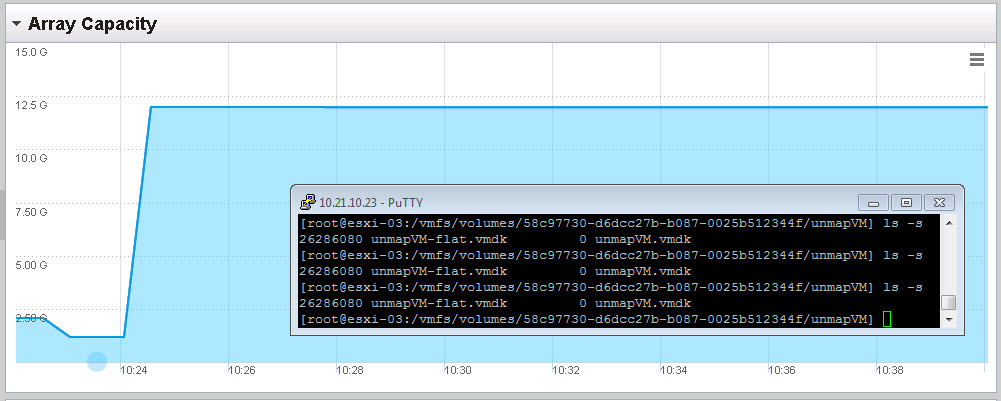

Now we can run the optimize again with it on the patched host:

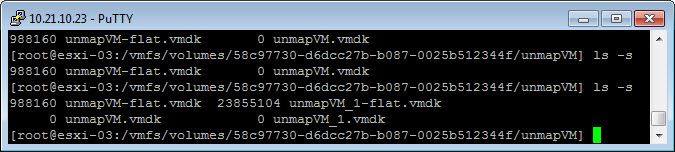

Wait a minute or two and we now see the virtual disk has shrunk:

Now it is not down to zero, it is about 900 MB, but certainly smaller than 25 GB. The reason for this is that certain amount of UNMAPs were misaligned, so ESXi did not reclaim them. Instead, ESXi just zeroes that part out. But if we look at the FlashArray, we see ALL of the space was reclaimed:

Sweet!! So why did the FlashArray reclaim it all and ESXi didn’t? Well because ESXi still wrote zeroes to the LBAs that were identified by the misaligned UNMAPs. The FlashArray discards contiguous zeros and removes any data that used to exist where the zeros were written. Which makes writing zeros to the FlashArray identical in result to running UNMAP. So this works quite nicely on the FlashArray or any array for that matter that removes zeroes on the fly.

So let’s test this now with automatic UNMAP in NTFS. I will keep my VM on the patched host and put some files back on the NTFS volume, so it is 25 GB again.

We see on our VMFS the space usage is back up and so is it on the FlashArray:

I will delete the files permanently again, but this time rely on automatic UNMAP in NTFS to reclaim the space, instead of using Disk Optimizer.

We can see the virtual disk is now shrunk (takes about 30 seconds or so)

And we can see the FlashArray is fully reclaimed:

Does Allocation Unit Size Matter Now?

So do I need to change my allocation unit size now? You certainly do not have to. But there is still some benefit from the VMware side from doing so. This fix allows you to be 100% efficient from the underlying storage side regardless, but if you want the VMDK to be shrunk as much as possible, 32 K or 64 K will get you as close as possible to fully shrunk as more UNMAPs will be aligned.

So if I add another thin VMDK and put 25 GB on it you see that reflected in the filesystem. Notice my original, reclaimed virtual disk is 900 MB after reclaim.

The original VMDK has a 4 K allocation unit size and my new one uses 64 K:

Now if I permanently delete the files, we see my virtual disk has shrunk a lot more than my 4 K NTFS formatted VMDK:

988 MB compared to 168 MB. A decent difference. So it might be still advisable to do this in general, to make VMFS usage as accurate as possible. But I will re-iterate, from a physical storage perspective, it does not matter what you use as an allocation format–all of it will be reclaimed on the FlashArray. There is just a logical/VMFS allocation benefit to 64 K.

Zeroing Behavior

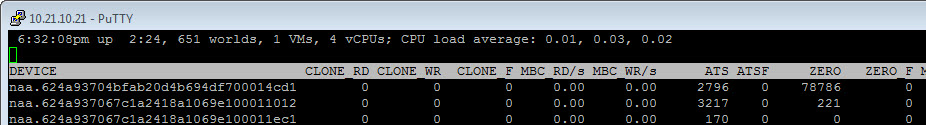

For those who are curious, what does ESXi do during this shrunk? As I can see, it does the following:

- Receives the UNMAPs

- Shrinks the virtual disk

- Issues WRITE SAME to zero out the misaligned segments

This can be proven out with esxtop. Looking at the VAAI counters, specifically, ATS and WRITESAME (ATS and ZERO). We can see for the specific device (in this case naa.624a93704bfab20d4b694df700014cd1) the ATS counters first go up, then the zero ones do. This to me means that the virtual disk was shrunk first, and a filesystem allocation change like that will invoke ATS operations. Then we see WRITE SAME commands come down, which is zeroing out the blocks referred to by the misaligned UNMAPs.

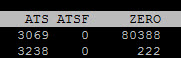

After:

You can see both counters for that device have gone up. If you watch it live, you can see the order.

Lastly, can I manually remove the zeros that have been WRITESAME’ed to the virtual disk. The answer is yes, but it is an offline procedure. So you either need to power-down the virtual machine, or remove the virtual disk temporarily from the VM.

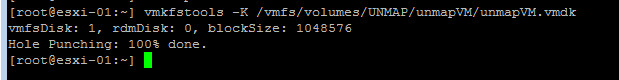

The procedure to do this is called “punching zeros” and can be done with the vmkfstools command:

vmkfstools -K /vmfs/volumes/UNMAP/unmapVM/unmapVM.vmdk

So if we take our virtual disks again and I remove the first one which is currently 988 MB:

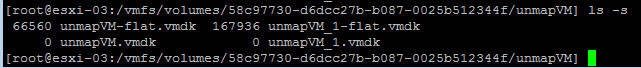

Then we run punch zero:

And now the virtual disk is down to 66 MB! It is offline, which isn’t great, but at least it is an option.

Final Notes

Now if you do not see anything on the FlashArray, this means you did not enabled the ESXi option “EnableBlockDelete” which is disabled by default. This is what allows ESXi to translate the UNMAPs down to the array level.

Because of everything above, I highly recommend applying this patch ASAP.

Hi Cody,

Does this now work with CBT enabled as well?

Thanks

Yep! See this post https://www.codyhosterman.com/2016/12/whats-new-in-esxi-6-5-storage-part-iv-in-guest-unmap-cbt-support/

I should elaborate in that I did test this patch with CBT on and off, so this fix does not break the CBT support they introduced in 6.5

Hi Cody. Just a short comment: with the 4K NTFS block size, you can go down to around 90MB just issuing an unmap command from the ESXi host, esxcli storage vmfs unmap, instead of offline punch operation.

Thank for the post!

You’re welcome! Can you clarify where the 90 MB is reported from? Are you talking about on the array/volume or the virtual disk size? esxcli storage vmfs unmap should only reclaim space on the array volume not shrink virtual disks, so if that is what you mean I would definitely be interested in hearing more! Thanks

Hi Cody. Well I’ve started from the scratch and here are the results:

– created a VMFS virtual disk, filled it up to 18GB ,

– permanently deleted the files, now the sise of the virtual disk is about 676MB,

– issued the optimize command from the guest Windows (not the unmap, sorry for misleading),

– ls -s shows 91MB on the virtual disk.

Cool!

–

Ah okay, that makes sense. Results might vary to how much gets reclaimed, depending on the initial file locations/size etc, which can affect alignment. So running optimize after the fact may or may not help as it does seem to be somewhat better at times at alignment. I will do some more testing to see if it should be a routine behavior in addition to automatic. Thank you!

I’m getting “Optimization not available” on all my Windows servers (2012/2016). Also, it lists them as “solid state disk” rather than “thin provisioned disk” like your screenshots…

Any ideas why it would show up that way?

This usually means that the virtual disk is not of type “thin”. Can you verify that they are not thick? Also if the virtual machine hardware is old it will not work. What version of ESXi are you running?

Yes, always use Thin. But I do also have CBT enabled (for Veeam Backup). I’m probably also not on the latest 6.5, but I figured it should still show up as “thin provisioned” in Windows 2016.

By the way, do you have an article about CBT? How is it related to UNMAP?

Ah that would be it then. CBT blocks this from working in vSphere 6.0. This only works in 6.5: https://www.codyhosterman.com/2016/12/whats-new-in-esxi-6-5-storage-part-iv-in-guest-unmap-cbt-support/

So after upgrading to 6.5, does it just automatically reclaim all the space? Or does it only start doing it from that point forward?

And wouldn’t a storage vmotion re-write the vmdk file without all the wasted space?

Not really. NTFS UNMAP is only invoked when the file is deleted, if it fails it is not retried (linux with the discard option behaves the same). Disk Optimizer can be set to run on a schedule and that will reclaim after the fact. But a vMotion to a new host won’t cause that to happen. Nor a storage vmotion. When an UNMAP is issued and accepted by VMware, the virtual disk will shrink, so a subsequent SvMotion will not copy dead data, but only if UNMAP has been successfully invoked.

Hi Cody, It was a great post.

I was wondering if there is a feature which we can use when we delete data in “thick provisioned” disks and same can be claimed at array level.

We use Pure storage and here is the way which we currently follow for thick provisioned disks: when we do delete some data in VMs, we use a third party tool “Perfect Storage” in order to zero out the disk space so that array can recognize the zero bit and space can be claimed.

Thank you! If you are using thick type virtual disks you need to run a zeroing tool inside of the guest. This will overwrite the data on the array with zeros and the FlashArray will then discard the old data and the zeroes. Using PerfectStorage is a good option for that. At this point VMware does not support UNMAP with thick disks, so zeroing is the only option

Do you know if ESXi 6.5, 6765664 includes this patch already?

Yes anything after 5224529 should

thanks