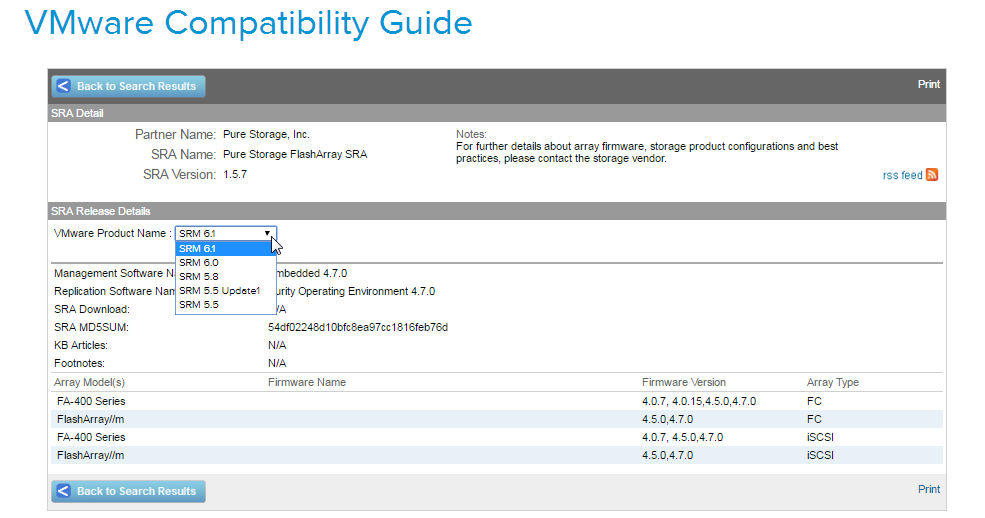

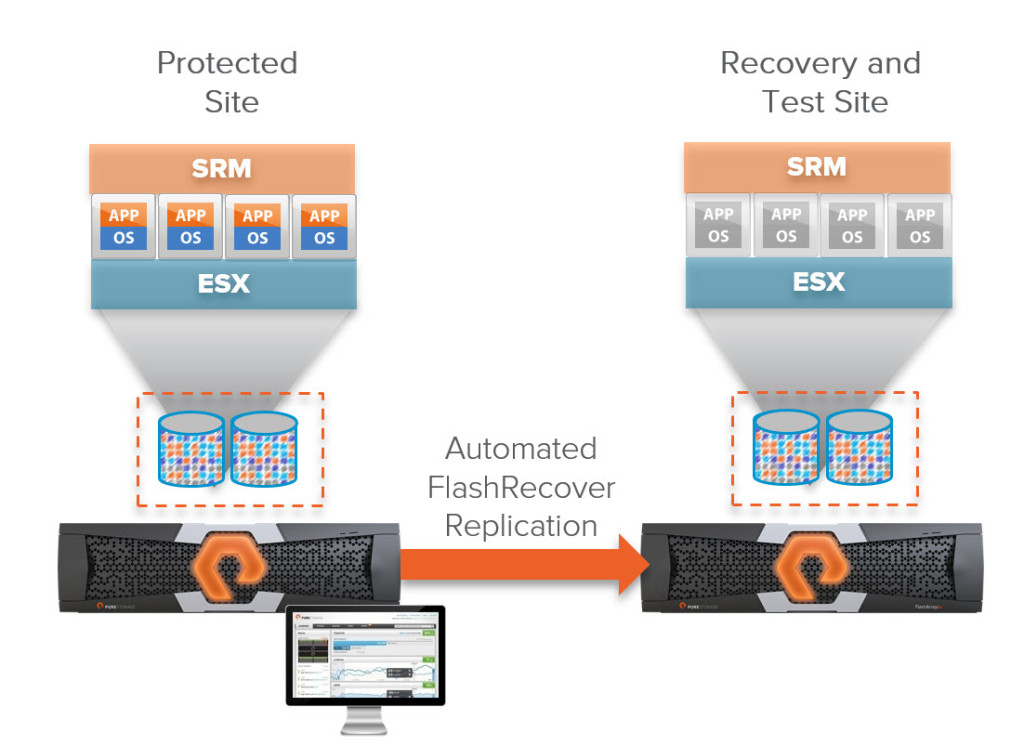

At the time of writing, we are at work on the next release of our Storage Replication Adapter (SRA) for the FlashArray. In a discussion with a customer who needs the feature we are adding (what a nice coincidence!), the question came up: “What is the best way to test?” They want to test the SRA without fouling up their production SRM environment.

The simple answer is, well, deploy two new vCenters and an SRM pair. But that requires hosts, a similar network configuration, authentication, and so on. So they wanted to use their existing vCenters but NOT their existing SRM servers.

SRM used to be a fairly rigid tool (for good reason: let’s not break your DR). But in the past few years VMware has really opened it up. It loosened the tight coupling between vCenter version and SRM version, and it added shared recovery sites and multiple SRM pairs per vCenter pair. This is where we come in.

Continue reading “Testing New SRA Release with a 2nd SRM Pair”