Note: This is another guest blog by Kyle Grossmiller. Kyle is a Sr. Solutions Architect at Pure and works with Cody on all things VMware.

VMware Tanzu is a great way for VMware users to manage their virtual machine environments while in parallel coming up to speed with containers all under the same familiar pane of glass. In fact, that’s possibly the biggest value proposition that Tanzu gives us today: extending vSphere and all of its enterprise features and goodness to the realm of K8s in a recognizable context.

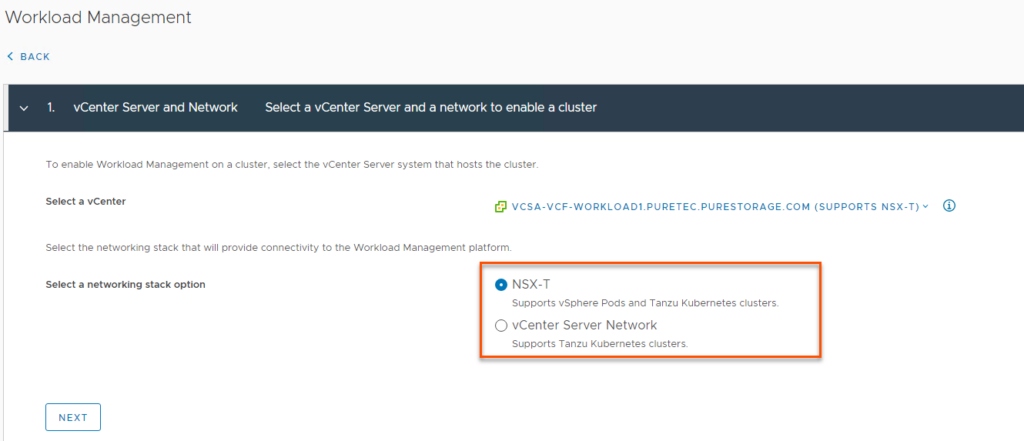

There’s a catch, though. Before one can start to use Tanzu one has to get Tanzu setup. While it’s reasonably straight forward to do so – it is also important to note that there are multiple ways to enable Tanzu and to distinguish the pros/cons of each of them. It’s also key to understand what these underlying components are and how they interact to help troubleshoot any potential problems. This post will focus on two of these methods: vCenter Network Option (HA-Proxy) and NSX-T (VMware Cloud Foundation). Cody covered the 3rd option of directly deploying Tanzu Kubernetes Grid to vSphere in an earlier post that can be found here.

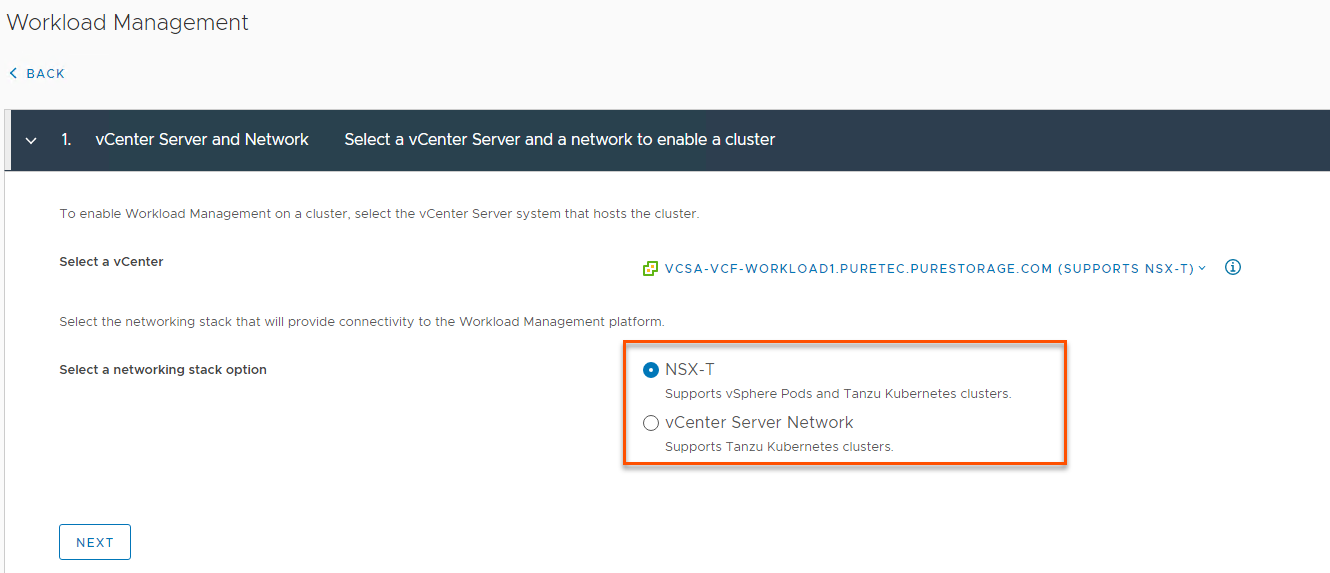

It’s critical to note that you must be at a minimum of vSphere 7 before you can use either of these below methods we will cover. ESXi 6.7U3 and up is supported via the method shown in Cody’s post I linked above. The two Workload Management enablement networking options are built right into the vSphere 7 UI under Workload Management when you add a cluster:

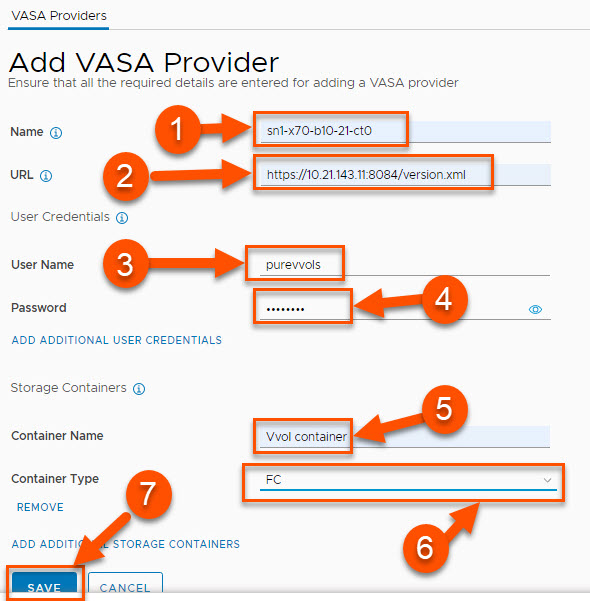

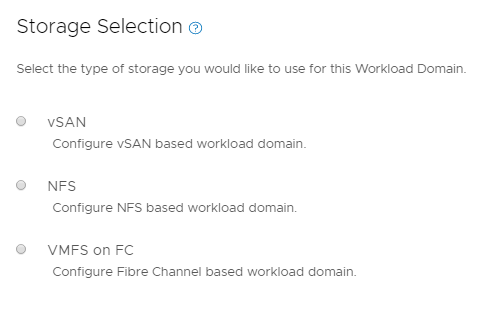

The other important prerequisite is that you will need one or more SPBM (Storage Policy Based Management) policies defined in order to get the Supervisor Cluster up and running. There are a couple of KB articles on our Platform Guide which shows how to do this on the FlashArray and the differences between VMFS and vVols based policies.

So let’s lay out the key components and differences between the vCenter Server Network and NSX-T backed Workload Management options and provide some more information on how to enable each of them…

The vCenter Server Network option allows customers to get Workload Management working in their vSphere environment by deploying a single OVA (known as HA-Proxy) and does not require any other external products like NSX-T or SDDC Manager. This OVA and associated information can be found at this link on GitHub. The main benefit of this option is the relative simplicity of getting Tanzu running since the only items you need to setup are a distributed switch portgroup to handle your Kubernetes ingress/egress ranges and the HA-Proxy OVA itself. The HA-Proxy will act as the load balancer for kubernetes traffic and provide the supervisor cluster API endpoint. The downside is that the HA-Proxy VM represents a single point of failure and larger kubernetes deployments at some point will more than likely overwhelm the CPU/memory resources available to it. This option is best for those who want to look at Tanzu in a POC/exploratory type of setup.

Here’s a quick narrated technical video I created showing how to setup Workload Management with the HA-Proxy OVA:

After Workload Management was enabled, I created another quick demo video showing how to create Namespaces and deploy a Tanzu Kubernetes Guest Cluster:

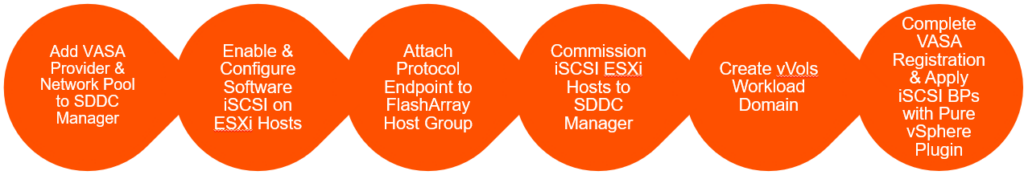

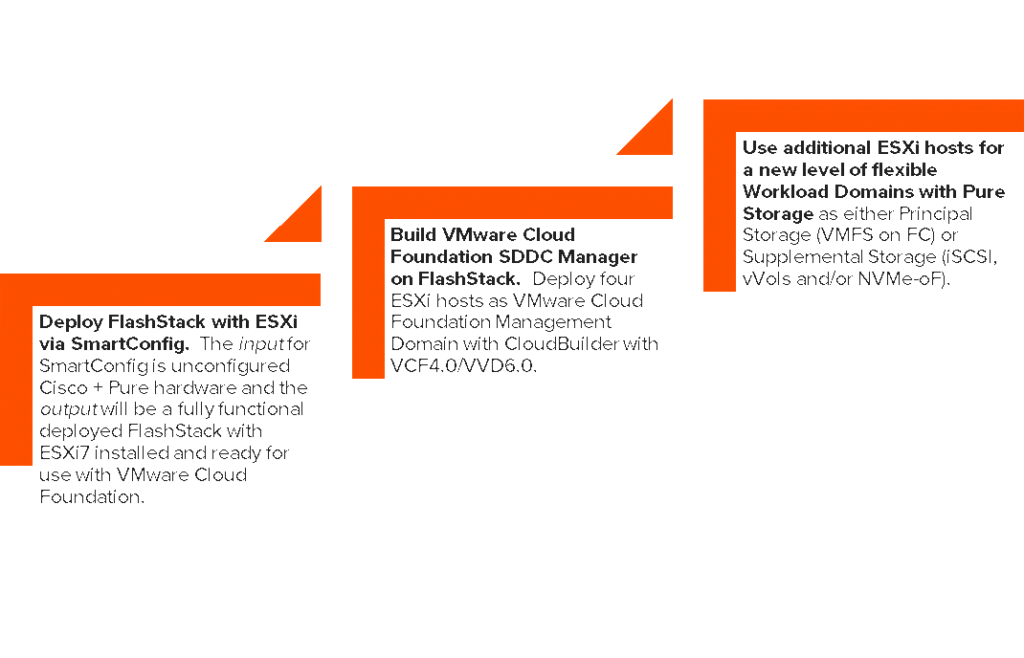

NSX-T based Workload Management is recommended to be backed/managed by a VMware Cloud Foundation deployment. This option gives you all of the enterprise grade features, resilience and lifecycle management that comes embedded with SDDC Manager. The con of this option is there is more setup work and moving pieces involved than the other Tanzu deployment choices and it requires additional licensing once the trial period expires. The key component that needs to be setup for Tanzu in particular is called an NSX-T Edge Cluster. An Edge Cluster is comprised of at least one (but really it should be two for resiliency) Edge VMs which help route and load balance network traffic from your top of rack switch to the underlying kubernetes deployments. The Edge Cluster deployment can be automated to a large extent from within SDDC Manager via a wizard.

In our lab, I went the route of manually deploying a 2 node Edge Cluster within NSX-T as this gives a better ‘under the hood’ view of how everything works together. As most customers likely do not have top of rack switch access to setup BGP, I also decided to use the static routing option within NSX-T. Here’s a video showing how to set this up end-to-end:

With the EdgeCluster built, the next step is to enable Workload Management which is shown in this video:

Hopefully this post has provided a bit of guidance and insight in terms of what Tanzu solution might be right for your environment. We are continuing to investigate and document how to best leverage the FlashArray with kubernetes so please check back here and our platform guide often for updates. Thanks for reading.