At long last! vSphere 7 is available for download.

An overview podcast on what's up:

As time progresses we will have a lot more content out, especially new integrations around the VMware ecosystem. So certainly stay tuned; this is just the start!

NVMe-oF

Ah yes, NVMe. This has been a journey! A good one, of course. Let’s follow it:

- June 2015. We made our NVRAM NVMe-based. This was step 1.

- November 2016. VMware added a virtual NVMe adapter for virtual machines. Thus began VMware’s journey!

- April 2017. We transitioned from SSD-based flash to NVMe-based flash. We were starting to see the looming potential of running into the same bottleneck as spinning disk. The drives were getting bigger, but not faster. So effectively slower (fewer IOPS per GB). Removing the SAS layer opened us up, especially for performance density. Open up that NAND!

- September 2017. Direct-connected shelves via NVMe-oF! Now expanding our NVMe support outside of our main chassis.

- February 2019. Front-end NVMe-oF with RoCEv2. Extending NVMe to the host!

So all of this raises the question: VMware was working from the VM side down and we were working from the flash medium layer up, so when would these two journeys meet in the middle?! vSphere 7.0, of course! And it is now here.

See a configuration demo below:

See our documentation here:

We are day 0 certified:

vSphere Client Plugin

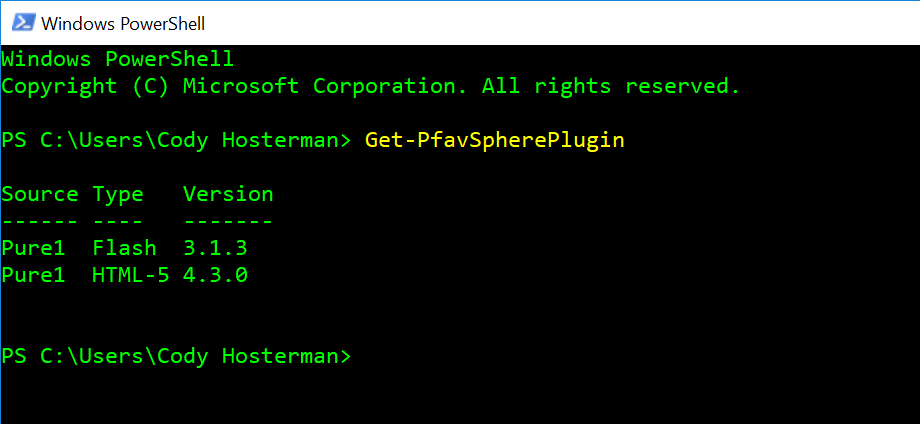

We have also released a new vSphere Client Plugin! Version 4.3.0.

New features include:

- Support of vSphere 7.0

- Support for NVMe-oF datastore identification

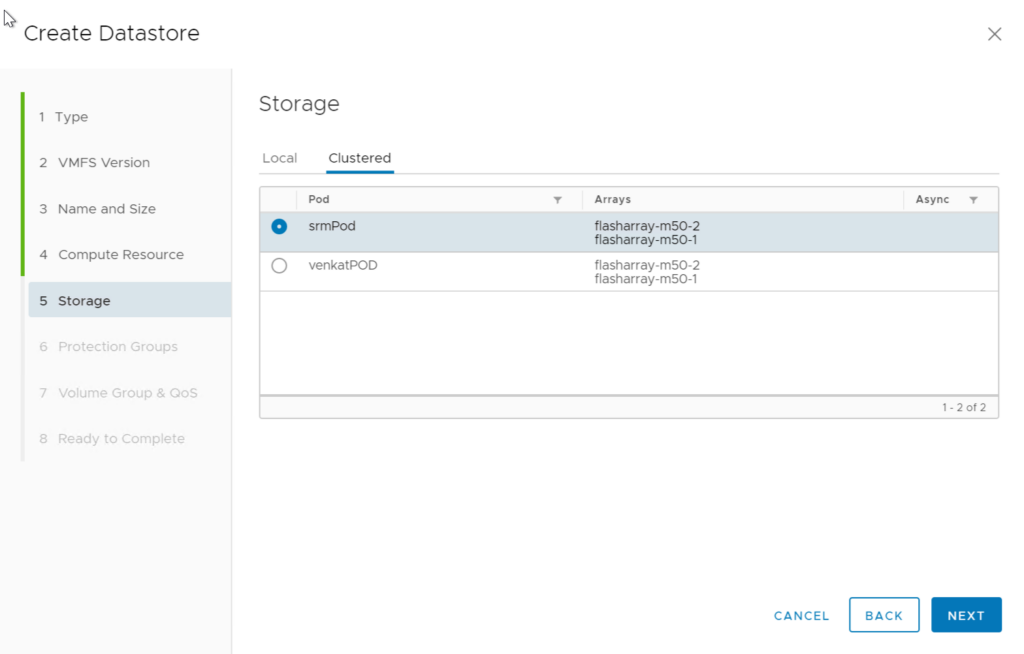

- Full ActiveCluster/Pod Datastore Provisioning

- Support for non-uniform ActiveCluster host clusters

- Volume Group management

- IOPS/Throughput limit assignment on datastores and/or volume groups

Look for a more specific post soon on this.

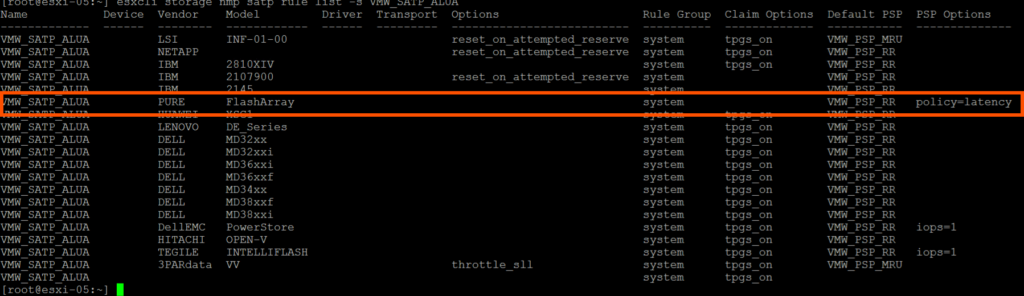

Latency Based Path Selection Policy is now Default!

I have blogged about it in the past:

And after a lot of testing and soaking we have decided to make it our best practice in vSphere 7.0. But like all things, I don’t want to make that your problem. So we worked with VMware to make sure it is the default configuration in vSphere 7.0. All Pure Storage FlashArray devices will automatically be claimed by the enhanced PSP.
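To give a feel for why a latency-aware policy can help, here is a minimal toy sketch in Python. It is not VMware's actual algorithm: the path names, the latency numbers, and the `tolerance` threshold are all hypothetical, chosen only to contrast "switch paths after every I/O" with "only use paths whose observed latency looks healthy".

```python
from itertools import cycle
from statistics import mean


def round_robin_iops1(paths, n_ios):
    """Toy model of IOPS=1 round robin: move to the next path after every I/O."""
    order = cycle(sorted(paths))
    return [next(order) for _ in range(n_ios)]


def latency_based(paths, n_ios, tolerance=2.0):
    """Toy model of a latency-aware policy.

    Only paths whose average sampled latency is within `tolerance` times the
    best path's latency are used. `tolerance` is a hypothetical knob for this
    sketch, not a real ESXi setting.
    """
    avg = {name: mean(samples) for name, samples in paths.items()}
    best = min(avg.values())
    healthy = sorted(name for name, lat in avg.items() if lat <= best * tolerance)
    order = cycle(healthy)
    return [next(order) for _ in range(n_ios)]


def mean_latency(paths, schedule):
    """Average latency an I/O stream would see if it followed `schedule`."""
    avg = {name: mean(samples) for name, samples in paths.items()}
    return mean(avg[name] for name in schedule)


# Hypothetical sampled latencies in milliseconds; vmhba3 is a degraded path.
paths = {
    "vmhba1": [0.5, 0.6, 0.5],
    "vmhba2": [0.5, 0.5, 0.6],
    "vmhba3": [8.0, 9.0, 7.5],
}

rr = round_robin_iops1(paths, 300)
lb = latency_based(paths, 300)
print("IOPS=1 mean latency (ms): ", mean_latency(paths, rr))
print("latency mean latency (ms):", mean_latency(paths, lb))
```

In this sketch, when every path has similar latency the two functions produce the same schedule. They only diverge when one path degrades, which is the case where the round-robin schedule keeps sending a share of the I/O down the slow path.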

More on that coming too!

Site Recovery Manager

A ton coming here. VMware officially released SRM support of vVols!

https://blogs.vmware.com/virtualblocks/2020/04/02/vmware-site-recovery-manager-83-now-available/

We are not quite listed on the SRM vVol cert page yet but we will be very soon. Our code release of Purity that we have been working on did not quite time its release with vSphere 7, so once that is done we will post certification (this will be measured in days, not weeks). We have spent A LOT of time enhancing and improving our VASA provider and Purity, and working with VMware to build and support SRM and vVol replication. And quite a few improvements in ESXi/vCenter have come out of the work too. vVols are just getting better and better. We left no stone unturned on this.

We also have a new SRA coming that will land around the same time. So stay tuned!

And More!

We are updating our vRealize integrations, which will align with the upcoming release that VMware announced (8.1), and there is more coming around VMware Cloud Foundation, Cloud Native Storage, and Tanzu! Very exciting times.

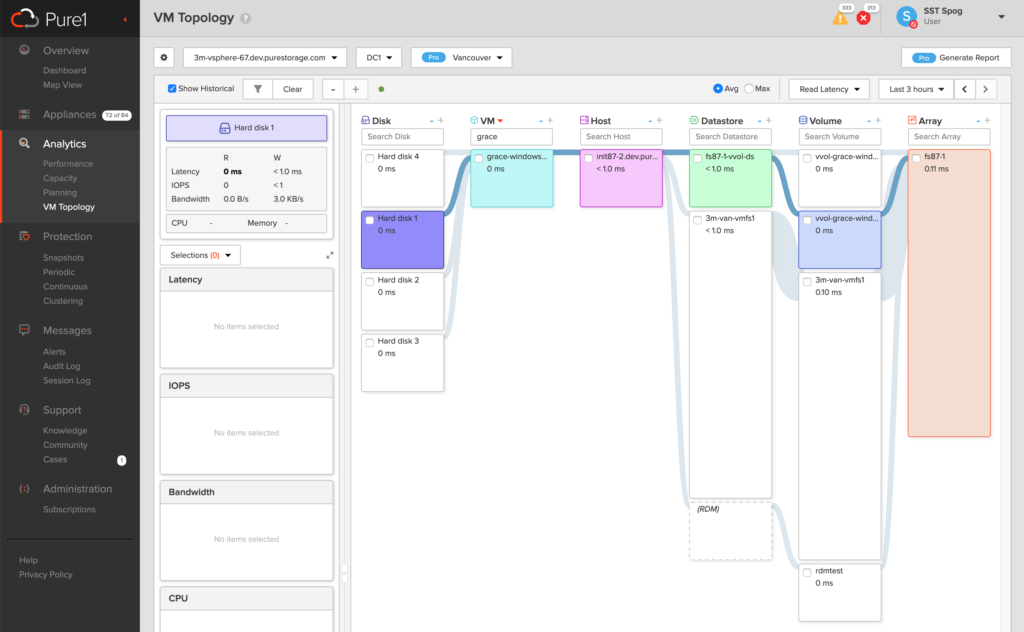

Oh and vVol support in VM Analytics will appear very soon!

What are the requirements for connecting the vSphere plugin to a FlashArray via the “Connect a single array” tab? We have a stubborn array that will not connect; it simply errors out with the message “Error connecting to array”. We have other arrays that connect just fine.

Is there a more verbose log file somewhere that we can troubleshoot this?

Just TCP port 443. The vSphere-UI virgo log can help, but it isn’t super verbose. This is likely something else; it could have to do with a bug we just found and fixed in the upcoming 4.3.1 release. I’d recommend opening a support ticket.
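If you want to rule out basic reachability first, a quick TCP check on port 443 is easy to script. This is just a minimal sketch: `can_reach` is a helper name made up for this example, and it only confirms that the port accepts a connection from wherever you run it (ideally the vCenter side of the network). It says nothing about certificates or the plugin itself.

```python
import socket


def can_reach(host, port=443, timeout=5.0):
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection resolves the name and completes the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False
```

For example, `can_reach("flasharray.example.com")` (a placeholder hostname) should return `True` for a working array and `False` if something between the two endpoints is blocking port 443.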

Hi, do we need to create the Pure FlashArray SATP rule with iops=1 in ESXi 7.0? Or do we get good performance with just the default policy=latency?

We worked with VMware to change the default to the latency policy because it is superior to IOPS=1. In normal circumstances they will perform the same; in abnormal situations the latency policy has proven to be better. So there is no need to make any changes.