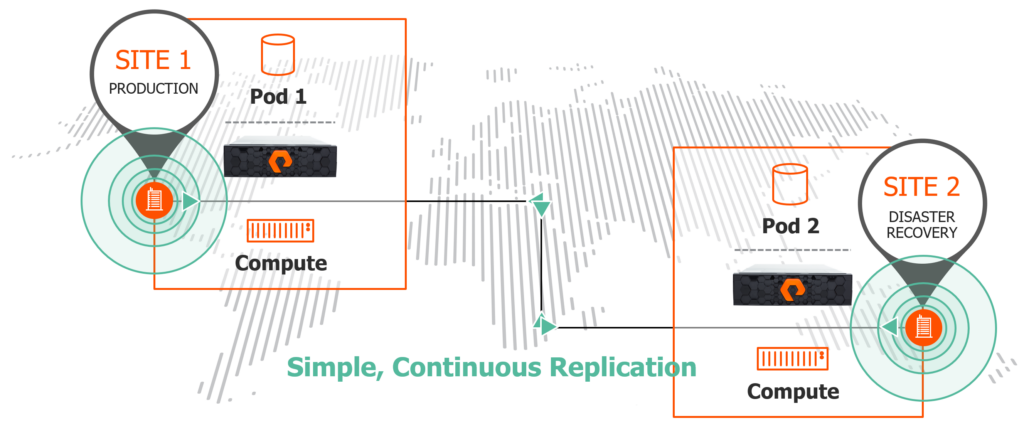

Hey there! This week we announced the upcoming release of our latest operating environment for the FlashArray: Purity 6.0. There are quite a few new features, details of which I will get into in subsequent posts, but I wanted to focus on one related topic for now. Replication. We have had array based replication (in many forms) for years now, in Purity 6 we introduced a new offering called ActiveDR.

ActiveDR at a high level is a near-zero RPO replication solution. When the data gets written, we send it to the second array as fast as we can–there is no waiting for some set interval. This is not a fundamentally new concept. Asynchronous replication has been around for a long time and in fact we already support a version of asynchronous. What is DIFFERENT about ActiveDR, is how much thought has gone into the design to ensure simplicity while taking advantage of how the FlashArray is built. A LOT of thought went into the design–lessons learned from our own replication solutions and features and of course lessons from history around what people have found traditionally painful with asynchronous replication. But importantly–ActiveDR isn’t just about replicating your new writes–but also snapshots, protection schedules, volume configurations, and more. It protects your protection! More on that in the 2nd part.

This will be a three-part series:

- Part I: Replication Fundamentals

- Part II: FlashArray Replication Options

- Part III: VMware Replication Integration

What I want to focus on first is really understanding the basics around replication terminology and methods. What all of this means, how they are different, what are my options?

Let’s define two concepts first:

- RPO. Recovery Point Objective. This defines how much data–at a maximum–you might lose if your source site suddenly vanished and all you had left was the replicated copy. How old is that data? If your last replication synchronization was 5 minutes ago, any data you wrote in the past 5 minutes is, well, lost. This 5 minutes could be because your replication solution only replicates every 5 minutes, or because it simply failed 5 minutes ago. If your replication interval is 5 minutes, your RPO is 10 minutes (wait, what? Read in the section on periodic later). Though if your replication last finished 2 minutes ago and then it fails, your actually Recovery Point is 2 minutes. You surpassed your objective!

- RTO. Recovery Time Objective. This is a bit more complicated. RTO says how long it takes for your workload to be restarted after a failure of the original site. This has less to do with the replication itself, but more about the simplicity of storage failover and provisioning (how long does it take the storage to be ready to be used on the 2nd site). I will call this the data RTO. Then there is the application layer (how long does it take for the service/application to start up that uses that data and start resuming its function). I call this the application RTO.

There are a few more distinctions to make:

- Asynchronous vs Synchronous

- Active/Passive vs Active/Active

- Continuous vs Periodic

Synchronous Replication

Synchronous is most simply defined as replication that offers an RPO of zero. This is your best option to provide true business continuity, i.e. no interruption in service.

To understand that, let’s look at the process for a non-replicated write:

- Latency timer starts.

- A host sends a write I/O to volume on an array.

- The array then stores it permanently.

- The array then responds to the host saying it is stored.

- Latency timer ends.

Synchronous replication has an RPO of zero because the data on the second site is always exactly the same as on the first site. If the I/O never makes it to the 2nd array the I/O is considered to have not been finished and the host will retry (though if the 2nd site is fully lost the source side might continue serving I/O but stop trying to replicate–this is a design decision of the replication). If you lose the primary array, the second array can present the volume with the data exactly where there first one left off.

When synchronous replication is enabled on that volume, the array extends what “stores it permanently” means. In this case:

- Latency timer starts.

- A host sends a write I/O to a volume on the array.

- The array then stores it.

- The array then forwards the write to the second array.

- The second array stores it.

- The second array responds back to the first array confirming the preservation of the data.

- The first array then tells the host that the write is completed.

- Latency timer ends.

Notably, the downside to this is that the host have to wait for the first array to store it, but also the data to go to the second array, be stored, and be acknowledged (this is a feature, not a bug). This manifests as higher latency. Therefore the usefulness of synchronous replication is constrained by the distance between the two arrays–the further the second array is, the higher the introduced latency.

Generally 200 miles or so is the preferred limit for synchronous replication–which amounts to ~4 ms round trip. Anything further starts to negatively affect application/end user experience. Though it is certainly possible. Pure supports up to 11 ms RTT for our synchronous replication for example.

Solving for this is where asynchronous comes in.

Asynchronous Replication

Asynchronous replication is the logical next step to synchronous. 200 miles may not be enough to protect you from a larger outage (power grid failure, hurricane/typhoon, etc). Maybe there is nothing 200 miles away or even 500. Maybe your datacenter is in a place that is so remote that the next closest one is 1,000s of miles away.

The more likely issue is simply that the latency introduced by the extra distance (whatever that might be) for writes is just not acceptable. Often the perfection that is zero RPO is not worth the trade for the performance hit.

Hence asynchronous. Asynchronous replication provides disaster recovery protection as follows:

- Latency timer starts.

- A host sends a write I/O to a volume on an array.

- The array stores it.

- The array responds to the host that the I/O is safe.

- Latency timer ends.

- Then the array at some point sends the write I/O (and any others) to the second array.

Let’s use this scenario:

Two arrays are synchronized at 10 AM. Then an application writes 1 GB of new data in a minute. The array replicates every 5 minutes. So the RPO (the objective) is 5 minutes. Suddenly at 10:01 the first array is destroyed by a rodent of unusual size–the array never got to send the 1 GB data to the 2nd site. That data is lost. The recovered data on the second array is 1 minute old. That 1 GB of changes is gone because it happened in that 5 minute interval.

So like all things in life there are tradeoffs.

Active/Passive

So that’s async vs sync. As with all things in tech, there are shades of grey and many levels of it. We do not speak in absolutes.

There are different methods to present replicated storage. Since the storage is on two arrays, can it not be used on both arrays at the same time? Usually not. The most commonly deployed method for replication is called active/passive. Let’s define “active” as the volume can be written to and read from. Let’s define “passive” as the data is there, but it cannot be written to–aka “dormant” (sometimes though it can be read from–this is a common backup use case).

So active/passive means that a given data volume is actively available on one array and protected to, but dormant (passive), on the second array.

If the active side goes down (or is made passive administratively) then the passive site volume can be used, a.k.a. made active.

Active/passive can be synchronous replication or asynchronous replication.

In a replication environment, two arrays are spread across two failure domains. One in each domain. A failure domain can be a datacenter, a city, or a region of some sort. Basically a place that could be entirely taken down by some small or even catastrophic failure. Additionally, there are usually hosts in both failure domains. Hosts in one site using the data that is active on the array local to their failure domain. And accordingly hosts in the other failure domain waiting to use the data that is passively stored on the array in that same failure domain.

For the “recovery” hosts to start using the data in the second site, the passive storage must be made active and presented to those hosts. This is called a failover. A failover takes time which means an increased RTO.

Automation of this failover process can certainly help reduce the RTO.

Active/Active

Active/active replication means that the same volume can be used on both arrays at the same time. Hosts in either datacenter can access the volume through the array that is local to them.

This volume is generally either hosting:

- A simultaneous access shared file system (like VMFS) where multiple hosts can use the same file system at once, but just use different parts of it.

- A single-host access non-shared file system but with a clustered application (like Microsoft Failover Clustering) that can coordinate control back and forth between multiple hosts.

Any write sent to a volume on one of the two arrays is also then sent to the other array. The difference here is that either array can accept writes at the same time. Any read just goes to the local array (no need to read from both arrays–it is the same data). Therefore Active/Active replication can also be referred to as bi-directional replication.

A common question here that comes up is what happens if two hosts write to the same logical block address through different arrays at the same time? Well the same thing if two hosts write to the same block on a volume that is on only one array. Corruption. This access control is up to the file system to deal with. If your file system allows that–it is a poorly written file system and you should demand a refund.

The key here is that Active/Active is ONLY for synchronous replication. Asynchronous replication, by definition, means that the data is not guaranteed to be the same on both sites at a given point in time–so allowing simultaneous access is…well… not a good idea. It would be like playing chess but not being able to see the other players’ last few moves.

Like I mentioned before, the two arrays are spread across two failure domains. One in each domain. Same with the hosts–there is compute in both places. But this time the hosts in each side can be actively using the same volume simultaneously.

In what is referred to as a non-uniform configuration, the hosts only have storage fabric access to the array that is local to them.

Since this is synchronous replication, the RPO is zero. The storage can be always in use and ready in both sites at the same time. There is no need to do anything in the event of failure–the storage is already ready on the other side. The data RTO (the time to prepare/present the storage) is also zero. But it is important to note that the application RTO is likely not zero. If an application is running on one side and the site goes away, the application needs to be restarted on the other site. Whether that be a VM restarting on another host, or a clustering software failing the application and volume control over to the remote node.

In what is called a uniform configuration the hosts in both sites have storage fabric access to both arrays. This allows an array to entirely fail but the hosts don’t have any loss of access.

The RPO here is also zero.

The data RTO is also zero. The application RTO might be zero as well. If just the array fails but the hosts keep running, those hosts will just use the surviving paths to the remote array. Active/Active storage in a uniform configuration gives you a fighting change at zero RPO and true end-to-end zero RTO.

Since the same volume is available in two places at once, coordination is required to make sure the data is the same. Since they are physically separated there is a chance for split brain (both arrays are available, but to hosts but they cannot talk to one another and replicate changes to each other). So if communication stops between the arrays, one array needs to stop serving data. To assist with this mediators (sometimes called witnesses) are introduced to help break ties in decisions on what array should continue to host that volume.

Continuous Replication

So the last distinction is continuous vs periodic.

Continuous replication means that the data is replicated over to the second array as quickly as possible. Might happen immediately, might have to wait. Depends on how many resources are available to send the data changes, how busy the link is, and/or how busy the target is. The main point is that the array is not waiting for some specific amount of time or data before it replicates. It does what it can, when it can, as quickly as it can.

Continuous replication has a non-zero RPO. Though since the array is using whatever resources it can to send the data over, the RPO is usually near zero. Which is often measured in seconds. Though because there is no real specific interval on this, the RPO can be unpredictable. But with good sizing and adequately available WAN bandwidth you can be fairly accurate in what the expected RPO will be.

There is also a non-zero RTO. The data might be behind, and it needs to be failed over and presented to recover the applications. So simplicity and/or orchestration are your friends to reduce the RTO here too.

Continuous replication can arguably be synchronous or asynchronous replication. Though synchronous replication is by definition always continuous. So a more important distinction with synchronous replication is whether it is active/active vs active/passive. Asynchronous replication is not always continuous–it can also be what is called periodic.

Periodic Replication

Asynchronous replication can also be periodic. This means that some kind of event must occur for data to be sent over to the second site. This event might be a certain amount of time has passed, or a certain amount of changed data has built up, or the target decides it is ready to receive. Periodic replication on arrays can also look fundamentally different than continuous.

One option for periodic replication is for an array to create a volume snapshot and send that snapshot over according to the schedule. This means this is not volume to volume replication (volume A on array A replicating its data to volume B on array B). Instead, a volume exists on the source side and one or more replicated snapshots of that volume exist on the target array–each representing some point-in-time of that volume from the past.

Another option is just wait a certain amount of time and then send the differences over in a chunk. This would be volume A to volume B. The data on volume B keeps getting overwritten by the changes from volume A, on some specified schedule.

So what is the RPO? Well most commonly the replication interval is a time like every 5 minutes for example. Is 5 minutes the RPO? No. It is actually just under 10 minutes.

Let’s use this example:

- 10:00 a snapshot is created.

- That snapshot is replicated to the target array.

- So the target array now has a copy from 10:00.

- 10:05 comes around. Another snapshot is created.

- That snapshot starts being sent to the target array.

- The replication link fails just before it is complete at like 10:09:55.

What is the last good point-in-time on the target array? 10:00. The snapshot was incomplete so the point-in-time is not valid. So in this case the RPO is basically double the replication interval.

The RTO is exactly the same as with continuous. How long to failover and present the target volume and how long to restart the application?

Async or Sync

So should I choose async or sync? Well it depends on your business requirements mostly. Do you need zero RPO? If you do. Synchronous is your answer. Do you not need zero RPO? Do you not have the bandwidth to support it? Do you have an unstable WAN connection? Can you not take the performance hit from a long synchronous distance? Maybe go for async.

Yes, why not both? Well this is a common choice too! Synchronous to a close site, out of the range of a danger you feel you might most likely hit, like a datacenter power loss, to another datacenter a few miles away. The latency hit would be minimal. Then from that site asynchronously replicate to a much more distant third site. So if you do lose your source array, you still have a zero RPO copy which can then be synchronized to the third site. The downsides to this approach are:

- Cost. You need three arrays, three sites potentially. Though is the threat of data loss more costly? Maybe. Maybe not.

- Higher RTO–if you need to manage a sync from site B to site C you might have to do some more work–though a lot of that is automated these days.

- Complexity–more moving parts potentially. But the severity of this problem is somewhat implementation dependent.

But a combo gives a solid balance of low RPO while maintaining performance. This is really about what your business demands.

Active/Active or Active Passive

So A/A or A/P? Well this is mostly a RTO question. Do you want the lowest possible RTO? This is the one solution that can provide you zero RTO in some failure scenarios. Also since the storage is the same in both places, it often needs the least amount of custom automation to fail over applications. A/P is replicating data from one volume to a distinct remote volume–a volume with a different serial/UUID. Therefore a host will see it differently once failed over, which means resignaturing, or some type of scripting to make sure the application or OS knows this is the same data. A/A presents the same volume, so the app and/or OS doesn’t need any changes, because as far as it knows nothing has changed. So there is a simplicity argument for A/A as well. A/A has a much stronger ability to provider Automatic protection, vs Automated with A/P.

Continuous or Periodic

This is often an RPO question. Continuous usually offers the best RPO as it is not restricted by some lower limit set by a user. If the replication can keep up with the write rate you can have near zero RPO, for periodic even if it can go faster, it probably won’t.

There can be some potential RTO benefits too. If there is a high change rate and you need to do some kind of planned failover, periodic might take longer to synchronize remaining changes before being ready–increasing the data RTO.

Lastly, continuous not only replicates your data, but problems with your data. If something was accidentally deleted on the volume, that deletion gets replicated. If something gets corrupted, that corruption gets replicated. If something gets ransomwared (encrypted), that target volume is encrypted too. This can be resolved with periodic snapshots or replication journaling, but it is an extra consideration regardless. Periodic replication is less susceptible to this because usually it keeps more than one snapshot on the target side. Usually.

Okay phew. The next part of this series is to map all of this back to what the replication offerings are on the FlashArray.

Good Stuff Cody!

Look forward to the next article, especially ActiveDR. What’s the latency/bandwidths/WAN requirement? Does it support SRM? Can I do my DR test at DR site while production site is still running? What’s the typical RPO for ActiveDR?

The SRA that supports ActiveDR is in RC status right now. Which means we need to finish QA then certification which takes a month or so. You can certainly test while production is online (I’ll add detail in that in a future post). Typical RPO is sub 30 seconds. Though depends on WAN bandwidth and write workload. But normal/common scenarios should be sub 30

Thanks for sharing good stuff with us. I have a quick question Cody regarding Active/Active In Pure. Can i have both POD and NON-POD Volumes in the same Array? We have A/A but I want to create Volumes and Use them outside the POD. Is this something we can do, or not recommended to have in an A/A setup. Thanks again

Yes definitely. We support volumes in an out of pods on the same array–all of our ActiveCluster customers have volumes that are in pods and not in pods at the same time

Hi Cody,

Thanks for this very nice explanation.

I have one question regarding Active/Active Non-Uniform configuration.In this configuration, Can I have hosts from different sites in same VMware cluster, so that if one site is down then VMs are automatically restarted to hosts in other site by VMware HA that does not require any manual intervention and after restart VMs continue to run using local volume on recovery site ?

Yes definitely! This is the most common deployment of ActiveCluster in fact–this is a situation called vMSC–we have a guide on it here:

https://support.purestorage.com/Solutions/VMware_Platform_Guide/User_Guides_for_VMware_Solutions/ActiveCluster_with_VMware_User_Guide/Web_Guide%3A_Implementing_vSphere_Metro_Storage_Cluster_With_ActiveCluster

Cody, What is the form of the replication being used between the flash arrays. I while back I had a discussion with a tech and he said the replication was snapshots. I clarified that I was speaking about flash block storage replication, he repeated snapshots. is that the same and was that true?