Note: This is another guest blog by Kyle Grossmiller. Kyle is a Sr. Solutions Architect at Pure and works with Cody on all things VMware.

VMware Tanzu is a game-changing piece of technology for numerous reasons, but probably the most transformational piece of it is also the most apparent – it provides the capability for the vCenter admin to give resources for both consumers of traditional virtual machines as well as Kubernetes/DevOps users from the same set of compute hosts and storage. This consolidation means that the vCenter admin can more easily see what is being allocated where, as well as gaining insight into what application(s) might be candidates to make the move into a container-based environment from a virtual machine.

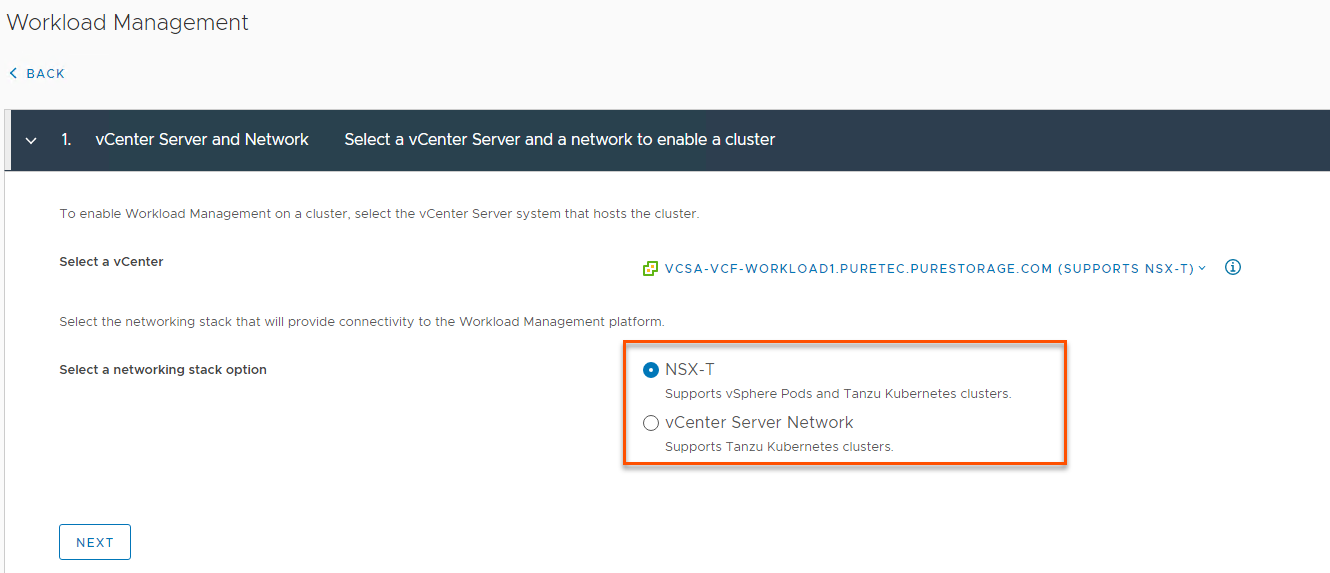

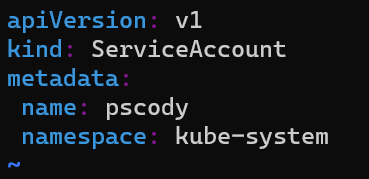

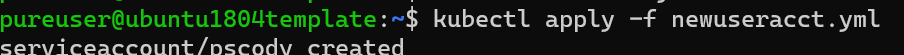

A Tanzu deployment is comprised of quite a few moving pieces and a central piece of this is durable storage made possible by persistent volumes. While container nodes and pods are ephemeral by nature (which is one of their major advantages), the data that they consume, produce and manipulate must be performant, portable and often, saved. So, there is obviously a different set of things we care about for persistent data vs the Kubernetes nodes that Tanzu runs in unison with here. For the remainder of this post we will show a couple of quick and easy ways you can change your persistent volumes to suit your application needs. There’s a bit of work and some choices to be made around getting a Tanzu environment up and running in vSphere, and I’d encourage you to check out the VMware Tanzu User Guide on our Pure Storage support site or Cody’s blog series to get some additional information.

With that being said, when a persistent volume is created via either dynamic or static provisioning, one of the first things the application developer needs to decide is what will happen to that volume and data when the application that uses it itself is no longer needed. The default behavior for an SPBM policy/storageclass assigned to a vSphere Namespace is to delete it, but through a simple kubectl patch command line, the persistent volume can be saved for future usage.

To make this change, first get the persistent volume name that you want to Retain/save:

$ kubectl get pv NAME CAPACITY RECLAIM POLICY STATUS pvc-f37c39fd 5Gi Delete Bound

Next, apply this kubectl command line to it to switch the reclaim policy from Delete to Retain:

$ kubectl patch pv (PV_Name) -p '{"spec":{"persistentVolumeReclaimPolicy":"Retain"}}'

So for our PV example:

$ kubectl patch pv pvc-f37c39fd -p '{"spec":{"persistentVolumeReclaimPolicy":"Retain"}}'

When we run the kubectl get pv command again, we can see it is set to Retain, so we are all set:

$ kubectl get pv NAME CAPACITY RECLAIM POLICY STATUS pvc-f37c39fd 5Gi Retain Bound

If there is anything close to a certainty in the storage world – it is that the longer a volume exists, the more full of data it will become. This becomes even more of a certainty if a persistent volume is retained and reused across multiple application instances for increasing amounts of time. In the vSphere and Supervisor cluster 7.0U2 release VMware has introduced the capability for Online Volume Expansion. What this means is that while in previous versions users had to unbind their persistent volume claim from a pod or node prior to resizing it (otherwise known as offline volume expansion) – now they are able to accomplish that same operation without that step . This is a huge advantage as the offline expansion required that the volume be effectively be taken out of service when additional space was added to it, which could lead to application downtime. With the online volume expansion enhancement that annoyance goes away completely.

Online volume expansion operation is really simple to do. This time we find the persistent volume claim (which is basically the glue between the persistent volume and the application) that we need to expand:

$ kubectl get pvcNAME STATUS VOLUME CAPACITY pvc-vvols-mysql Bound pvc-f37c39fd 5Gi

Now we run the following patch command against the PVC name we found above so that it knows to request additional storage for the persistent volume that it is bound to. In this case, we will ask to expand from 5Gi to 6Gi:

$ kubectl patch pvc pvc-vvols-mysql -p '{"spec": {"resources": {"requests":{"storage": "6Gi"}}}}'After waiting for a few moments for the expansion to complete, we look at the pvc in order to confirm we have the additional space that we asked for and we can see it has been added:

$ kubectl get pvc

NAME STATUS VOLUME CAPACITY

pvc-vvols-mysql Bound pvc-f37c39fd 6Gi Taking a closer look at the PVC via the describe command shows that it indeed increased the PV size while it remained mounted to the mysql-deployment node under the events section:

$ kubectl describe pvc

Name: pvc-vvols-mysql

Namespace: default

StorageClass: cns-vvols

Status: Bound

Volume: pvc-f37c39fd-dbe9-4f27-abe8-bca85bf9e87c

Labels: <none>

Annotations: pv.kubernetes.io/bind-completed: yes

pv.kubernetes.io/bound-by-controller: yes

volume.beta.kubernetes.io/storage-provisioner: csi.vsphere.vmware.com

volumehealth.storage.kubernetes.io/health: accessible

Finalizers: [kubernetes.io/pvc-protection]

Capacity: 6Gi

Access Modes: RWO

VolumeMode: Filesystem

Mounted By: mysql-deployment-5d8574cb78-xhhq5

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning ExternalExpanding 52s volume_expand Ignoring the PVC: didn't find a plugin capable of expanding the volume; waiting for an external controller to process this PVC.

Normal Resizing 52s external-resizer csi.vsphere.vmware.com External resizer is resizing volume pvc-f37c39fd-dbe9-4f27-abe8-bca85bf9e87c

Normal FileSystemResizeRequired 51s external-resizer csi.vsphere.vmware.com Require file system resize of volume on node

Normal FileSystemResizeSuccessful 40s kubelet, tkc-120-workers-mbws2-68d7869b97-sdkgh MountVolume.NodeExpandVolume succeeded for volume "pvc-f37c39fd-dbe9-4f27-abe8-bca85bf9e87c"

Those are just a couple of the ways we can update our persistent volumes to do what we need them to do within a Tanzu deployment, and we have really just scratched the surface with these few examples. To see how to do more advanced operations like migrating a persistent volume to a different Tanzu Kubernetes Cluster, please head over to our new Tanzu User Guide. Of course, it also is very important to mention that Portworx combined with Tanzu gives us even more features and functionalities like RBAC, automated backup and recovery and a whole lot more. Getting deeper into how Portworx interoperates with Tanzu is what I’m working on next so please stay tuned for some more cool stuff.