Previous posts in this series on Tanzu setup:

- Tanzu Kubernetes 1.2 Part 1: Deploying a Tanzu Kubernetes Management Cluster

- Tanzu Kubernetes 1.2 Part 2: Deploying a Tanzu Kubernetes Guest Cluster

- Tanzu Kubernetes 1.2 Part 3: Authenticating Tanzu Kubernetes Guest Clusters with Kubectl

The next step is storage: I want to be able to provision persistent storage in Tanzu Kubernetes. Storage is generally managed and configured through a specification called the Container Storage Interface (CSI). CSI was created to provide a consistent storage provisioning and management experience in an orchestrated container environment. There are a ton of different storage types (SAN, NAS, DAS, SDS, cloud, and so on) and roughly 100x that many vendors, and managing and interacting with each of them is different. Many people deploying and managing containers are not experts in any of these, and have neither the time nor the interest to learn them. And if you change storage vendors, do you want to change your entire k8s practice along with it? Probably not.

So CSI sits on top of a proprietary storage layer and maps it to a standard API, defined in the spec:

https://github.com/container-storage-interface/spec/blob/master/spec.md

Vendors can build a CSI driver against that spec: the driver manages their own storage underneath while presenting a consistent experience above it.
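To make the idea concrete, here is a minimal Python sketch of the pattern CSI enables. It models only two calls from the spec's Controller service, CreateVolume and DeleteVolume, as a plain Python interface rather than the real gRPC API. The in-memory "vendor" driver and the provision helper are hypothetical stand-ins for a real driver and for Kubernetes.

```python
import uuid
from abc import ABC, abstractmethod


class ControllerService(ABC):
    """Simplified stand-in for two RPCs of the CSI Controller service (not the real gRPC API)."""

    @abstractmethod
    def create_volume(self, name: str, capacity_bytes: int, parameters: dict) -> str:
        """Provision a volume and return its ID."""

    @abstractmethod
    def delete_volume(self, volume_id: str) -> None:
        """Remove a volume by ID."""


class InMemoryVendorDriver(ControllerService):
    """Hypothetical vendor driver: a real one would call its array's proprietary API here."""

    def __init__(self) -> None:
        self.volumes: dict = {}

    def create_volume(self, name, capacity_bytes, parameters):
        # CreateVolume should be idempotent on the name: asking again returns the existing volume.
        for volume_id, volume in self.volumes.items():
            if volume["name"] == name:
                return volume_id
        volume_id = str(uuid.uuid4())
        self.volumes[volume_id] = {
            "name": name,
            "capacity_bytes": capacity_bytes,
            "parameters": dict(parameters),
        }
        return volume_id

    def delete_volume(self, volume_id):
        # DeleteVolume should also be idempotent: deleting an unknown ID is not an error.
        self.volumes.pop(volume_id, None)


def provision(driver: ControllerService, claim_name: str, size_gib: int) -> str:
    """The orchestrator side: it only ever talks to the interface, never to a vendor API."""
    return driver.create_volume(claim_name, size_gib * 1024**3, {})


driver = InMemoryVendorDriver()
first = provision(driver, "pvc-demo", 10)
again = provision(driver, "pvc-demo", 10)
print(first == again)  # True: the retry returned the same volume
driver.delete_volume(first)
```

Changing storage vendors in this model means swapping the driver class; provision stays exactly the same, which is the point of CSI.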

At Pure Storage, for instance, we have our own CSI driver called Pure Service Orchestrator, which I will get to in a later series. For now, let’s get into VMware’s CSI driver, which is part of a broader offering called Cloud Native Storage (CNS).

https://blogs.vmware.com/virtualblocks/2019/08/14/introducing-cloud-native-storage-for-vsphere/

CNS has two parts: the CSI driver, which is installed on the k8s nodes, and the CNS control plane within vSphere itself, which selects and provisions the storage. It requires vSphere 6.7 U3 or later. A benefit of using TKG is that the various CNS components come pre-installed.

Normally the first step would be authentication. This is another benefit of using Tanzu: the authentication you already configured takes care of it automatically. Otherwise you would have to create a Kubernetes secret and apply it with kubectl:

https://vsphere-csi-driver.sigs.k8s.io/driver-deployment/installation.html#create_k8s_secret

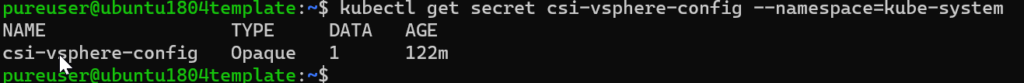

Running kubectl get secret shows the csi-vsphere-config secret.

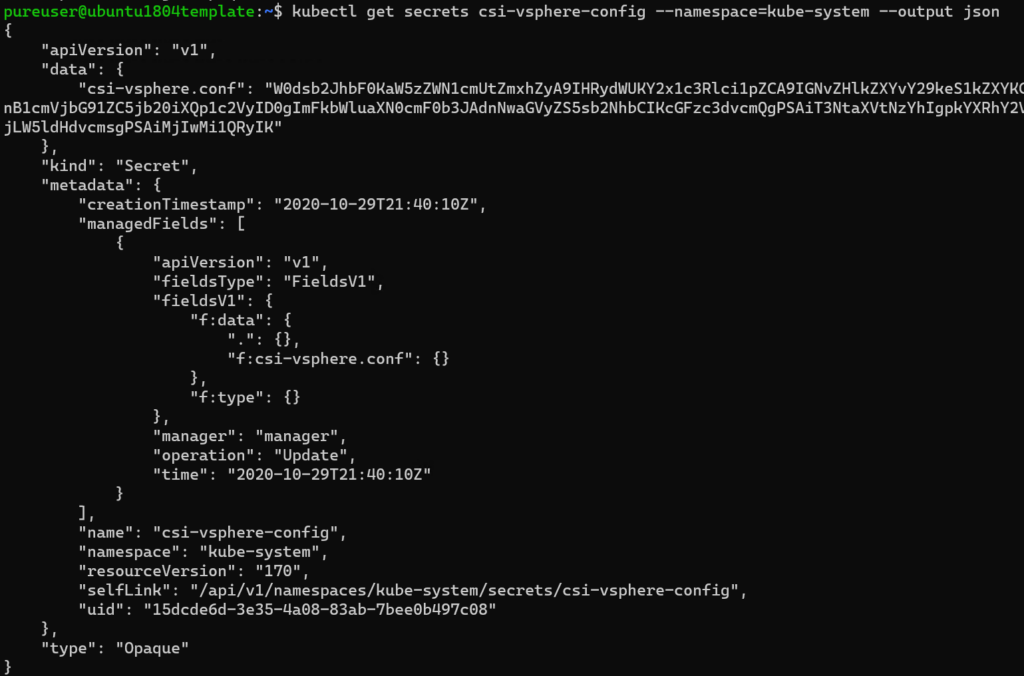

You can see further detail by outputting the secret as JSON or YAML (-o json or -o yaml).
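Secret values in that JSON output are base64-encoded, so they are not readable as-is. The Python sketch below decodes them and parses the embedded config file. It is illustrative only: the sample secret is a hypothetical, abridged stand-in for real kubectl output, and the kube-system namespace, the csi-vsphere.conf data key, and every value in it are assumptions, not taken from a real cluster.

```python
import base64
import configparser
import json

# Hypothetical, abridged stand-in for the output of:
#   kubectl get secret csi-vsphere-config -n kube-system -o json
# The namespace, the data key, and all values are illustrative assumptions.
SAMPLE_SECRET_JSON = json.dumps({
    "kind": "Secret",
    "metadata": {"name": "csi-vsphere-config", "namespace": "kube-system"},
    "data": {
        "csi-vsphere.conf": base64.b64encode(
            b'[Global]\ncluster-id = "example-cluster"\n\n'
            b'[VirtualCenter "vcenter.example.com"]\nuser = "example-user"\nport = "443"\n'
        ).decode("ascii"),
    },
})


def decode_secret(secret_json: str) -> dict:
    """Return every data entry of a Secret, base64-decoded to text."""
    secret = json.loads(secret_json)
    return {
        key: base64.b64decode(value).decode("utf-8")
        for key, value in secret.get("data", {}).items()
    }


def parse_conf(text: str) -> configparser.ConfigParser:
    """Parse the INI-style config file stored inside the secret."""
    parser = configparser.ConfigParser()
    parser.read_string(text)
    return parser


conf = parse_conf(decode_secret(SAMPLE_SECRET_JSON)["csi-vsphere.conf"])
print(conf.sections())  # ['Global', 'VirtualCenter "vcenter.example.com"']
print(conf["Global"]["cluster-id"])  # "example-cluster" (configparser keeps the quotes)
```

A real secret of this kind can also hold credentials, so avoid printing the decoded values anywhere they might be logged.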

There is a concept called a storage class. A storage class essentially describes a particular configuration of storage: gold, silver, bronze, replicated, flash, or really anything else. What a class describes depends on the underlying storage, though some standardized features are described in the spec.

https://kubernetes.io/docs/concepts/storage/storage-classes/

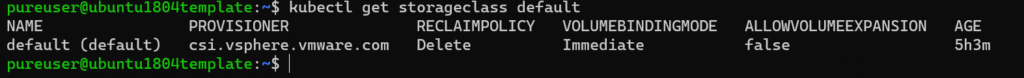

With the VMware CSI driver, storage classes map back to storage policies. There is a default storage class:

But it is good practice to create your own, to put some guardrails on where this storage goes.
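As a rough preview of that mapping (the actual walkthrough is in the next post), a StorageClass for the vSphere CSI driver points at a vSphere storage policy by name. The sketch below builds one as a Python dict and prints it as JSON, which kubectl also accepts. The provisioner csi.vsphere.vmware.com and the storagepolicyname parameter follow the vSphere CSI driver documentation; the class name tanzu-development is a hypothetical choice, and Tanzu-Development is the policy created later in this post.

```python
import json


def vsphere_storage_class(class_name: str, policy_name: str) -> dict:
    """Build a StorageClass manifest that maps to a vSphere storage policy by name."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        # Kubernetes object names must be lowercase, so the class name differs from the policy name.
        "metadata": {"name": class_name},
        "provisioner": "csi.vsphere.vmware.com",
        # Should match the name of an existing vSphere storage policy.
        "parameters": {"storagepolicyname": policy_name},
    }


manifest = vsphere_storage_class("tanzu-development", "Tanzu-Development")
print(json.dumps(manifest, indent=2))
```

The printed JSON could be piped to kubectl apply -f - to create the class.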

There are a few ways to do this. I will focus on the methods for external storage, which means tags, vVols, or both.

Tags

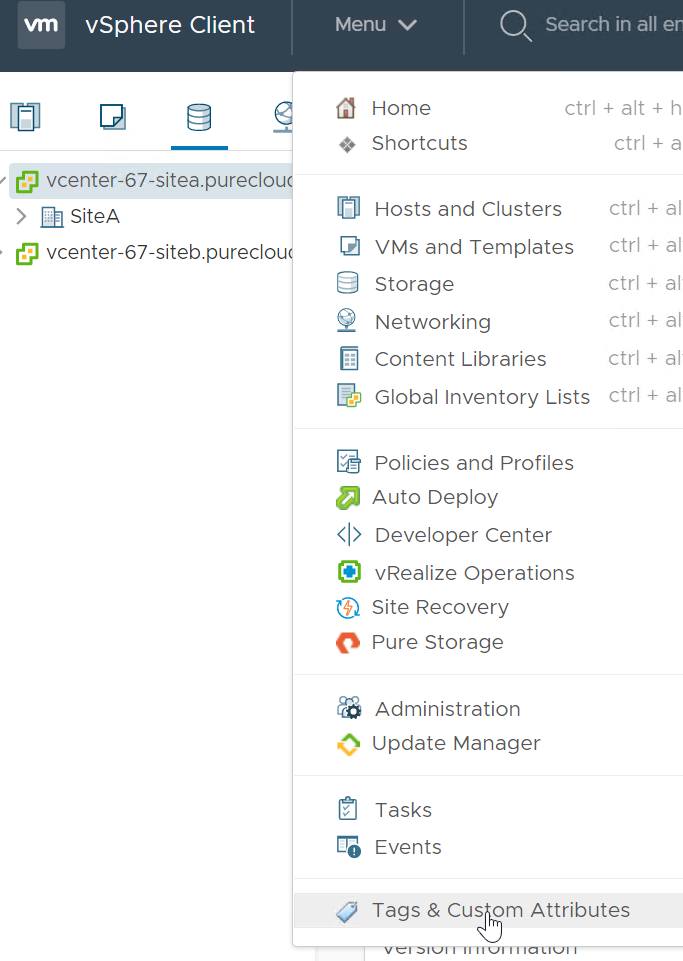

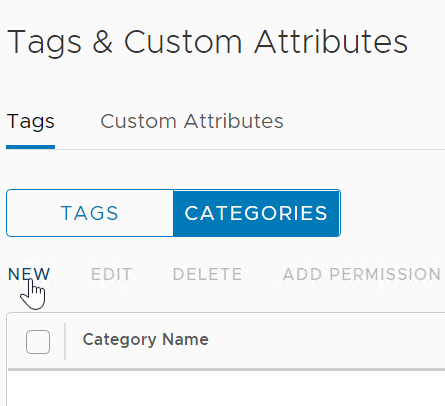

Back in the vSphere Client, click Home, then Tags & Custom Attributes.

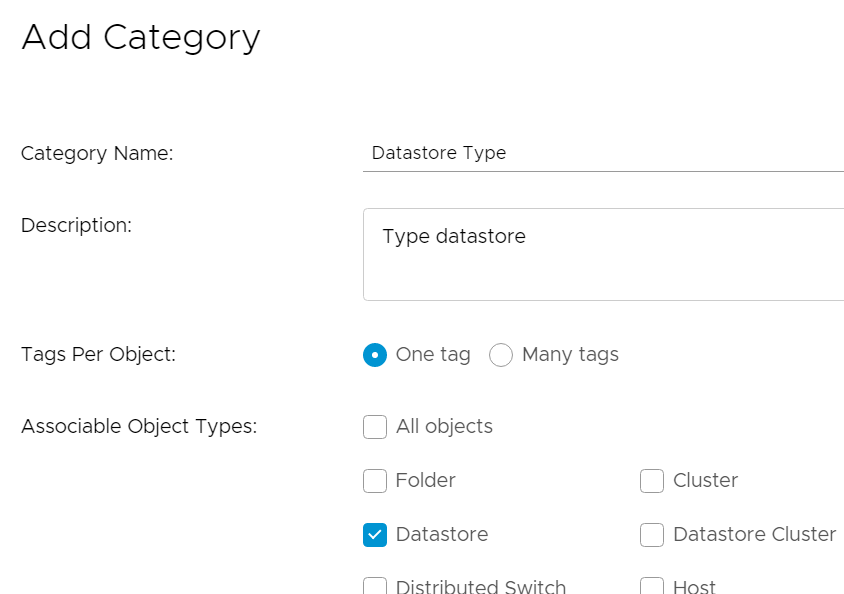

Click Categories, then New.

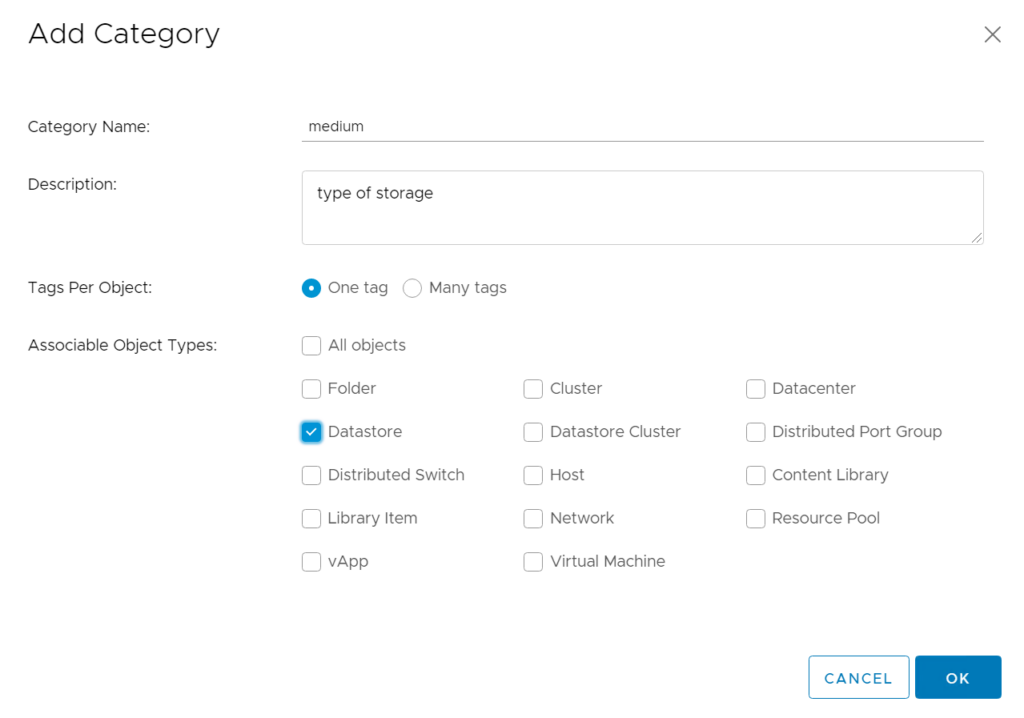

I am going to create a category called “medium”, whose tags will describe the type of storage medium underlying each datastore.

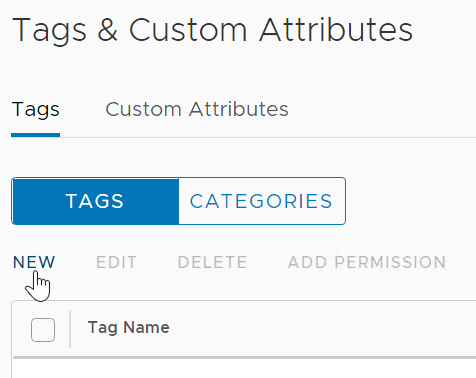

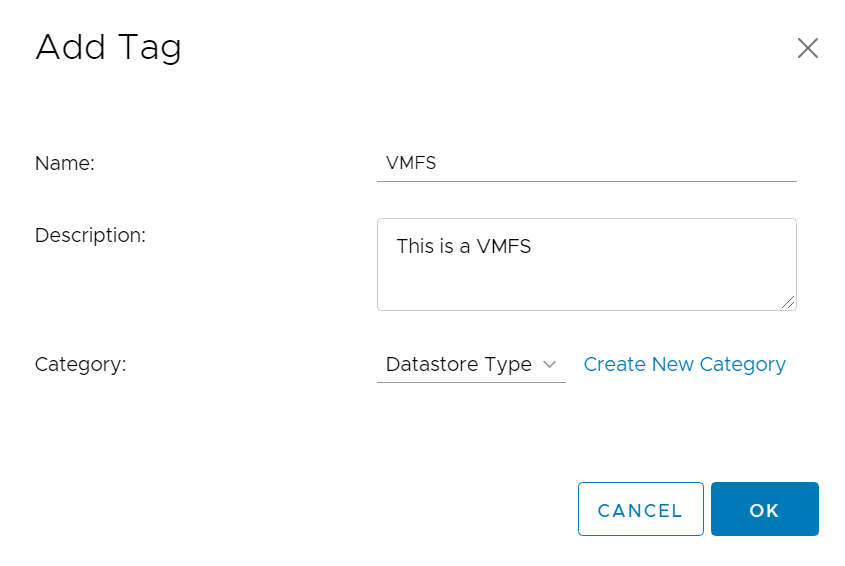

Click OK, then click Tags and click New.

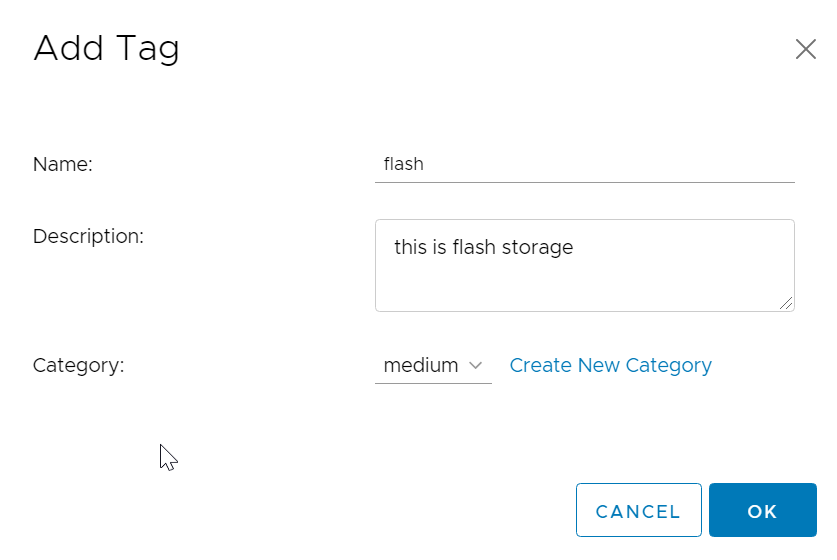

Then enter a name for the tag (in my case, flash) and choose the category you created.

I am also going to create a second category called Datastore Type, with a tag named VMFS.

Then the tag:

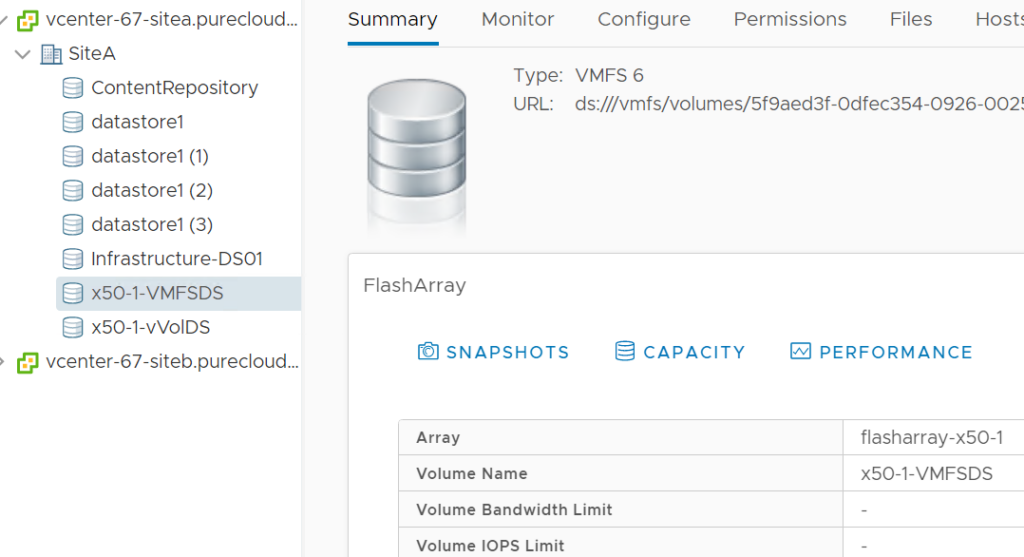

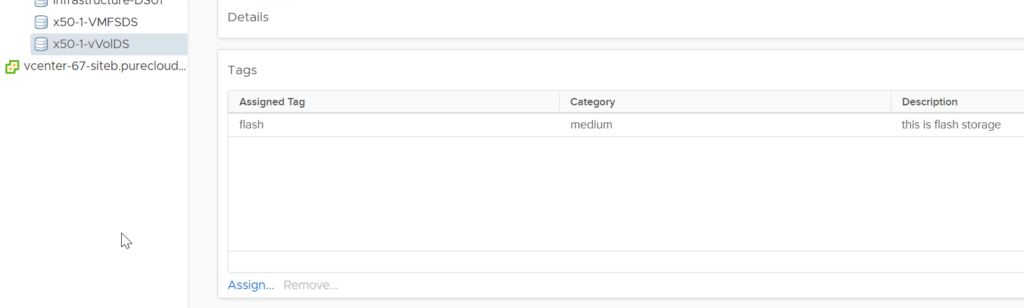

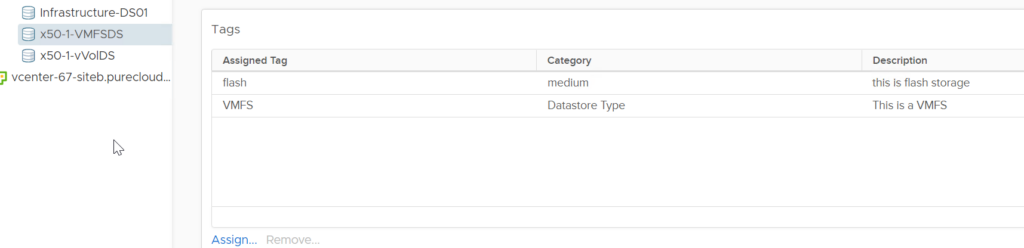

I have two datastores I want to use, a VMFS datastore called x50-1-VMFSDS and a vVol datastore called x50-1-vVolDS.

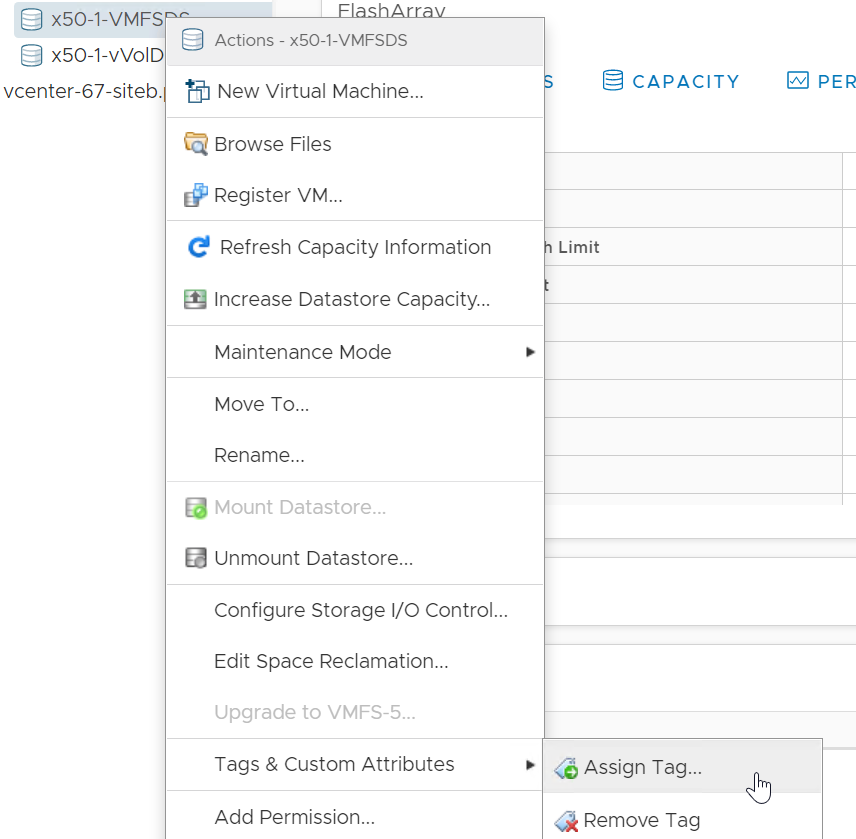

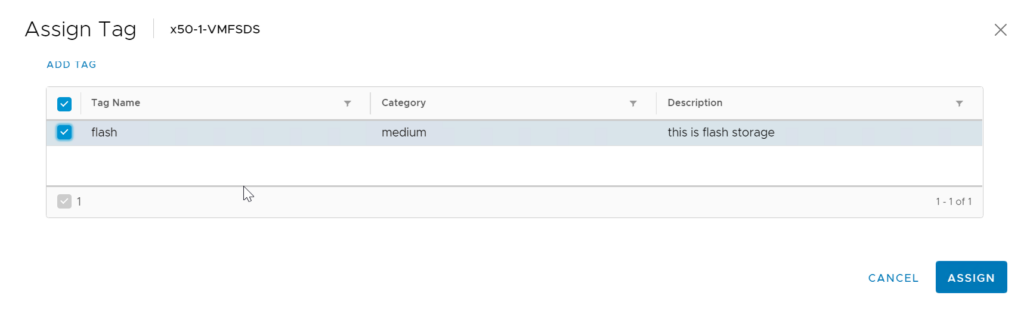

To tag a datastore, right-click it, choose Tags & Custom Attributes, then Assign Tag.

Choose your tag and click Assign.

I will repeat this for the vVol datastore, so both are now tagged with flash:

The VMFS datastore is also tagged with VMFS:

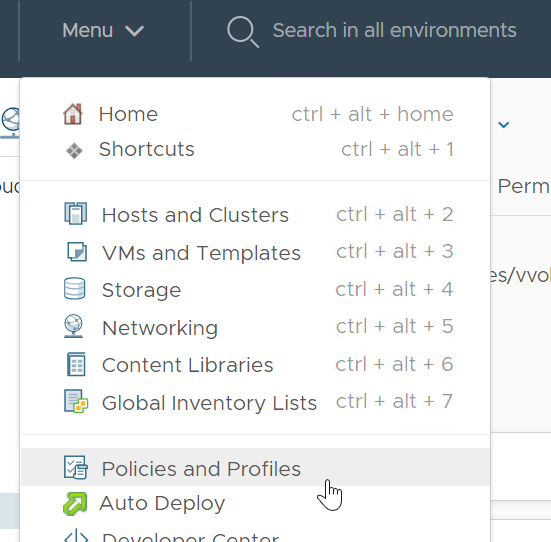

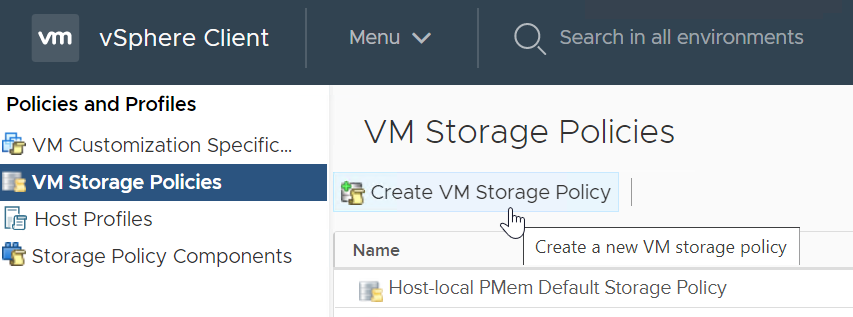

Now click the Home drop-down and go to Policies and Profiles.

Then click Create VM Storage Policy.

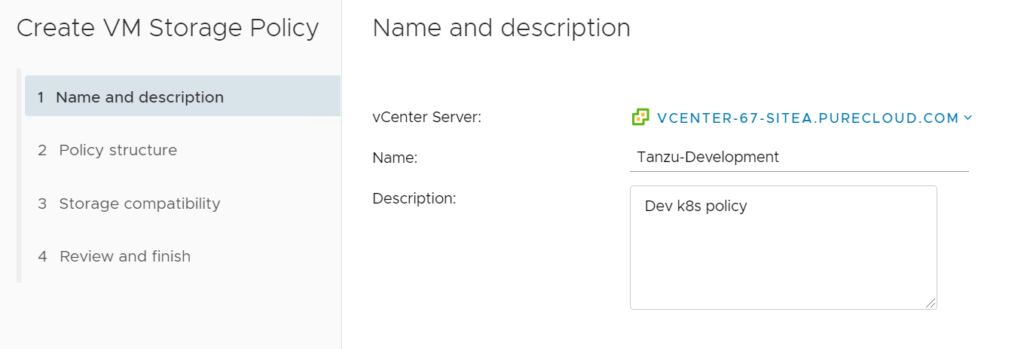

The first policy I will create is for my development/testing storage. I will call it Tanzu-Development.

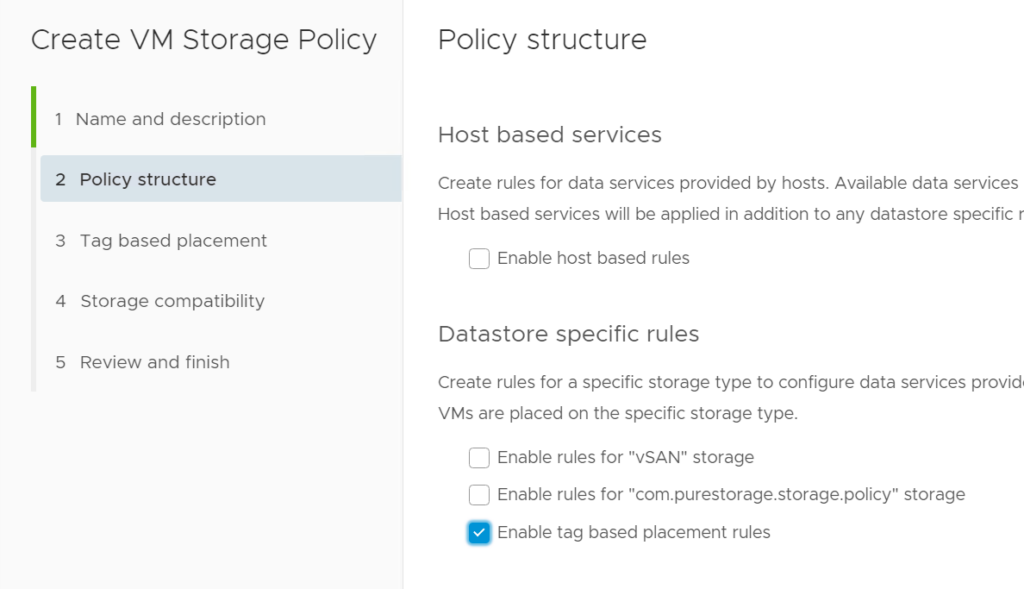

It will use tag-based rules only:

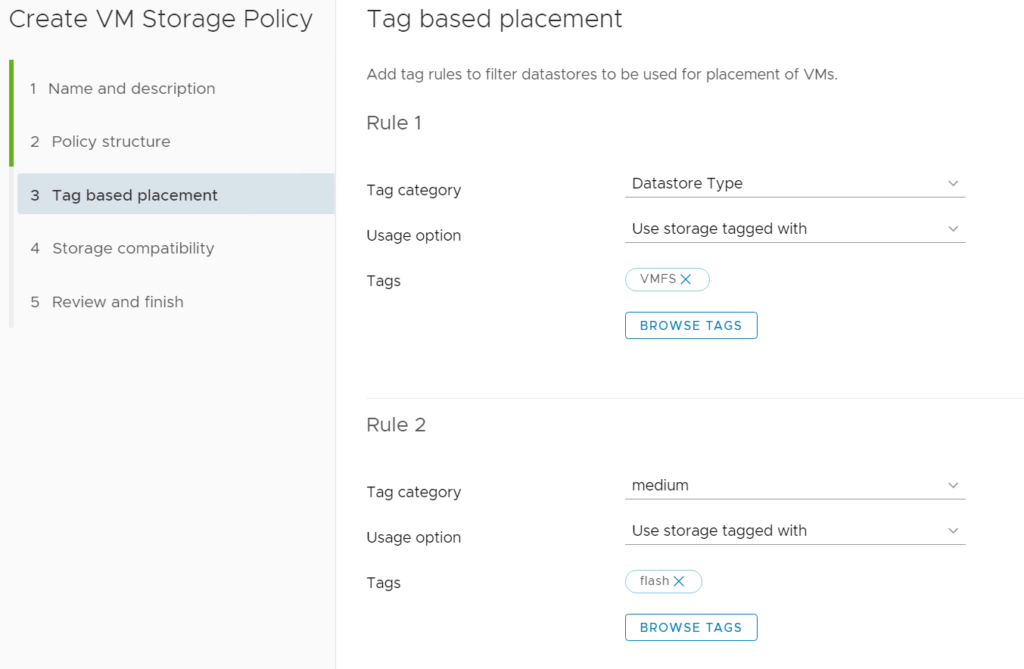

It has two rules: the datastore must be tagged with Datastore Type/VMFS and with medium/flash:

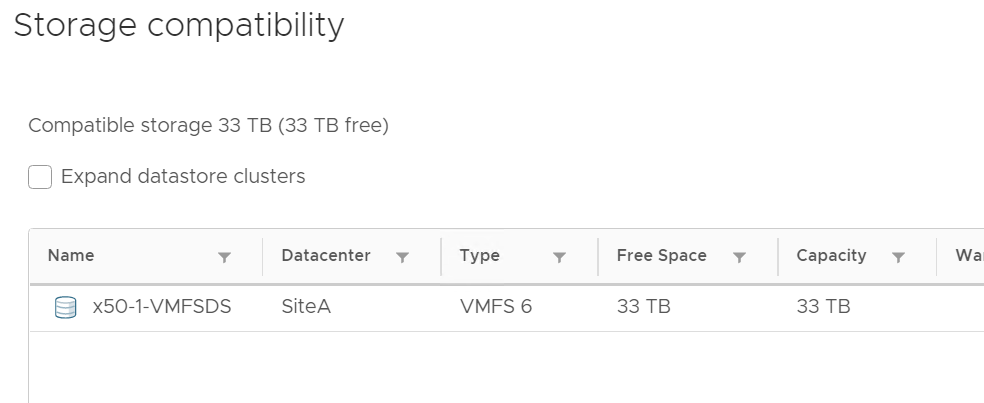

Click Next and you can see that only the VMFS datastore is compatible. When this policy is used, only compatible storage will be valid.

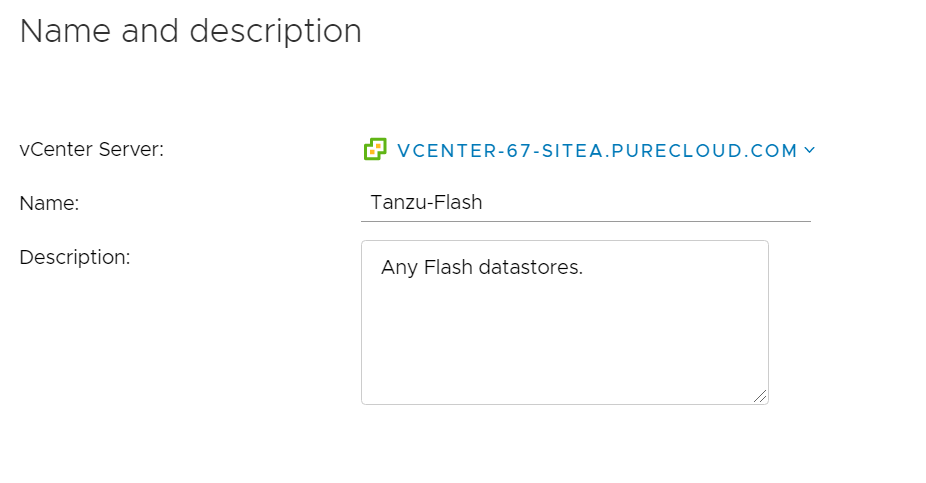

Next I will create another policy called Tanzu-Flash. This one is for containers that don’t care about underlying features, only that the storage is fast, which here means flash:

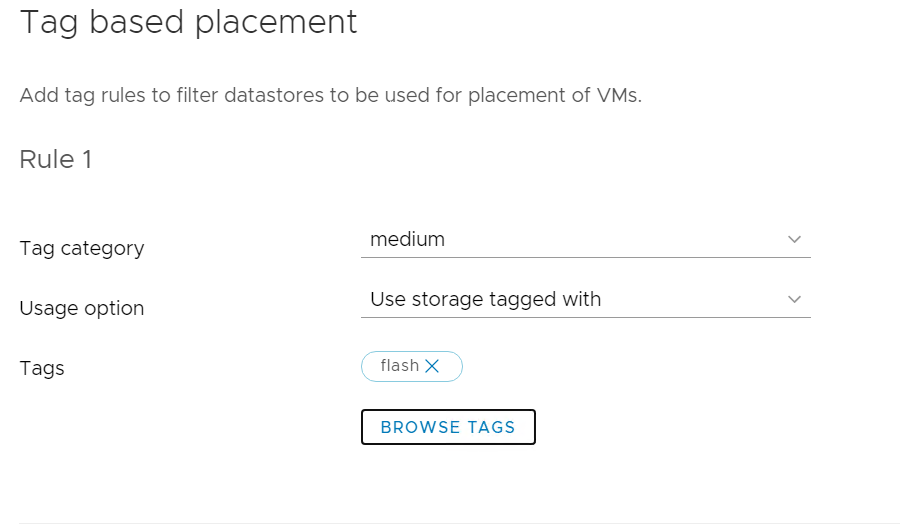

This policy has only one rule: the datastore must be tagged with medium/flash:

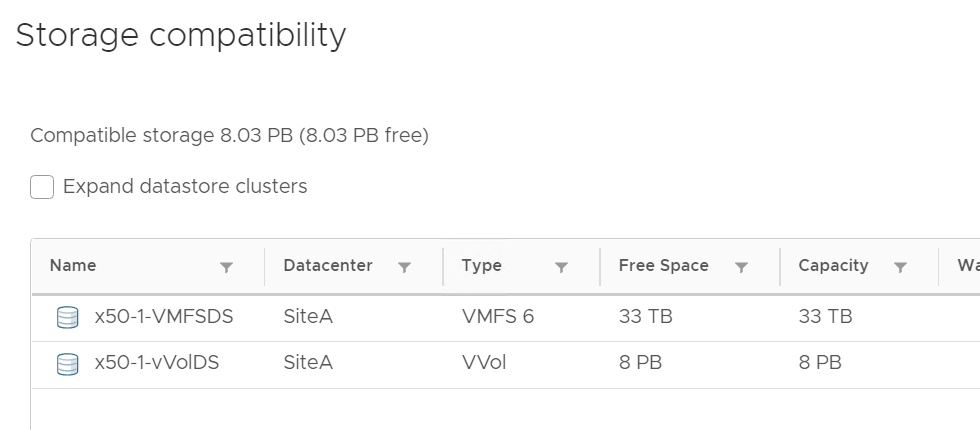

As you can see, both the vVol datastore and the VMFS datastore are valid:
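The compatibility check behind these two policies can be modeled in a few lines. This is a simplified sketch, not how vCenter implements it: each rule is treated as one required category/tag pair, and a datastore is compatible only if it carries every pair the policy asks for. The tag assignments and policy rules mirror the ones made in this walkthrough.

```python
from typing import Dict, FrozenSet, List, Tuple

Tag = Tuple[str, str]  # (category, tag name)

# Tag assignments from this walkthrough.
DATASTORE_TAGS: Dict[str, FrozenSet[Tag]] = {
    "x50-1-VMFSDS": frozenset({("medium", "flash"), ("Datastore Type", "VMFS")}),
    "x50-1-vVolDS": frozenset({("medium", "flash")}),
}

# Tag-based rules per storage policy; every rule must be satisfied.
POLICY_RULES: Dict[str, FrozenSet[Tag]] = {
    "Tanzu-Development": frozenset({("Datastore Type", "VMFS"), ("medium", "flash")}),
    "Tanzu-Flash": frozenset({("medium", "flash")}),
}


def compatible_datastores(rules: FrozenSet[Tag], tags: Dict[str, FrozenSet[Tag]]) -> List[str]:
    """Return datastores whose tags include every required (category, tag) pair."""
    return sorted(name for name, assigned in tags.items() if rules <= assigned)


for policy, rules in POLICY_RULES.items():
    print(policy, compatible_datastores(rules, DATASTORE_TAGS))
# Tanzu-Development ['x50-1-VMFSDS']
# Tanzu-Flash ['x50-1-VMFSDS', 'x50-1-vVolDS']
```

Note that the check trusts the labels completely: a tag placed on the wrong datastore would still match.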

vVols

Tags are great, and infinitely flexible. But there is a downside: are the tags even correct? Were they put on the right datastores in the first place? If they were, have any changes since made them inaccurate? Tags are flexible, but not intelligent, so you need to take care with them.

This is where vVols come in. With vVols, capabilities are not tags: the features and configuration are communicated entirely from the array to vCenter. If something changes so that a datastore no longer meets a policy, vCenter is informed and that datastore is no longer valid. If something changes that makes it valid again, it becomes valid again. The capabilities are not about how the datastore is configured, but about what the array that owns it offers: what the array can do with storage created on it, and how the array is configured right now.
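The difference from tags can be sketched as follows. In this simplified model, a vVol datastore holds no capability labels of its own; every compliance check asks the owning array what it currently offers, so a configuration change on the array shows up immediately. The class names and the capability key "replication" are hypothetical illustrations, not the VASA API or real Pure Storage capability names.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Array:
    """Hypothetical array that advertises its current capabilities (standing in for a VASA provider)."""
    name: str
    capabilities: Dict[str, object] = field(default_factory=dict)


@dataclass
class VVolDatastore:
    """A vVol datastore stores no labels; it defers to the array that owns it."""
    name: str
    array: Array

    def capabilities(self) -> Dict[str, object]:
        # Read live from the array on every call rather than cached on the datastore.
        return self.array.capabilities


def is_compliant(datastore: VVolDatastore, required: Dict[str, object]) -> bool:
    """A datastore is compliant only if the array currently offers every required value."""
    offered = datastore.capabilities()
    return all(offered.get(key) == value for key, value in required.items())


array = Array("flasharray-x50-1", {"replication": True})
datastore = VVolDatastore("x50-1-vVolDS", array)
policy = {"replication": True}

print(is_compliant(datastore, policy))  # True
array.capabilities["replication"] = False  # the array's configuration changes
print(is_compliant(datastore, policy))  # False, without anyone updating a tag
array.capabilities["replication"] = True  # and changes back
print(is_compliant(datastore, policy))  # True again
```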

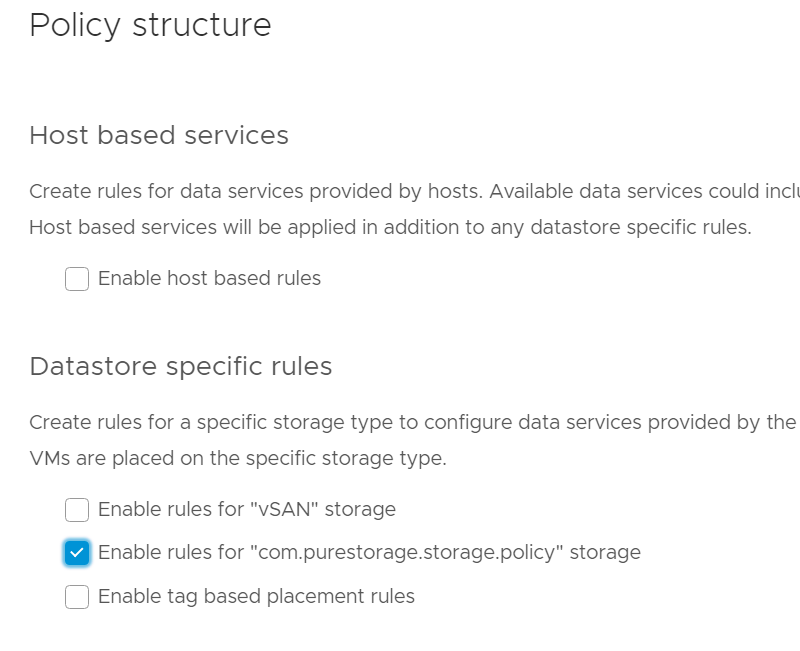

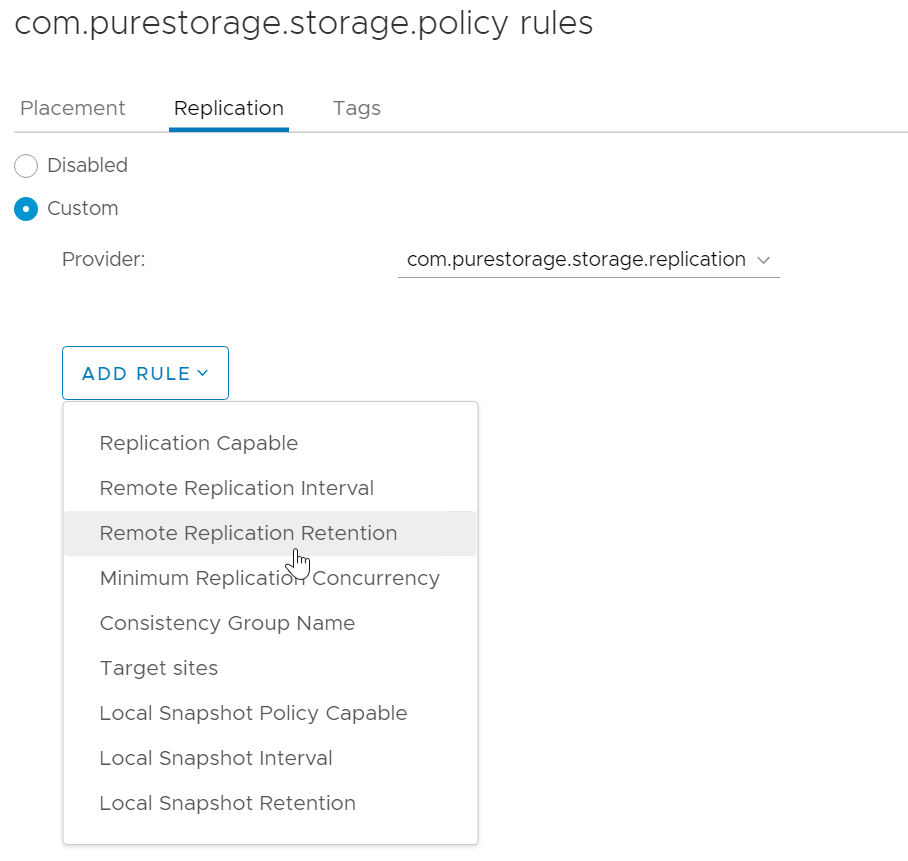

This time I choose the Pure Storage policy type.

There are a lot of options to choose from, but I will keep it simple for this walkthrough.

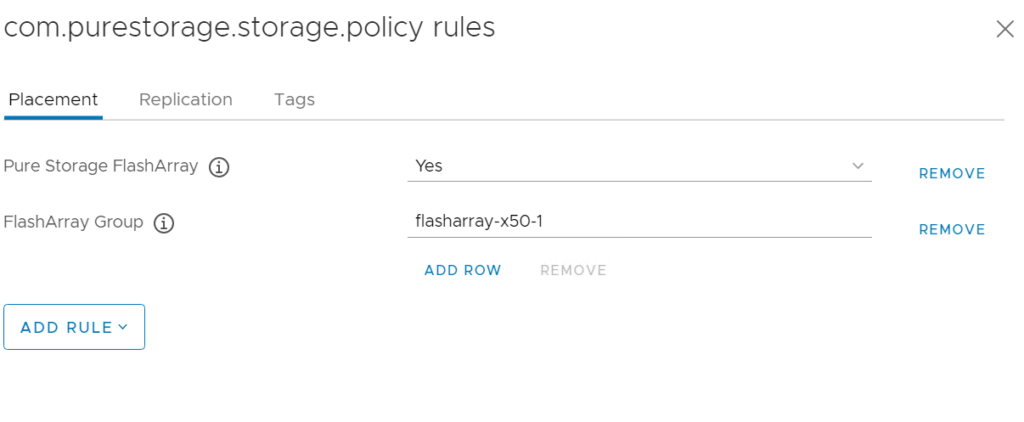

This rule says that only datastores on a Pure Storage FlashArray are valid, which implies flash. It also says that only datastores from a specific FlashArray are valid, in this case one called flasharray-x50-1.

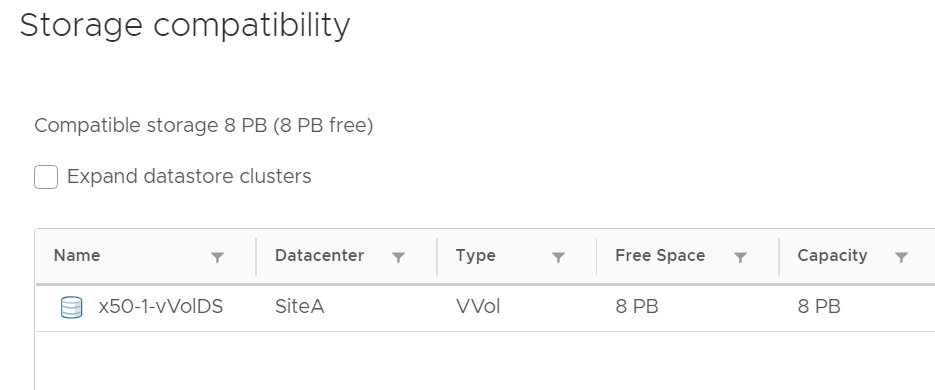

Since these are vVol capabilities, the policy also implies vVols only. It filters out any non-Pure vVol datastores, any non-vVol datastores, and any datastores not on this specific array.
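The filtering this policy performs can be sketched as a predicate over datastore properties. This is an illustrative model, not Pure Storage's or vCenter's implementation: the Datastore fields are hypothetical, the VMFS datastore is assumed to live on the same array, and the last two datastores are invented solely to show what gets filtered out.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Datastore:
    name: str
    kind: str        # "VMFS" or "vVol"
    vendor: str      # vendor of the array backing the datastore
    array_name: str  # array that owns the datastore


def matches_pure_policy(ds: Datastore, array_name: str) -> bool:
    """vVol only, Pure Storage only, and only from the named array."""
    return ds.kind == "vVol" and ds.vendor == "Pure Storage" and ds.array_name == array_name


DATASTORES: List[Datastore] = [
    Datastore("x50-1-VMFSDS", "VMFS", "Pure Storage", "flasharray-x50-1"),  # assumed same array
    Datastore("x50-1-vVolDS", "vVol", "Pure Storage", "flasharray-x50-1"),
    Datastore("other-vendor-vVolDS", "vVol", "Other Vendor", "other-array"),  # hypothetical
    Datastore("x50-2-vVolDS", "vVol", "Pure Storage", "flasharray-x50-2"),  # hypothetical
]

valid = [ds.name for ds in DATASTORES if matches_pure_policy(ds, "flasharray-x50-1")]
print(valid)  # ['x50-1-vVolDS']
```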

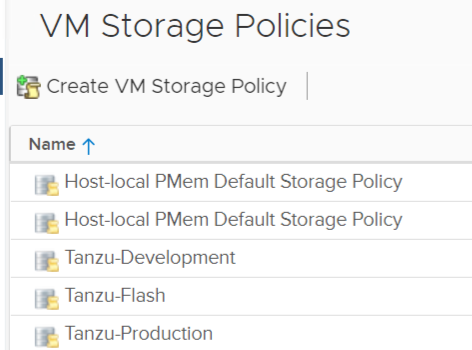

So now I have three “Tanzu” storage policies:

In the next post, I will instantiate these policies as storage classes within Tanzu.