I just posted about some new cmdlets here:

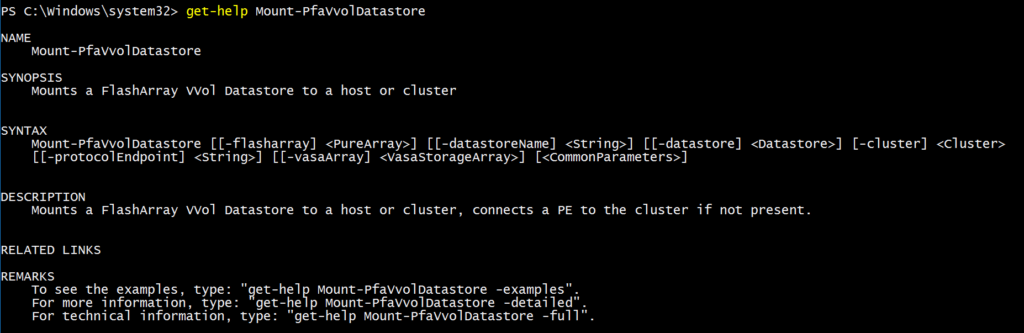

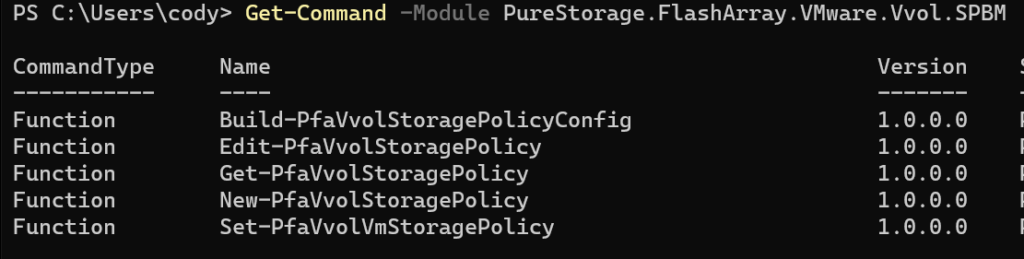

Also in that release are a few more cmdlets concerning storage policy creation, editing, and assignment. They were built to make the process easier–the original cmdlets and their use is certainly an option–and for very specific things you might want to do they might be necessary, but the vast majority of common operations can be more easily achieved with these.

As always, to install run:

Install-Module PureStorage.FlashArray.VMware

Or to upgrade:

Update-Module PureStorage.FlashArray.VMware

These modules are open source, so if you just want to use my code or open an RFE or issue go here:

https://github.com/PureStorage-OpenConnect/PureStorage.FlashArray.VMware/

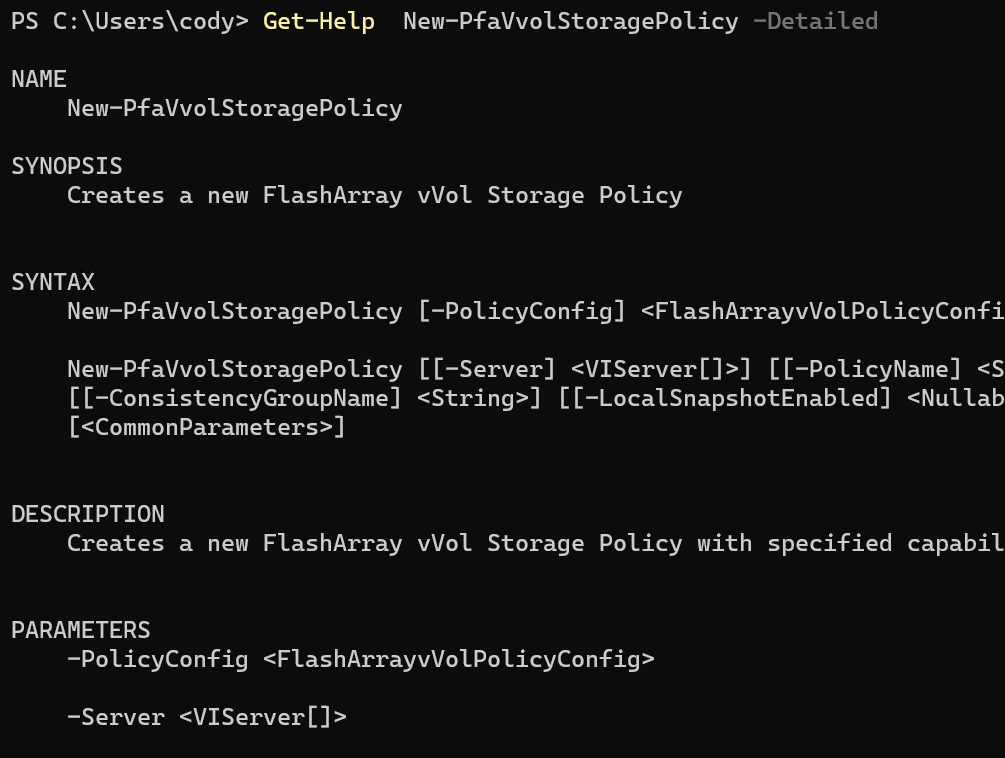

For detailed help on a cmdlet, run Get-Help