Note: This is another guest blog by Kyle Grossmiller. Kyle is a Sr. Solutions Architect at Pure and works with Cody on all things VMware.

In VMware Cloud Foundation (VCF) version 4.1, vVols have taken center stage as a Principal Storage type available for Workload Domain deployments. This inclusion in one of VMware’s premier products reinforces VMware’s continued emphasis on vVols and the benefits they enable. vVols with iSCSI is particularly exciting to us because this is the first time the iSCSI protocol has been supported as a Principal Storage type within VCF. We at Pure Storage are honored to have had a little bit of influence over this added functionality by serving as a design partner for the feature, and we are confident you are going to like what you see!

Someone who is using VMFS datastores with VCF today might ask themselves ‘why vVols’? This is a great question deserving of an expansive answer beyond this blog post. Fundamentally, though, using vVols enables you to fully use the FlashArray in the way it was intended. By leveraging VASA (vSphere APIs for Storage Awareness) you gain far more granular control and monitoring abilities over your individual VMs. Native FlashArray capabilities such as snapshots and replication are executed directly against the underlying array via policy-driven constructs. Further information on these and other benefits of vVols is available here.

Using vVols as Principal Storage follows much the same workflow that VCF customers already know from the pre-existing Principal Storage options: image an ESXi host, apply a few prerequisites to it, commission it to SDDC Manager and create Workload Domains. Deploying Workload Domains with VMware Cloud Foundation automates the deployment of vCenter and NSX-T, taking the guesswork out of it for modern use cases such as Kubernetes via Workload Management.

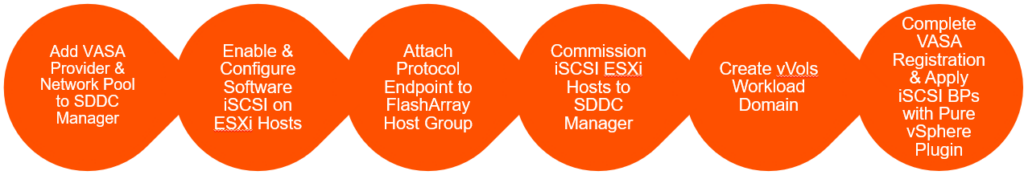

Stepping into some specifics for a moment, here’s the process for using FlashArray iSCSI and vVols for VCF Workload Domains:

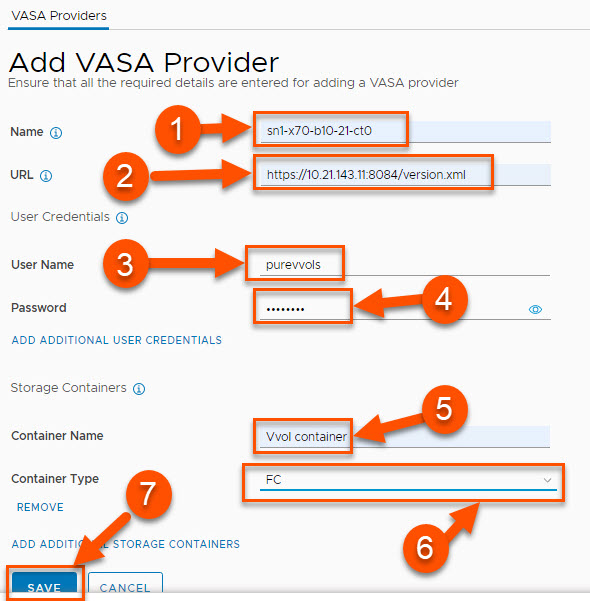

The most fundamental update to SDDC Manager to allow vVols is the capability to register a VASA Provider. The screenshot below and the detailed steps that follow show an example of how you can add a FlashArray using another block protocol, Fibre Channel:

- Provide a descriptive name for the VASA provider. We recommend using the FlashArray name with -ct0 or -ct1 appended to denote which controller the entry is associated with.

- Provide the URL for the VASA provider. This cannot be the management VIP of the array. Instead, this field needs to be the management IP address associated with one of the controllers. The URL must also have the VASA port and version.xml appended to it. The format for the URL is: https://<IP of FlashArrayController>:8084/version.xml

- Provide a FlashArray username with the arrayadmin role. The procedure for how to create such a user can be found here. While the pureuser account can be used, we recommend creating and using a separate FlashArray user for VASA operations.

- Provide the password for that FlashArray user.

- Container Name must be Vvol container. Note that this value is case-sensitive.

- For Container Type, select FC from the drop-down menu to use Fibre Channel.

- Once all entries are completed, click Save.
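As a rough illustration of the naming and URL conventions in the steps above, here is a minimal Python sketch that assembles the values you would type into the form. It does not call SDDC Manager or the FlashArray. The `VasaProviderEntry` class, the `build_vasa_entry` function, and the sample array name, IP address and username are all hypothetical and exist only for this example. Only the `-ct0`/`-ct1` suffix, port 8084, the `version.xml` path and the `Vvol container` name come from the steps themselves.

```python
from dataclasses import dataclass
import ipaddress

VASA_PORT = 8084                   # port from the URL format described above
CONTAINER_NAME = "Vvol container"  # case-sensitive, per the steps above


@dataclass(frozen=True)
class VasaProviderEntry:
    """Hypothetical holder for the form values (password deliberately omitted)."""
    name: str
    url: str
    username: str
    container_name: str
    container_type: str


def build_vasa_entry(array_name, controller, controller_ip, username,
                     container_type="FC"):
    """Assemble form values for one controller (0 or 1) of a FlashArray.

    controller_ip must be the management IP of that controller,
    not the management VIP of the array.
    """
    if controller not in (0, 1):
        raise ValueError("controller must be 0 (ct0) or 1 (ct1)")

    # ip_address() raises ValueError on a malformed address.
    ip = ipaddress.ip_address(controller_ip)
    # IPv6 literals need brackets inside a URL.
    host = f"[{ip}]" if ip.version == 6 else str(ip)

    return VasaProviderEntry(
        name=f"{array_name}-ct{controller}",
        url=f"https://{host}:{VASA_PORT}/version.xml",
        username=username,
        container_name=CONTAINER_NAME,
        container_type=container_type,
    )


if __name__ == "__main__":
    # Sample values are placeholders, not real infrastructure.
    entry = build_vasa_entry("flasharray-a", 0, "10.0.0.10", "vasa-user")
    print(entry.name)
    print(entry.url)
```

Generating one entry per controller this way keeps the names and URLs consistent if you later register the second controller as well.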

Obviously, there’s a lot more to share here so we will be expanding on this substantially in the very near future on our VMware Platform Guide site.

Rounding out this post, I’m happy to show a demo video of just how easy it is to deploy an FC+vVols-based Workload Domain with VMware Cloud Foundation.