VVols have been gaining quite a bit of traction of late, which has been great to see. I truly believe they solve a lot of problems that have traditionally been faced in VMware environments, and in infrastructure in general. That being said, as anything gets adopted at scale, some people inevitably run into problems setting it up.

The main issues stem from the fact that VVols are presented and configured differently than VMFS, so when someone runs into a problem, they often do not know exactly where to start.

The issues usually fall into one of the following areas:

- Initial Configuration

- Registering VASA

- Mounting a VVol datastore

- Creating a VM on the VVol datastore

I intend for this post to be a living document, so I will update this from time to time.

One more thing to note: everything here is based on the VVol implementation on the Pure Storage FlashArray. If you are using a different vendor, the exact solutions may vary somewhat, but this should still give you a general idea of where to start.

NOTE: From this point on, I am going to assume that you are (for whatever reason) not using our vSphere plugin to set things up, or that you ran into issues and want or need to do it manually. If you used the plugin and it failed, the sections below cover the likely reasons why.

Initial Configuration

The initial (environmental) configuration is often the cause.

For the FlashArray, we support only VASA version 3, so you must be on vSphere 6.5 or later end to end. This means vCenter 6.5 or later and ESXi 6.5 or later. Not every host in vCenter must be on 6.5, of course, but the hosts that you want to use with VVols must be. So check this first.

Furthermore, you must be on Purity 5.x or later to get VVol support. If your array is on an older release, contact Pure Storage support to upgrade.
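If you are checking many hosts, the version rules above are easy to script. Below is a minimal Python sketch, assuming you have already collected the version strings yourself (from the vSphere Client, the ESXi host, and the FlashArray GUI). The names parse_version, check_prereqs, and MINIMUMS are hypothetical helpers, not part of any Pure or VMware tool; the minimums simply restate the text above. Run it once per ESXi host you want to use with VVols.

```python
import re

# Minimum versions taken from the text above (vSphere 6.5+ end to end, Purity 5.x+).
MINIMUMS = {"vCenter": (6, 5), "ESXi": (6, 5), "Purity": (5, 0)}

def parse_version(text):
    """Turn '6.7.0' into (6, 7, 0); anything after the first space (e.g. 'U2') is ignored."""
    words = text.split()
    if not words:
        return ()
    return tuple(int(n) for n in re.findall(r"\d+", words[0]))

def check_prereqs(versions):
    """Return a list of problems; an empty list means the minimums are met."""
    problems = []
    for component, minimum in MINIMUMS.items():
        found = versions.get(component)
        if found is None:
            problems.append(f"{component}: version not supplied")
        elif parse_version(found) < minimum:  # tuple comparison: (6, 0, 0) < (6, 5)
            wanted = ".".join(str(n) for n in minimum)
            problems.append(f"{component} {found} is below {wanted}")
    return problems

# Example: vCenter and Purity are fine, but this ESXi host is too old for VVols.
print(check_prereqs({"vCenter": "6.7.0", "ESXi": "6.0.0", "Purity": "5.1.2"}))
# ['ESXi 6.0.0 is below 6.5']
```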

Registering VASA

On the FlashArray, the VASA providers are built into the controllers, so there is nothing to install or configure. As long as you are on Purity 5.x or later, you are good to go.

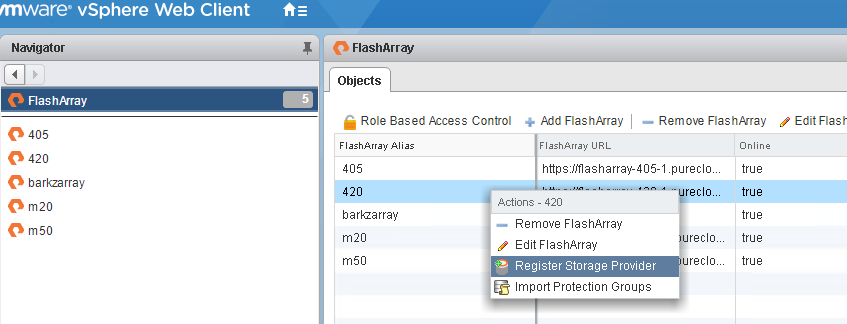

But you do need to register VASA with vCenter–you can do this manually if you want, or you can use tools like our vSphere Plugin or our vRealize Orchestrator plugin.

If this fails, there are a few possible reasons.

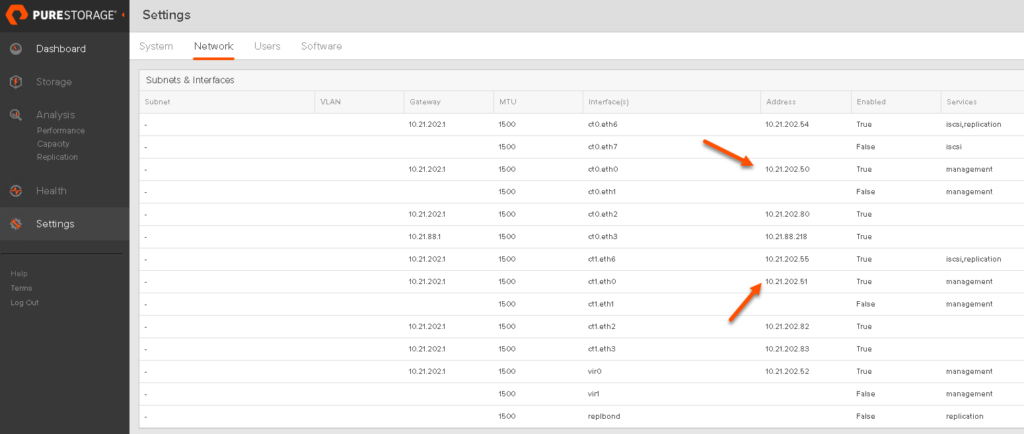

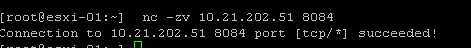

First off, your vCenter must have management IP connectivity to both of the FlashArray controllers, meaning it must be able to reach their management IPs over TCP port 8084. There is a fairly simple way to check this. First, get the management IPs of your FlashArray from the FlashArray GUI (or CLI); generally these will be CT0.ETH0 and CT1.ETH0:

So for my first VASA provider it is 10.21.202.50.

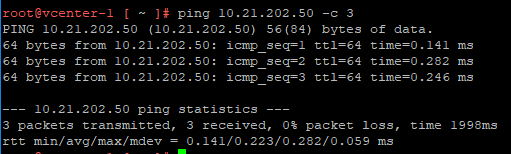

If I SSH into my vCenter, I can check for connectivity. The first check is basic network connectivity, and the simplest way to test that is a ping.

If this fails, you have some type of networking issue between vCenter and your array management ports, such as a VLAN misconfiguration or a firewall.

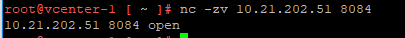

If that works but registration still fails, also confirm that vCenter can reach the VASA provider on the right port, which is TCP 8084. You can use the nc command for this:

nc -z <vasa IP> 8084

I recommend adding the -v parameter so that nc actually reports the result. If the port is reported as anything other than open, there is a firewall issue between vCenter and the FlashArray, so verify that path within your network.
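If nc is not handy, the same TCP test can be done with Python's standard library. This is a minimal sketch, assuming Python 3 is available on the machine you are testing from; port_is_open is a hypothetical helper name. Like nc -z, it only confirms that a TCP connection to the port can be opened; it says nothing about whether VASA registration itself will succeed.

```python
import socket

def port_is_open(host, port=8084, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds (roughly what `nc -z` checks)."""
    try:
        # create_connection resolves the address and completes the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, unreachable networks, and bad addresses.
        return False
```

For example, running port_is_open("10.21.202.50") from vCenter should return True if the first controller's VASA provider is reachable; repeat it for the second controller's management IP.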

If you are not using the plugin, the issue could be one of those, or you might simply be typing the address incorrectly. Remember that it needs to be in the form of:

https://<IP of controller ETH0>:8084

Do not use the virtual IP, do not use a DNS name.
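To catch the address mistakes above before registering, here is a small Python sketch that builds one provider URL per controller from the two management IPs. It is only an illustration: vasa_provider_url and vasa_provider_urls are hypothetical names, and it assumes IPv4 management addresses. It rejects DNS names, but it cannot tell a virtual IP from a controller IP, so you still need to pick the CT0 and CT1 ETH0 addresses yourself.

```python
import ipaddress

def vasa_provider_url(controller_ip, port=8084):
    """Build https://<IP>:8084 from a controller management IP."""
    # IPv4Address raises ValueError (AddressValueError) for DNS names such as
    # "array01.example.com", matching the "do not use a DNS name" rule.
    addr = ipaddress.IPv4Address(controller_ip.strip())
    return f"https://{addr}:{port}"

def vasa_provider_urls(ct0_eth0_ip, ct1_eth0_ip):
    """Return one URL per controller; each controller runs its own VASA provider."""
    if ct0_eth0_ip.strip() == ct1_eth0_ip.strip():
        raise ValueError("CT0 and CT1 need different management IPs")
    return [vasa_provider_url(ct0_eth0_ip), vasa_provider_url(ct1_eth0_ip)]
```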

Mounting a VVol Datastore

The next most common issue is that a VVol datastore does not mount properly.

vSphere Plugin Fails to Mount Datastore

You can use the vSphere plugin to do this or use vCenter directly. The plugin is the recommended option because it automates a few steps, most importantly presenting the protocol endpoint (PE). The PE is what provides physical access to the array for VVols (the VVol datastore itself is essentially just a capacity limit).

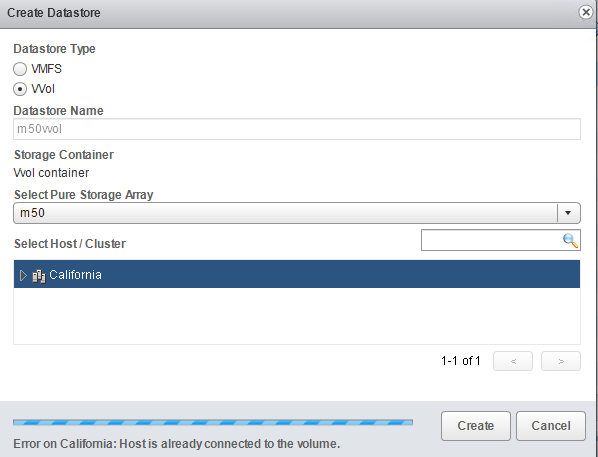

If the plugin fails with the following error:

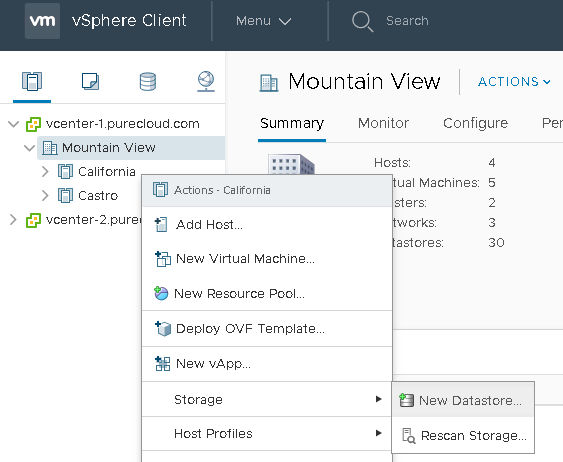

That means that the protocol endpoint has already been connected to the corresponding host on the FlashArray. In this case you do not need to use the plugin to mount the VVol datastore, you can just do it manually, by right-clicking on the host or cluster and choosing New Datastore.

Let’s look at some issues that can occur when mounting a VVol datastore manually.

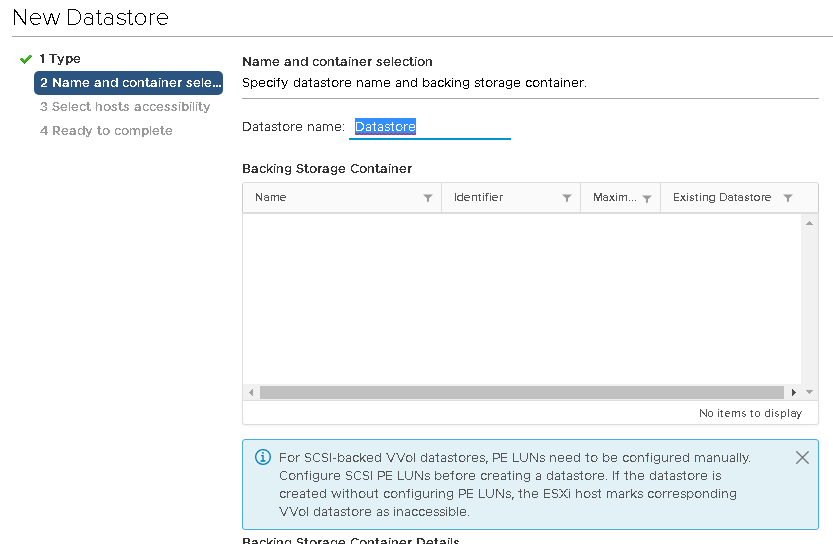

VVol Datastore does not show up

If you choose New Datastore in the vSphere Client and nothing shows up under the VVol datastore listing, this means one of two things:

- You did not register the VASA provider(s)

- You already mounted the available VVol datastores

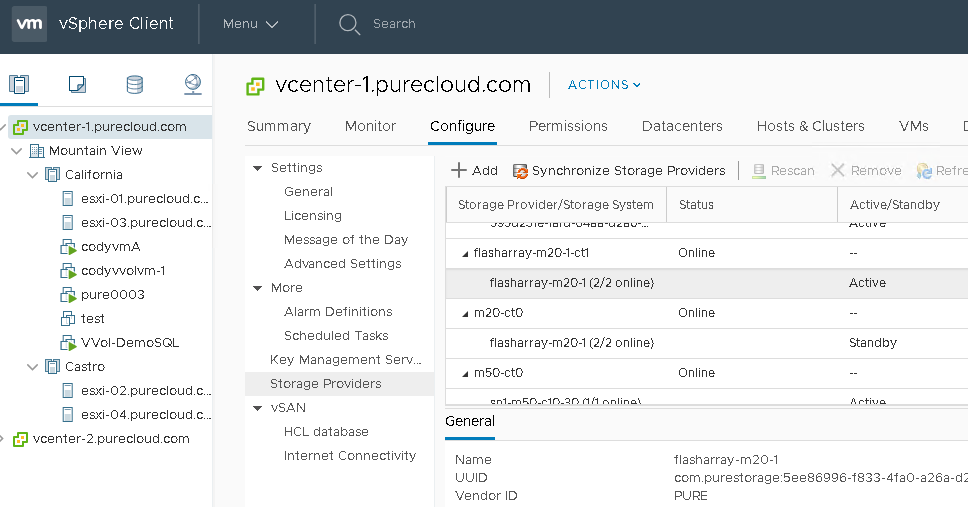

In the case of the first issue, just go to your Hosts and Clusters view, then click on your vCenter, then the Configure tab, and then Storage Providers. Make sure that the respective VASA providers are registered and online.

VVol Datastore is Inaccessible

In order for an ESXi host to be able to access and use a VVol datastore, you need two things:

- The host must have a protocol endpoint from that array presented to it.

- The host must be able to talk to the VASA provider

If the host is missing either of these, it will not be able to provision to that VVol datastore, and the VVol datastore will be marked as inaccessible to that host.
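The rule above is an AND: a host needs both a PE and a working path to the VASA provider. As a rough illustration, assuming you have already gathered those two facts for each host yourself (the function name and the input format here are hypothetical), a triage helper could look like this:

```python
def inaccessible_hosts(observations):
    """Map each host to the reasons it would see the VVol datastore as inaccessible.

    observations: {host_name: (pe_presented, vasa_reachable)}, gathered by hand.
    Hosts that meet both requirements are left out of the result.
    """
    report = {}
    for host, (pe_presented, vasa_reachable) in observations.items():
        reasons = []
        if not pe_presented:
            reasons.append("no protocol endpoint from this array is presented")
        if not vasa_reachable:
            reasons.append("cannot reach the VASA provider on TCP 8084")
        if reasons:  # only report hosts missing at least one requirement
            report[host] = reasons
    return report

print(inaccessible_hosts({"esxi-01": (True, True), "esxi-02": (False, True)}))
# {'esxi-02': ['no protocol endpoint from this array is presented']}
```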

A host may not have access to a protocol endpoint for a variety of reasons.

Is the PE actually connected?

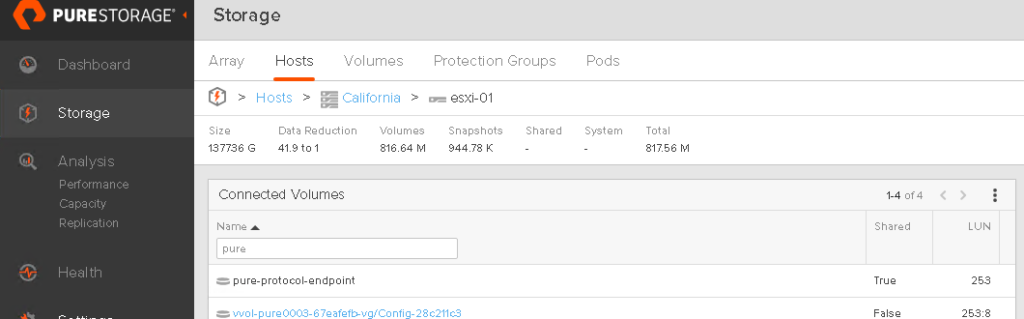

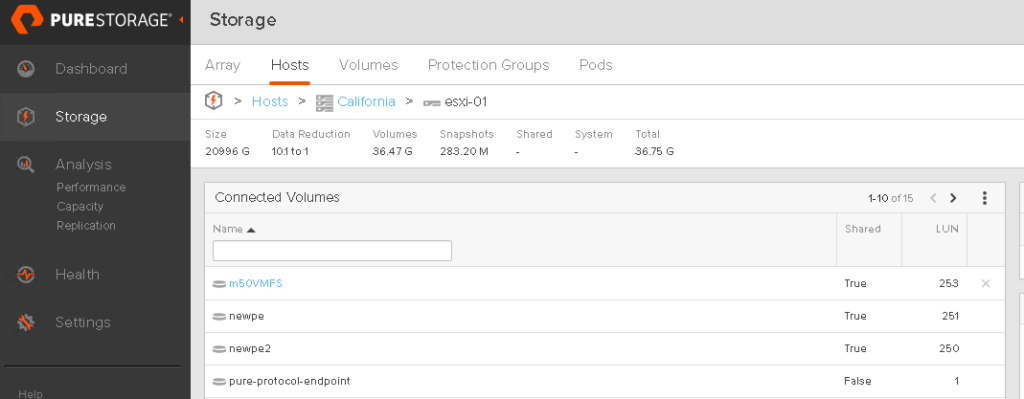

First off, the PE may not actually be connected to that host on the array. Verify this in the Pure GUI (or CLI): it should be listed under “Connected Volumes”.

Generally, this volume will be called pure-protocol-endpoint, which is the one created automatically, though some users might create their own (or rename this one). You can tell that one or more PEs are presented to a host if the volume name is black and not clickable.

If one is not connected, connect it. Currently you can only connect a PE through the CLI or REST (not the GUI). The CLI options are below: connect it either to an individual host or to the entire host group (preferable).

purehost connect <host name> <PE name>

OR:

purehgroup connect <host group name> <PE name>

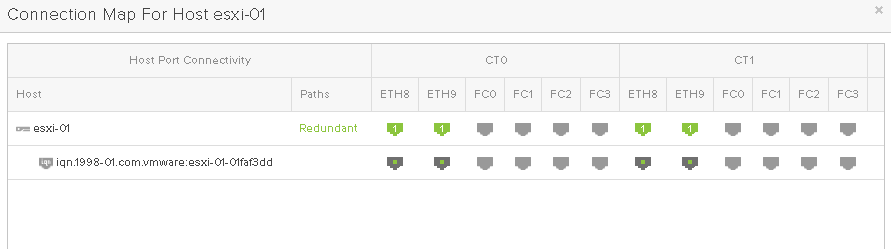

Is the host connected?

Just because the host has a PE connected to it on the FlashArray does not mean things are ready. Make sure the host is physically connected as well. For Fibre Channel, is zoning done? For iSCSI, are the iSCSI targets added to the ESXi host?

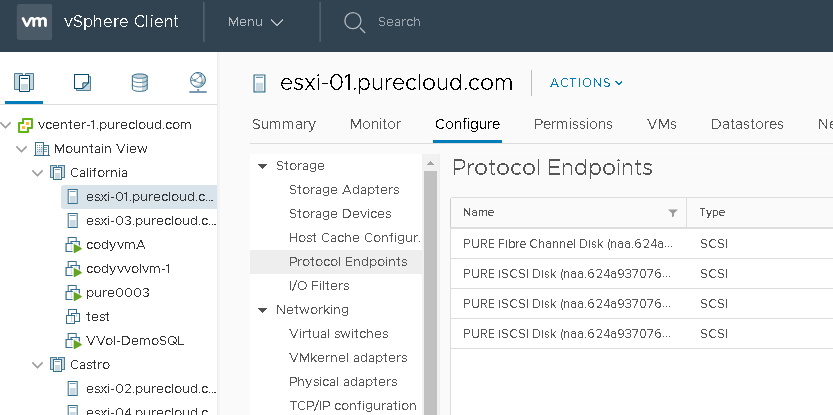

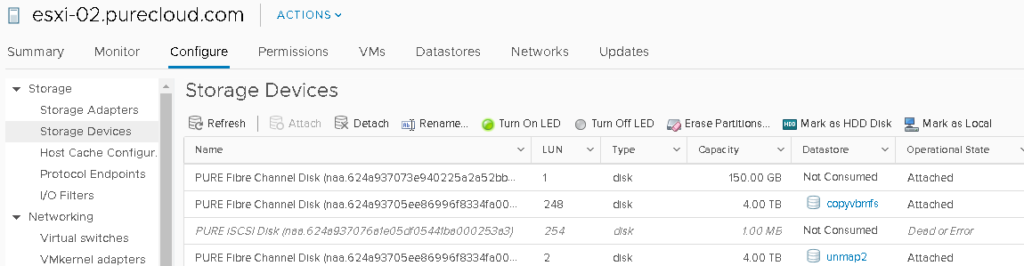

Is the PE properly seen in ESXi?

If the above checks are good, make sure that the protocol endpoint is configured correctly in ESXi. By default it should be, but every so often things get changed.

If a protocol endpoint is in use, it will be listed under the Protocol Endpoints panel in the vSphere Client for a host.

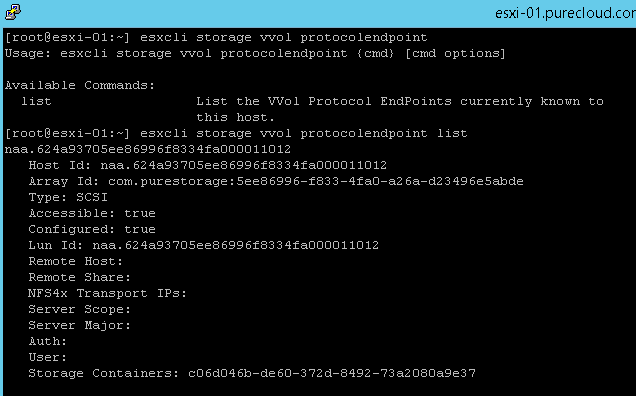

Or via the CLI in ESXi:

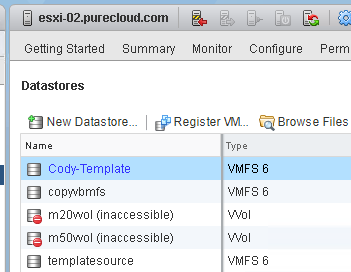

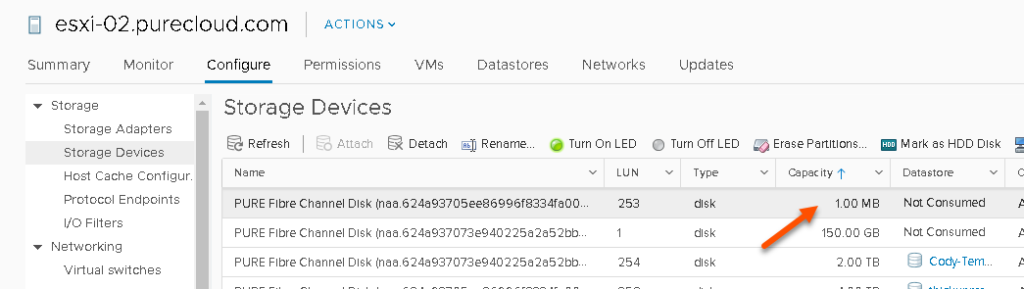

esxcli storage vvol protocolendpoint list

If the PE is not in use (meaning there is no VVol datastore on that host from the same array as that PE), it will not be listed in either place. You can still verify that it is present by looking in the standard storage devices view:

A quick way to check whether it is there is to sort by capacity: PEs are 1 MB.

Also make sure the PE is in the “Attached” state. If it is “Dead or Error”, access to the PE has been lost, so try a rescan, check your zoning or iSCSI configuration, or possibly reboot the ESXi host.
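If you export the device list from a host into a script, the size-and-state check above can be automated. The sketch below assumes a hypothetical list of dictionaries with name, size_mb, and state keys that you build yourself; it is not the output format of any particular esxcli command. It treats every 1 MB device as a PE candidate, per the rule of thumb above.

```python
PE_SIZE_MB = 1  # per the text above, FlashArray PEs show up as 1 MB devices

def find_protocol_endpoints(devices):
    """Split 1 MB devices into (attached, needs_attention) lists of device names."""
    attached, needs_attention = [], []
    for dev in devices:
        if dev["size_mb"] != PE_SIZE_MB:
            continue  # data volumes are normally far larger than 1 MB
        if dev["state"].strip().lower() == "attached":
            attached.append(dev["name"])
        else:
            # e.g. "Dead or Error": rescan, check zoning/iSCSI, possibly reboot
            needs_attention.append(dev["name"])
    return attached, needs_attention

devices = [
    {"name": "pe-a", "size_mb": 1, "state": "Attached"},
    {"name": "datastore-01", "size_mb": 2097152, "state": "Attached"},
    {"name": "pe-b", "size_mb": 1, "state": "Dead or Error"},
]
print(find_protocol_endpoints(devices))
# (['pe-a'], ['pe-b'])
```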

Can’t Create a VM on the VVol datastore and/or it reports as 0 Size for Capacity

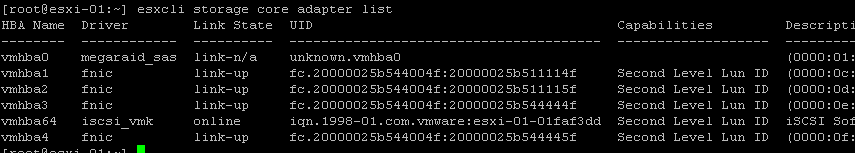

If all of the above checks out, another possibility is that the HBA drivers are out of date.

On the ESXi host, run esxcli storage core adapter list and check whether the adapter reports the “Second Level Lun ID” capability. If it does not, you need to update your HBA drivers in ESXi.

Without updated drivers, ESXi will not be able to see sub-LUNs (VVols) in the SCSI path.
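Checking many hosts by eye gets tedious, so here is a heuristic Python sketch that scans the text output of esxcli storage core adapter list for adapters whose row does not mention “Second Level Lun ID”. It assumes each adapter appears on a single line starting with its vmhba name, which may not hold for every ESXi version. Only adapters used for array connectivity (FC or iSCSI) matter here; local SATA/SAS controllers may also be flagged and can be ignored. The sample rows below are illustrative, not captured output, and adapters_missing_sll is a hypothetical name.

```python
CAPABILITY = "Second Level Lun ID"

def adapters_missing_sll(adapter_list_output):
    """Return vmhba names whose row lacks the Second Level Lun ID capability (line-based heuristic)."""
    missing = []
    for line in adapter_list_output.splitlines():
        fields = line.split()
        # Adapter rows start with their vmhba name; header and separator rows are skipped.
        if fields and fields[0].startswith("vmhba") and CAPABILITY not in line:
            missing.append(fields[0])
    return missing

sample = """\
HBA Name  Driver      Link State  UID          Capabilities         Description
vmhba0    vmw_ahci    link-n/a    sata.vmhba0                       local SATA controller
vmhba2    qlnativefc  link-up     fc.example   Second Level Lun ID  FC HBA
"""
print(adapters_missing_sll(sample))
# ['vmhba0']
```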

Beyond this there are a few other possibilities:

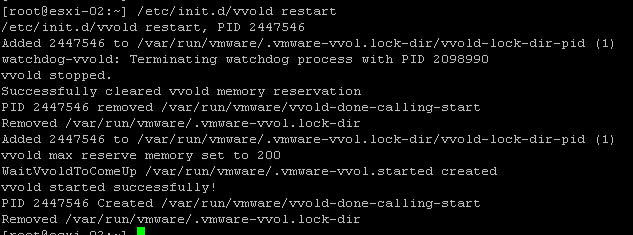

- The VVol daemon (vvold) on the ESXi host needs to be restarted. You can do this by running the following on the host via SSH:

/etc/init.d/vvold restart

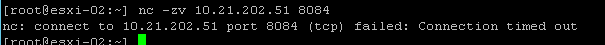

- The ESXi host cannot talk to the VASA provider. Use nc to make sure it can reach the provider on port 8084, as with vCenter above:

nc -zv <vasa IP> 8084

Note that if you get an error like the one below:

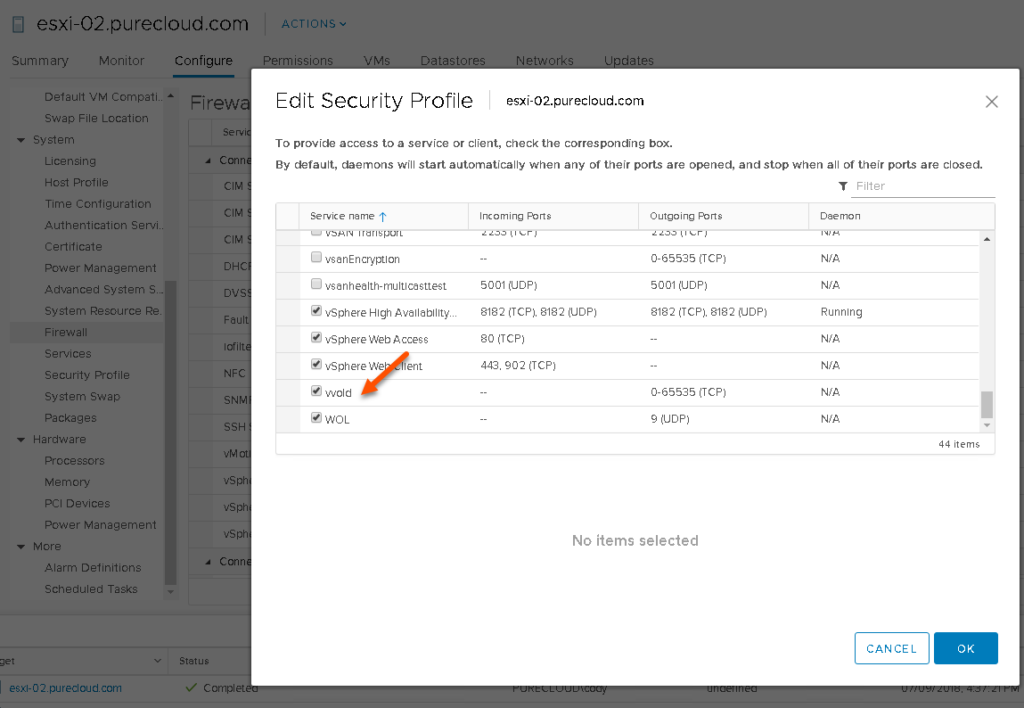

it might mean that an external firewall is blocking the port, or it could mean that ESXi itself is blocking traffic to that port. This is the default behavior if no VVol datastores have been mounted on that host: ESXi opens the port the first time a VVol datastore is mounted and closes it after the last one is unmounted. If this is the case, enable the vvold firewall rule in ESXi:

esxcli network firewall ruleset set -r vvold -e true

Then run the nc command again.

Lastly, there can be an issue with VASA information not being passed down to ESXi, in which case the ESXi host might report the provider as null in the vvold log. This issue is being worked on by VMware. Regardless, if this is the case, follow the refresh cert steps here:

Nice work Cody,

We ran into the issue where the VVol datastore was created but was showing as inactive in vSphere (vSphere 6.5 Update 2), and was also showing 0 bytes available.

After checking vvold.log, we found an SSL certificate error. We had to follow these steps to get it to work:

*Put the ESXi host into maintenance mode*: if you don’t, this process will not work.

SSH to the host.

Run the following commands:

cd /etc/vmware/ssl

mv rui.crt orig.rui.crt

mv rui.key orig.rui.key

/sbin/generate-certificates

Once that is complete, reboot the ESXi host.

Reconnect the ESXi host (you will be prompted to accept the new certificate for the host when reconnecting it to vCenter).

SSH to the host.

Run the following command:

/etc/init.d/vvold ssl_reset

Then run this command to see if the host can see the Pure PE

esxcli storage vvol protocolendpoint list

If you don’t see it listed, check vvold.log:

cd /var/log

less vvold.log

Good Luck!

Glad to hear it cleared up! Though I am curious: did you try /etc/init.d/vvold ssl_reset without doing the other steps? Usually that works. What version of vSphere are you on as well? The issues that cause this have mostly been cleared up in 6.7.

We created a 14 TB VVol datastore that already takes all the space on the Nimble storage, and there are VMs on it. Is it possible to shrink the current VVol datastore and create another one?

Thanks

Hello Jay,

The VVol folder itself is just a storage container with a limit you set. The VMs that get provisioned are essentially thin provisioned. You should be able to resize (shrink or grow) the VVol storage container (folder) if required. Please reach out to me if you need further help or have questions.

Thanks

Bharath ( TME HPE Nimble Storage )

Thanks Bharath for answering!

Thank you Cody!

I had just installed 2 new hosts in a 6.7 U3 cluster and I could not get the PE to show up on the hosts. Thanks to you, I was able to go down the list of troubleshooting steps, and of course, it was the last step in your blog that fixed it. When I couldn’t refresh the CA certificate on the host (a bug), I suspected it might be something with the VMCA. I installed the latest patch on the host, and this time the CA certificate refreshed successfully. Five seconds later, the PE popped up on the host.

Thanks again!

Great to hear!