vSphere 6.7 core storage “what’s new” series:

- What’s New in Core Storage in vSphere 6.7 Part I: In-Guest UNMAP and Snapshots

- What’s New in Core Storage in vSphere 6.7 Part II: Sector Size and VMFS-6

- What’s New in Core Storage in vSphere 6.7 Part III: Increased Storage Limits

- What’s New in Core Storage in vSphere 6.7 Part IV: NVMe Controller In-Guest UNMAP Support

- What’s New in Core Storage in vSphere 6.7 Part V: Rate Control for Automatic VMFS UNMAP

- What’s New in Core Storage in vSphere 6.7 Part VI: Flat LUN ID Addressing Support

A while back I wrote a blog post about LUN ID addressing and ESXi, which you can find here:

ESXi and the Missing LUNs: 256 or Higher

In short, ESXi previously supported only one mechanism of LUN ID addressing, called “peripheral”. The SCSI Architecture Model (SAM) generally encourages a different mechanism, called “flat”, especially for larger LUN IDs (256 and above). If a storage array used flat addressing, ESXi would not see LUNs from that target. This is often why ESXi could not see LUN IDs greater than 255: arrays would switch to flat addressing for LUN IDs of 256 or higher.
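To make the difference concrete, here is a minimal Python sketch of how the two methods differ in the SCSI LUN field. It relies on the SAM layout, where the top two bits of the first byte select the address method (00b for peripheral, 01b for flat) and the next 14 bits carry the value. The function names are hypothetical, and only the first two bytes of the 8-byte field are modeled.

```python
PERIPHERAL = 0b00  # SAM address method code: peripheral device addressing
FLAT = 0b01        # SAM address method code: flat space addressing


def encode_lun(lun_id: int, method: int) -> bytes:
    """Return the first two bytes of the 8-byte SCSI LUN field.

    Simplification: the 14 bits after the method code are treated as one
    value. In the peripheral format SAM calls the upper six of those bits
    the bus identifier; they are zero for LUN IDs 0-255.
    """
    if method not in (PERIPHERAL, FLAT):
        raise ValueError("only peripheral and flat are modeled here")
    if not 0 <= lun_id <= 0x3FFF:
        raise ValueError("value does not fit in 14 bits")
    return bytes([(method << 6) | (lun_id >> 8), lun_id & 0xFF])


def address_method(lun_field: bytes) -> str:
    """Name the address method encoded in the top two bits of byte 0."""
    code = lun_field[0] >> 6
    return {PERIPHERAL: "peripheral", FLAT: "flat"}.get(code, "other")


if __name__ == "__main__":
    for method in (PERIPHERAL, FLAT):
        field = encode_lun(245, method)
        print(address_method(field), field.hex())
```

The same LUN ID therefore produces different bytes depending on the method, which is why an initiator that only understands one method can miss a LUN that the array is presenting correctly.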

ESXi 6.7 adds support for flat addressing.

With ESXi 6.5 supporting 512 devices and 6.7 supporting 1,024, this issue was increasingly likely to crop up.

Let’s run through an example with ESXi 6.5.

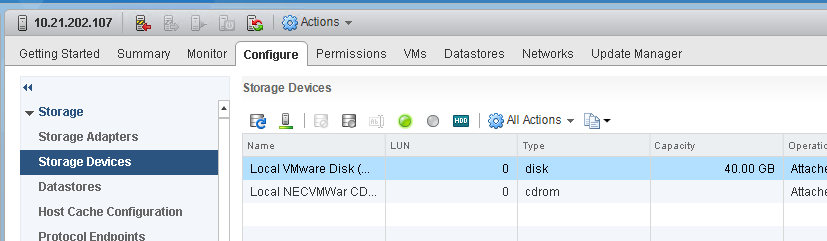

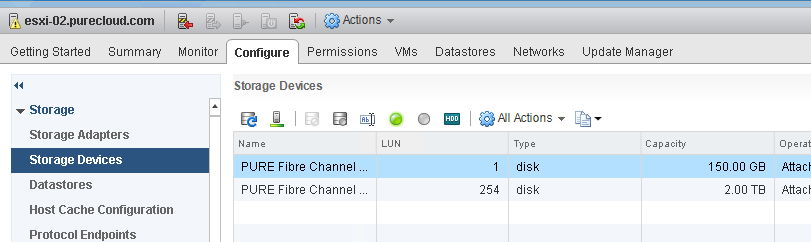

I have a host that does not see any SAN storage yet.

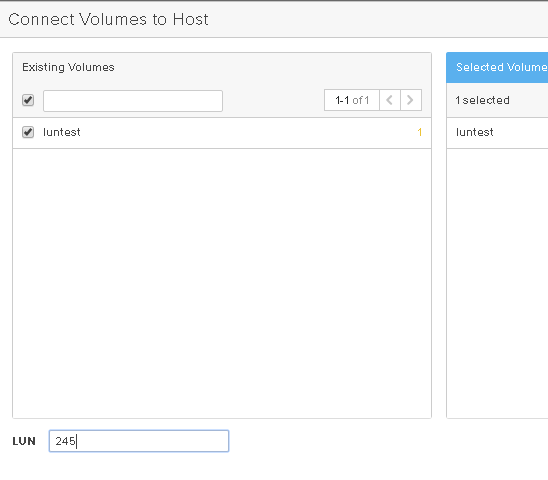

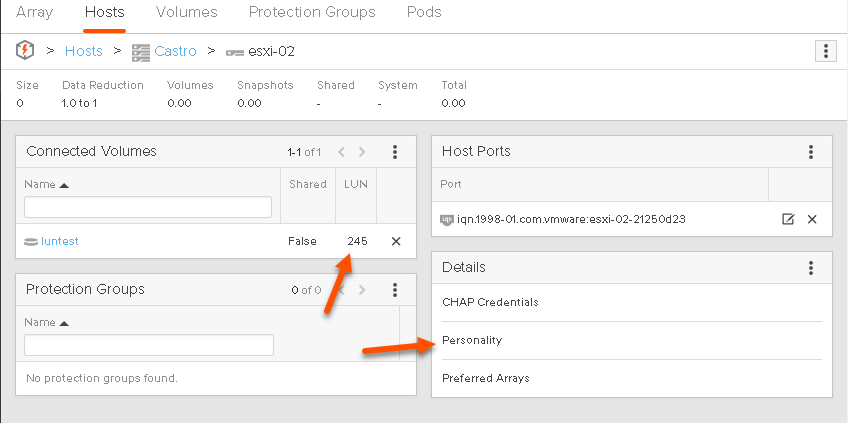

Next I will present a volume to my host at LUN ID 245 from my array, using the default behavior, which is to present via peripheral addressing.

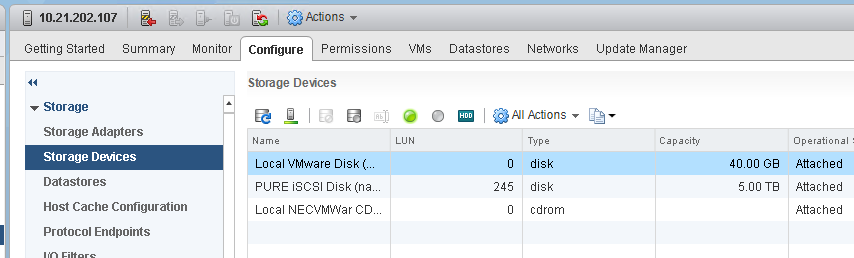

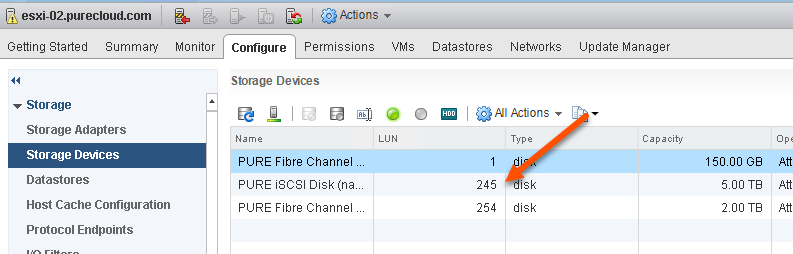

If I rescan my host I will see the volume with LUN ID 245:

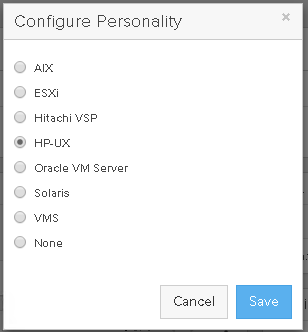

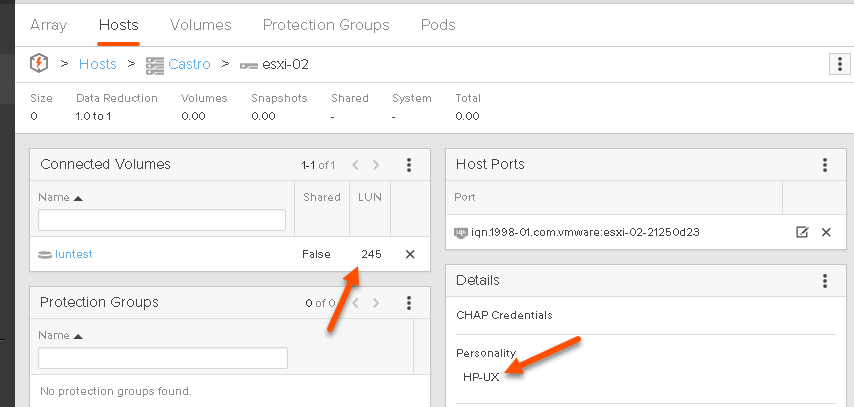

Now on my array I am going to force the LUN addressing scheme to flat instead of peripheral. On the FlashArray I can achieve this by changing the host personality for my host's initiators. The HP-UX host personality does this, because HP-UX requires all LUN IDs (large or small) to be addressed via the flat method.

Side note:

DO NOT DO THIS ON YOUR ARRAY–NEVER ASSIGN A HOST PERSONALITY FOR THE INCORRECT HOST.

Once I remove and re-add the volume, the LUN is no longer visible, because ESXi 6.5 and earlier do not support flat addressing.

Let’s retry this test with ESXi 6.7.

I currently only have two LUNs presented to my ESXi 6.7 host.

Now I will add my volume to my host as LUN 245 with no personality set (so it will use peripheral).

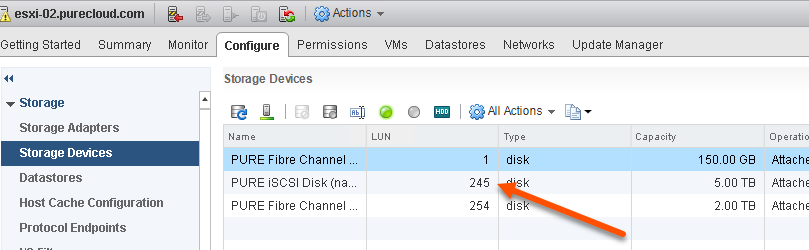

As expected my host sees it:

Now let’s re-present my volume via LUN ID 245 but this time with flat LUN addressing:

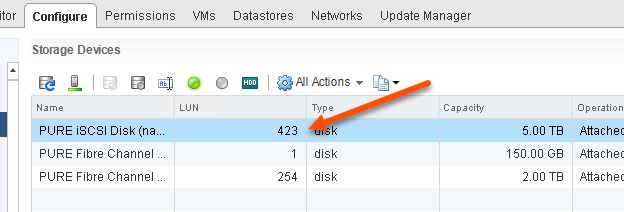

Then rescan:

This time the host sees it.

So if you had issues presenting LUNs to ESXi (especially LUNs with larger IDs), ESXi 6.7 can now handle them.

Some Specifics for Pure Customers

This is all interesting, but as a Pure customer, what if I want to present a LUN with a larger LUN ID?

For the FlashArray, the default behavior is that LUN IDs 1-255 use peripheral addressing and anything above that uses flat. So if I am not using 6.7, how can I get ESXi to see a larger LUN ID?

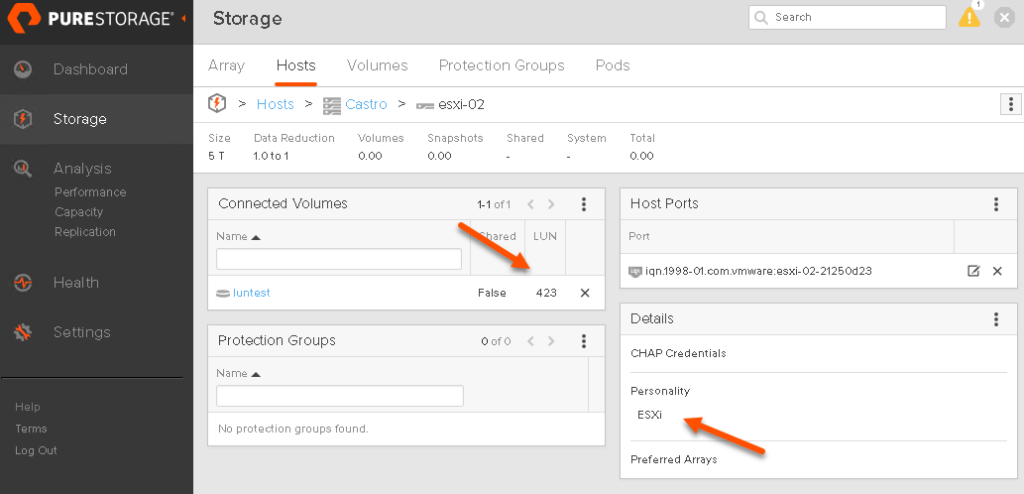

In Purity 5.1 we implemented a host personality for ESXi that changes a few things, one of which is the LUN addressing scheme. When it is set, the array uses peripheral LUN addressing even for larger LUN IDs. So if you set the host personality to ESXi, you will be able to see larger LUN IDs:

After a rescan we see it:

So the question is, do I need to set this if I am using 6.7+?

Yes. First off, it does more than just change the LUN addressing scheme. It also changes some PDL behavior for ActiveCluster, so it is important for ActiveCluster (active-active replication) users to set it. Furthermore, we are likely to add more changes to this personality over time, so setting it is still recommended.

NOTE: Do not set this personality on a host while it is running active I/O to that array. Set it only when the host is in maintenance mode, or at least not using that particular array. This requirement will change in the future, but as of Purity 5.1.2 it is still a rule.
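The visibility rules described in this post can be summarized in a small Python sketch. It is only a model of the behavior described above, not anything that runs on the array or host: the function names and the personality labels (`"esxi"`, `"hpux"`) are hypothetical, and device-count limits are not modeled.

```python
from typing import Optional, Tuple


def flasharray_addressing(lun_id: int, personality: Optional[str] = None) -> str:
    """Addressing method the FlashArray uses, per the rules in this post."""
    if personality == "esxi":
        return "peripheral"  # ESXi personality: peripheral even for large IDs
    if personality == "hpux":
        return "flat"        # HP-UX personality: flat for every LUN ID
    # Default behavior: peripheral up to 255, flat above that.
    return "peripheral" if lun_id <= 255 else "flat"


def esxi_sees_lun(
    esxi_version: Tuple[int, int],
    lun_id: int,
    personality: Optional[str] = None,
) -> bool:
    """True if ESXi at (major, minor) should see the LUN after a rescan."""
    method = flasharray_addressing(lun_id, personality)
    # Flat addressing is only understood by ESXi 6.7 and later.
    return method == "peripheral" or esxi_version >= (6, 7)


if __name__ == "__main__":
    print(esxi_sees_lun((6, 5), 245))          # default personality, small ID
    print(esxi_sees_lun((6, 5), 245, "hpux"))  # forced flat on 6.5
    print(esxi_sees_lun((6, 7), 245, "hpux"))  # forced flat on 6.7
    print(esxi_sees_lun((6, 5), 300, "esxi"))  # large ID with ESXi personality
```

The four calls mirror the tests walked through above: peripheral on 6.5, flat on 6.5, flat on 6.7, and a larger LUN ID with the ESXi personality set.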

Excellent Article Cody!

It explains the details that are missing from the Purity release notes.

Note, though, that Purity 5.0.7 also includes host personality support for configuring LUN IDs greater than 255 for ESXi hosts.