This is part 2 of a 7-part series. The parts are:

- Mounting an unresolved VMFS

- Why not force mount?

- Why might a VMFS resignature operation fail?

- How to correlate a VMFS and a FlashArray volume

- How to snapshot a VMFS on the FlashArray

- How to mount a VMFS FlashArray snapshot

- Restoring a single VM from a FlashArray snapshot

This is a topic I looked into years ago, mainly around ESX 4.0, when VMware changed how unresolved VMFS volumes were handled by allowing them to be resolved selectively. Recently I have had some customers working with array-based snapshots who ran into issues that prevented them from mounting the snapshots at all, or from mounting them the way they wanted. The problem is that I forgot most of the caveats from that testing because it was way before I started this blog. And as that quote popularized by Mythbusters’ Adam Savage goes, the only difference between screwing around and science is writing it down.

So let’s write it down so I can’t forget it all again. That show is in its last season right now. I will certainly miss it! But anyway, I digress. Force mounting: don’t do it, and here is why.

So why not force-mount? It sounds like the more convenient option, and I suppose it is in the short term.

A benefit of force-mounting is that the existing objects see the copy as the same volume as the original. Suppose a VM was running on a volume on an array and someone accidentally (or maliciously!) deleted the volume. That VM becomes inaccessible. Luckily you have a snapshot, so you take that snapshot and present it back to the same ESXi host. But the copy has a different serial number, so there is a mismatch. If you resignatured it, you would need to unregister the inaccessible VM, then browse the resignatured VMFS and register the VM again. This would make both the VMFS and the VM look new, so a lot of historical data for both objects would be lost. If you force-mount it instead, the UUID stays the same (the UUID is what VMs use to recognize their storage), so the VM sees that its storage is back and can be booted without having to unregister and re-register it.

But this operation has some limitations and some dangers that, in my opinion, override these benefits:

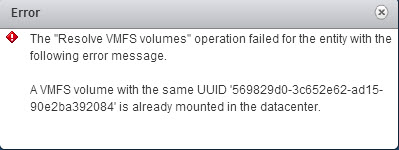

Situation 1: Original volume still being present blocks it

If the original VMFS is still mounted in that vCenter, you cannot force mount the copy at all. The force mount will fail: vCenter sees that volume already mounted and reports a pre-existing VMFS UUID error. If you run the following CLI command, you will see that it cannot be force mounted, along with the specific reason.

    [root@csg-vmw-esx3:~] esxcli storage vmfs snapshot list
    569829d0-3c652e62-ad15-90e2ba392084
       Volume Name: VMFSVolume
       VMFS UUID: 569829d0-3c652e62-ad15-90e2ba392084
       Can mount: false
       Reason for un-mountability: the original volume is still online
       Can resignature: true
       Reason for non-resignaturability:
       Unresolved Extent Count: 1
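If you want to check this across several hosts without eyeballing each one, the output is easy to parse. Here is a minimal Python sketch, assuming the command prints a bare UUID heading followed by one `Key: value` pair per line. The function names `parse_snapshot_list` and `summarize` are my own for illustration, and the sample text is just the output from this post pasted into a string, not a live call to the host.

```python
# Sample text copied from the esxcli output in this post (prompt line removed).
SAMPLE_OUTPUT = """\
569829d0-3c652e62-ad15-90e2ba392084
   Volume Name: VMFSVolume
   VMFS UUID: 569829d0-3c652e62-ad15-90e2ba392084
   Can mount: false
   Reason for un-mountability: the original volume is still online
   Can resignature: true
   Reason for non-resignaturability:
   Unresolved Extent Count: 1
"""


def parse_snapshot_list(text):
    """Turn the listing into one dict per unresolved volume.

    Assumption: a line without a colon is the UUID heading that starts
    a new volume, and every other non-empty line is "Key: value".
    """
    volumes = []
    current = None
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line:
            continue
        if ":" not in line:
            # Bare heading line: begin a new volume entry.
            current = {}
            volumes.append(current)
            continue
        if current is None:
            # Tolerate output that has no heading line at all.
            current = {}
            volumes.append(current)
        key, _, value = line.partition(":")
        current[key.strip()] = value.strip()
    return volumes


def summarize(volume):
    """Describe whether a parsed volume can be force mounted."""
    name = volume.get("Volume Name", "<unknown>")
    if volume.get("Can mount") == "true":
        return f"{name}: can be force mounted"
    reason = volume.get("Reason for un-mountability") or "no reason given"
    return f"{name}: cannot be force mounted ({reason})"


if __name__ == "__main__":
    for vol in parse_snapshot_list(SAMPLE_OUTPUT):
        print(summarize(vol))
```

In practice you would feed it whatever text you captured from each host over SSH; how you collect that text is up to you.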

If you attempt to force mount it anyways, it will fail with the following error

Since an object with the same UUID already exists in that vCenter this mount will not be allowed because duplicate UUIDs could cause all kinds of problems. Note though, a resignature will work here because it would mount with a new UUID.

Situation 2: vCenter will only force mount on one host

So let’s say you force mounted the volume in order to recover from the loss of the original, or at a DR/test/dev site. When you create a VMFS for the first time in a cluster, you format it from one host, but all other hosts in that cluster are rescanned, and every host that the physical device is presented to mounts it automatically. The same thing happens if you resignature a volume: every host that has access to the device will auto-mount it, because from a management point of view a resignature is kind of like creating a new VMFS (though of course a new VMFS is empty, while a resignatured volume has data on it).

Force mount is different. You force mount the volume on one host, but even if you rescan the other hosts it remains unmounted on them, because those hosts still see the volume as unresolved: the UUID is still a mismatch. If you then try to force-mount that volume on another host within vCenter, vCenter will block it, because that UUID is already mounted somewhere in that vCenter and it cannot guarantee the copy does not contain different data.

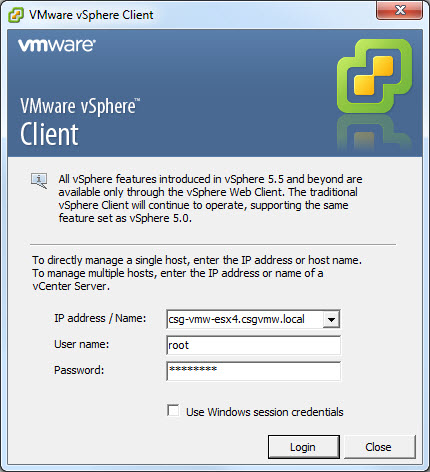

You can, however, log in directly to each ESXi host over the CLI or the legacy vSphere Client and force mount it one host at a time. So while it is possible to present it to all of the hosts in a cluster as a force mount, it is tedious.

Situation 3: An accidental resignature causes data unavailability

This is the big one in my opinion. If you force mount a volume on one host, that volume can accidentally be resignatured by another host, and the force-mounted volume (and its VMs) will become inaccessible because its UUID was changed underneath it. Your VMs will go offline because they can no longer find their storage, and you will have to follow the unregister/register procedure you might have been trying to avoid in the first place. For example:

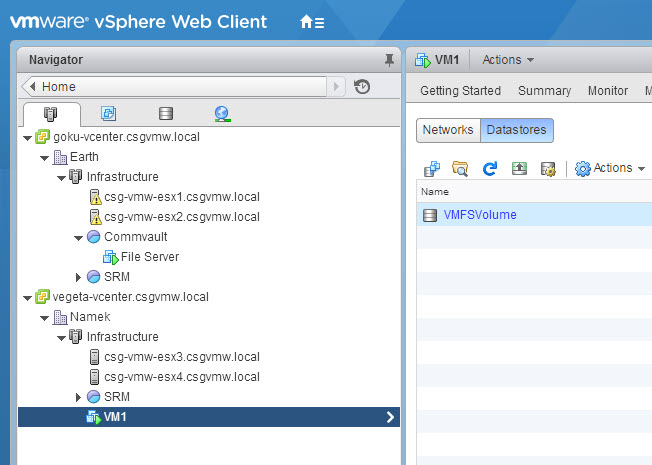

I have a VM named VM1 on my VMFS named VMFSVolume and that volume is force mounted.

The volume is only mounted on one ESXi host because, as mentioned earlier, vCenter will not allow me to force-mount it on more than one host. So I need to log in directly to the ESXi server to force mount it on other hosts, which I do with the C# vSphere Client.

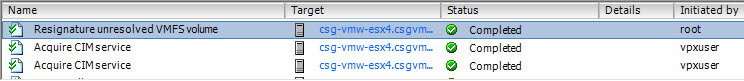

I mean to force mount, but accidentally click Assign New Signature, which resignatures the volume. This changes the UUID on the VMFS, so every host using it is affected by the resignature, including the one that force mounted it using the original UUID.

You can see the resignature operation completed successfully on this host. Even though the first host has it force mounted with the original UUID and has a VM running on it, it does not prevent a new host from resolving the signature mismatch and giving it a new UUID.

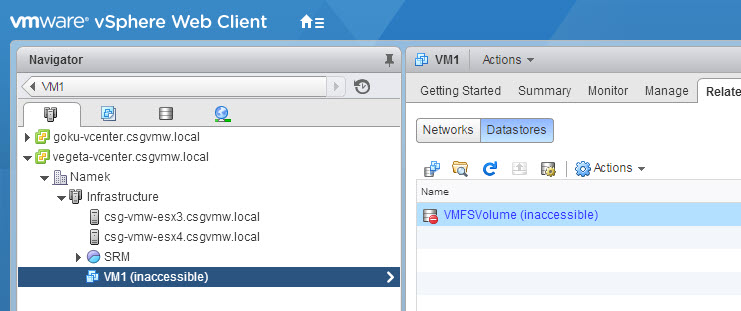

If we look back at our VM running on the datastore we just resignatured we see, oops, it is now inaccessible, as is its storage, since that UUID is gone.

So now we have to restore it somehow. Besides an accidental resignature due to a misclick, this can also happen in other ways, such as enabling auto-resignaturing on an ESXi host, which forces the host to immediately resignature any volume it sees as unresolved, without user intervention. The hidden advanced host-wide setting (LVM.EnableResignature) can still be turned on by an admin or through some advanced settings directly available in Site Recovery Manager.

So there is more than one way this can accidentally happen.

Situation 4: A force mounted VMFS prevents resignaturing of additional copies

If you force mount a volume on any host in a vCenter, you will be unable to resignature additional copies of that VMFS volume in vCenter until that volume is gone or is itself resignatured. In order to resignature additional copies you have to log in directly to one of the ESXi hosts that has access to the unresolved VMFS you want to resignature. You can resignature it once from there.

This may not seem like a big deal, but a lot of third-party applications that manage array-based snapshots of VMFS volumes, like SRM or Commvault or our Web Client Plugin, use the vCenter API to mount volumes, and they all use resignaturing. They will all fail to mount their volumes in the presence of a force mounted VMFS from the same source as their unresolved VMFS. That makes troubleshooting ugly, and it raises the question: is there a way to quickly identify a VMFS that has been force mounted?

Identifying force mounted volumes

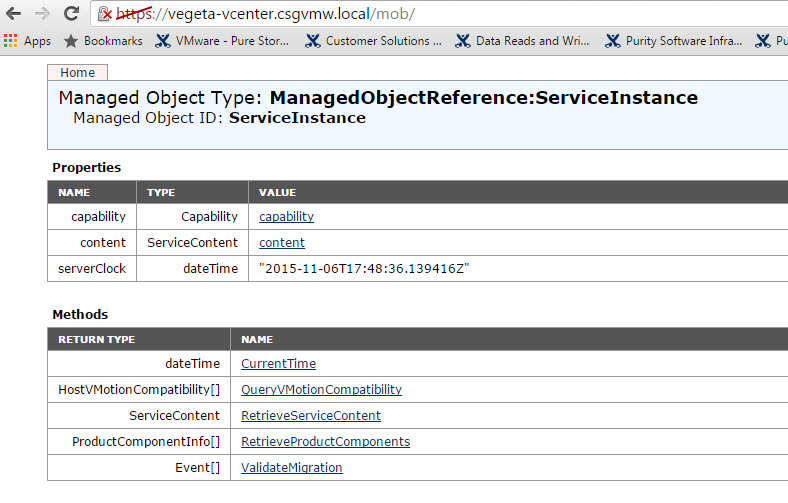

So how do I locate volumes in my environment that are force mounted? Well, this one took me a bit of time to figure out, but in the end it is pretty simple. I could not find it in the normal CLI or GUI, but you can locate this information in the Managed Object Browser (MoB) for vCenter. The MoB can be found by taking your vCenter IP or FQDN and adding /mob to it. So my web address for the MoB is:

https://vegeta-vcenter.csgvmw.local/mob

This takes you to a splash page where you can find all kinds of information…if you know where to look.

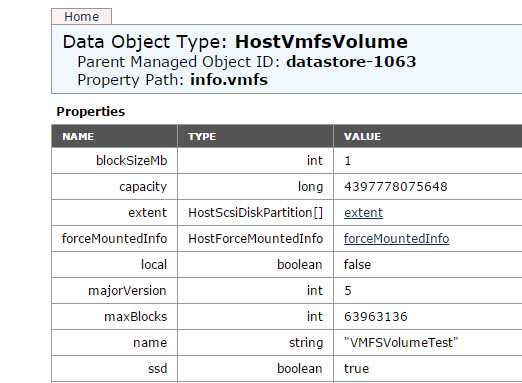

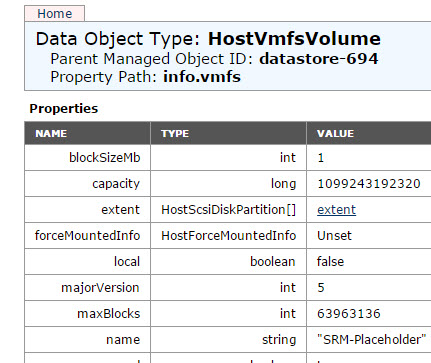

Click on the “content” link, then under “root folder” click on the object that looks like “group-d1 (Datacenters)”. Then, in the “childEntity” row, click on the datacenter in which you might have a datastore that is force mounted. You will see a listing of datastores in the datastore row. Click on one, then click on the “info” link and then the “vmfs” link. You will now see a page that lists a row called “HostForceMountedInfo”. If it says “unset”, the datastore is not force mounted on any host.

If it has a link called “forceMountedInfo” it is force-mounted somewhere.

But this is definitely a horrible way to look. This should be automated. PowerCLI to the rescue!

I wrote a script to do it for you. Enter your credentials and vCenter and then let it run. It will spit out a report of any force mounted volumes and what hosts they happen to be force mounted to.

https://github.com/codyhosterman/powercli/blob/master/findforcemountedVMFS.ps1

The script basically takes all of the datastores in your vCenter and then iterates through them and checks the value. All of the MoB data can be found in the ExtensionData of the datastore object like below. Look familiar? It is the same path essentially from the MoB. I basically check to see if the returned value is true.

$datastore.ExtensionData.Info.Vmfs.ForceMountedInfo.Mounted
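To make the shape of that check a bit more concrete, here is a rough Python sketch of the same test. It is not my PowerCLI script and it does not talk to vCenter: the datastores are stand-in objects built by a hypothetical `make_datastore` helper, and the attribute names (`info`, `vmfs`, `forceMountedInfo`, `mounted`) are assumed to follow the lower-camel-case spelling you see in the MoB. It also only answers "is this datastore force mounted?" and does not report which hosts it is force mounted on.

```python
from types import SimpleNamespace


def is_force_mounted(datastore):
    """Return True only if info.vmfs.forceMountedInfo.mounted is true.

    Each step tolerates a missing or unset value, so non-VMFS datastores
    and datastores showing "unset" in the MoB simply return False.
    """
    info = getattr(datastore, "info", None)
    vmfs = getattr(info, "vmfs", None)
    force_info = getattr(vmfs, "forceMountedInfo", None)
    return bool(getattr(force_info, "mounted", False))


def make_datastore(name, mounted=None, has_vmfs=True):
    """Hypothetical helper that builds a stand-in datastore object.

    mounted=None models the "unset" case; has_vmfs=False models a
    datastore type with no vmfs property at all (for example NFS).
    """
    if not has_vmfs:
        return SimpleNamespace(name=name, info=SimpleNamespace())
    force_info = None if mounted is None else SimpleNamespace(mounted=mounted)
    vmfs = SimpleNamespace(forceMountedInfo=force_info)
    return SimpleNamespace(name=name, info=SimpleNamespace(vmfs=vmfs))


# Illustrative inventory; only the first two names come from this post.
datastores = [
    make_datastore("VMFSVolumeTest", mounted=True),
    make_datastore("forcedmount", mounted=True),
    make_datastore("normal-vmfs"),                 # forceMountedInfo unset
    make_datastore("nfs-share", has_vmfs=False),   # no vmfs property
]

force_mounted_names = [ds.name for ds in datastores if is_force_mounted(ds)]

if __name__ == "__main__":
    for name in force_mounted_names:
        print(f"The following datastore is force-mounted: {name}")
```

The defensive `getattr` chain is the important part: an unset value has to be treated as "not force mounted" rather than as an error.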

Your log file will look something like:

    Looking for Force Mounted Volumes
    Connected to vCenter: vegeta-vcenter.csgvmw.local
    ----------------------------------------------------------------
    The following datastore is force-mounted: VMFSVolumeTest
    The datastore is force-mounted on the following ESXi hosts
    csg-vmw-esx3.csgvmw.local
    csg-vmw-esx4.csgvmw.local
    ----------------------------------------------------------------
    The following datastore is force-mounted: forcedmount
    The datastore is force-mounted on the following ESXi hosts
    csg-vmw-esx4.csgvmw.local

You can set the log folder location to wherever you like. The file names itself with a date and time prefix followed by forcemount.txt.

So long story short I do not recommend force mounted volumes.
